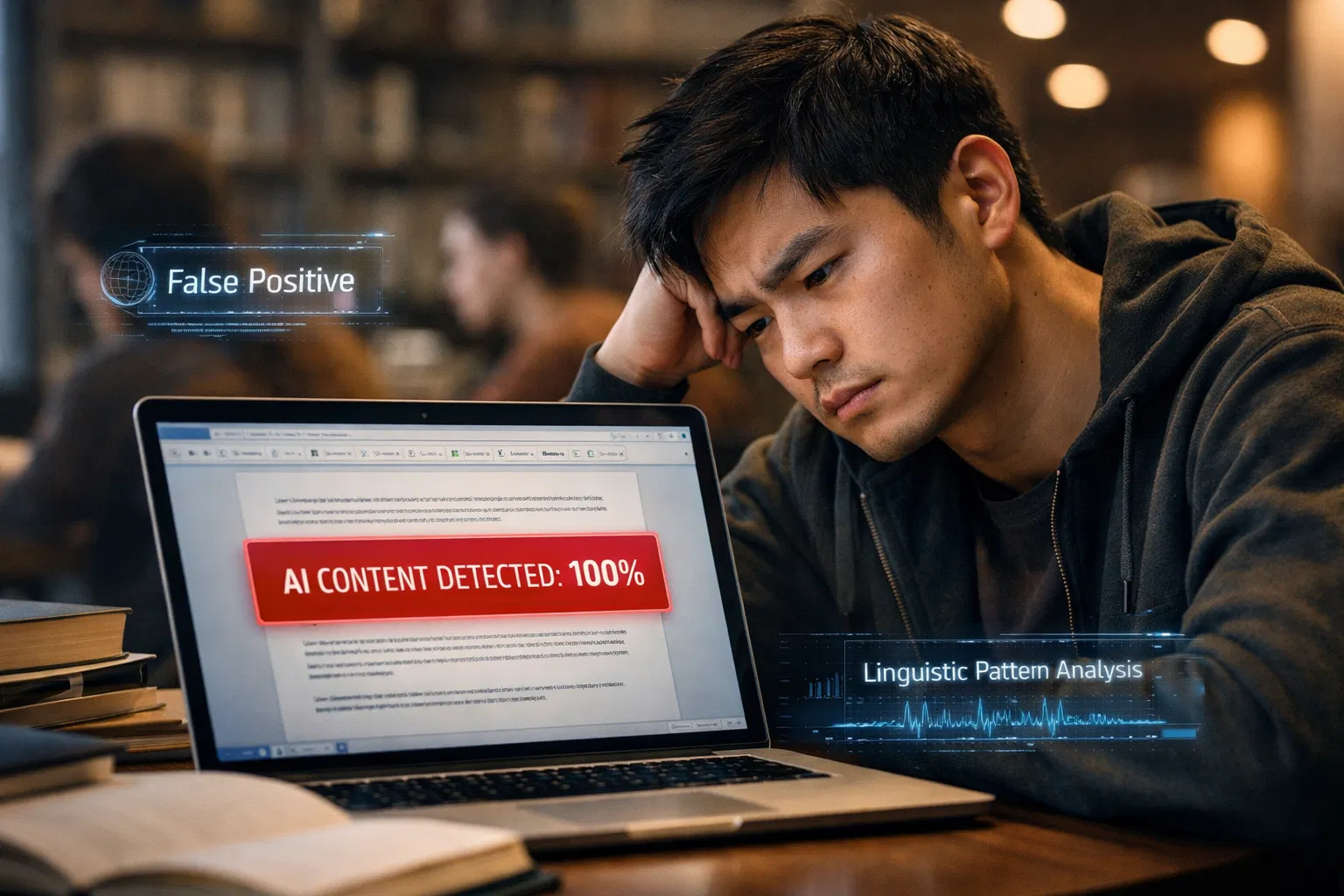

In April 2025, the Federal Trade Commission filed a complaint against Workado LLC, the company behind the AI Content Detector formerly marketed as Content at Scale AI. The complaint described a company that had been advertising its tool as 98 percent accurate at detecting AI-generated text. The FTC's investigation found that the tool's actual accuracy rate on general-purpose content was 53 percent. In the words of Chris Mufarrige, Director of the FTC's Bureau of Consumer Protection: "Consumers trusted Workado's AI Content Detector to help them decipher whether AI was behind a piece of writing, but the product did no better than a coin toss."

Workado is not an anomaly; it is simply the case the FTC investigated. The broader pattern it represents, detection tools claiming accuracy rates that bear little relationship to real-world performance on diverse content, is the norm in this market, not the exception. The gap between what vendors publish in their marketing materials and what independent academic research and real-world testing consistently find is large, systematic, and consequential for every student, researcher, writer, and professional whose work is evaluated using these tools.

This article examines that gap in detail: how vendors construct benchmark figures, what independent studies actually find, the specific methodology choices that make vendor numbers misleading, and what writers need to understand about the real reliability of the systems being used to judge their work. Understanding this gap is practical knowledge for anyone using an AI text humanizer or facing an accusation based on a detection score.

Key Takeaways

The FTC took enforcement action in 2025 against an AI detection vendor for claiming 98 percent accuracy when independent testing showed 53 percent accuracy, a gap the FTC alleged amounted to deceptive advertising under Section 5 of the FTC Act. This is the clearest regulatory signal to date that accuracy claims in the AI detection market require the same substantiation standard as any other advertising claim.

OpenAI, the company that built the most widely used large language models, shut down its own AI text classifier in July 2023 citing a "low rate of accuracy." The tool correctly identified only 26 percent of AI-written text while falsely flagging 9 percent of human-written text as AI-generated. The company behind the technology most commonly being detected could not build a reliable detector for its own output.

The Washington Post tested Turnitin in 2023 on a small sample and found a 50 percent false positive rate, directly contradicting Turnitin's publicly stated claim of less than 1 percent false positives. Turnitin explicitly acknowledged a tradeoff: its AI checker can miss roughly 15 percent of AI-generated text in a document because it deliberately calibrates to minimize false positives. The 1 percent and 50 percent figures can both be true at different threshold settings and across different content populations.

Vendor-published accuracy figures are almost always measured on controlled benchmark datasets: balanced samples of clearly AI-generated text and clearly human-written text, with thresholds set to optimize the reported metric. Real-world content is none of these things. It includes mixed documents, edited AI content, formally written human text, and writing by populations whose style overlaps with AI output. Accuracy on a controlled benchmark routinely overstates real-world accuracy by 20 to 40 percentage points, depending on the content type.

The populations most affected by the gap between vendor claims and real-world performance are those least likely to be represented in vendor benchmark datasets: non-native English speakers, formally trained academic writers, neurodivergent writers, and writers who use grammar-checking tools. These groups face false-positive rates that, in some studies, are 10 to 20 times the vendor-reported headline figures. Using humanized AI content tools to adjust the statistical properties of genuine writing helps mitigate the consequences of this gap, regardless of what any vendor claims its detection rate is.

The FTC Action: The Moment a Regulator Looked at the Numbers

The Workado case is worth examining in detail because it is the clearest documented example of what happens when someone with investigative authority looks behind a vendor's accuracy claim.

The FTC's April 2025 complaint against Workado describes a company that marketed its AI Content Detector as "one of the most trusted" detection tools, claiming 98.3 percent accuracy in distinguishing between AI-generated and human-written text. The company claimed the tool was trained on a wide range of material, including blog posts and Wikipedia entries, to serve a general user base.

The FTC's investigation found that the AI model powering the detector was not built, trained, or fine-tuned by Workado. It was pulled from Hugging Face, an open-source repository. That model was trained almost exclusively on academic content, not on the blog posts and Wikipedia entries Workado described. On general-purpose content, the best accuracy result the company's own testing produced was 74.5 percent. On non-academic content, accuracy dropped to 53.2 percent. This was not a marginal discrepancy. It was a 45-percentage-point gap between what was advertised and what the tool delivered.

The FTC finalized its consent order in August 2025, requiring Workado to stop making accuracy claims without competent and reliable evidence, to retain that evidence for future claims, and to notify its customers that the company had settled charges of false or unsubstantiated advertising. The draft notification letter sent to customers stated: "We claimed that our AI Content Detector will predict with a 98% accuracy rate whether text was created by AI content generators like ChatGPT, GPT4, Claude, and Bard. The FTC says we didn't have proof to back up those claims."

Why This Matters Beyond Workado: The FTC action against Workado is significant not because it was a uniquely bad actor, but because the investigation revealed what is routine in this market: accuracy figures that are specific to narrow content categories advertised as general performance, benchmark results conducted on controlled datasets presented as representative of real-world use, and no independent third-party verification of the claims. Workado got caught. The broader question is how many other tools are operating on the same pattern. Using statistical adjustment tools that bypass AI detectors is not circumventing legitimate quality assurance. It is protecting against a measurement system whose reliability claims have been shown, in at least one regulatory investigation, to be unsubstantiated.

What Vendors Actually Claim and What Those Numbers Mean

Almost every major AI detection vendor publishes accuracy figures in the high 90s. Understanding what these numbers actually represent requires reading the methodology footnotes that vendors rarely emphasize in their marketing materials.

Originality.ai describes its September 2025 model release as achieving "notable 99% accuracy on leading flagship AI models." Originality publishes more methodological detail than most competitors: its Lite version claims a 0.5% false positive rate, and its Turbo version claims 1.5%. This level of transparency is genuinely unusual in this market.

But even this comparatively transparent disclosure reveals the benchmark problem. When Originality says "99% accuracy on leading flagship AI models," it means accuracy on clearly AI-generated text from the specific models it tested. The false positive figure (0.5 to 1.5 percent) applies to the human writing in its benchmark dataset. What constitutes the human writing in that dataset determines everything, and benchmark datasets almost never represent the full range of real-world writing populations. Originality's aggressive calibration, designed for publisher use cases where the cost of publishing undisclosed AI content is considered higher than the cost of false positives, means its real-world false positive rate on diverse professional content has been measured at 5 to 9 percent in independent testing, three to eighteen times the vendor-reported figure depending on the source.

The Pattern Across the Market

The same pattern repeats across vendors. GPTZero reports 0.24 percent false positives on its controlled benchmark of 3,000 samples but acknowledges real-world rates are higher, particularly for non-native English writers and formal academic prose. Turnitin claims less than 1 percent false positives and is relatively transparent about its conservative calibration tradeoffs. Free tools like ZeroGPT do not publish false positive methodology at all, leaving users to discover rates through experience or independent research.

The regulatory principle established by the Workado case is that an accuracy claim must be substantiated by evidence that reflects the actual population the tool is being used on, not just the population it was tested on internally. This principle, if applied consistently, would require most AI detection vendors to substantially revise their published accuracy figures or the conditions under which they apply. Using an AI humanizer tool is not a bad-faith response to detection. It is a rational response to a market in which accuracy claims have been shown to be context-specific and often overstated.

What Independent Studies Actually Find

Beyond the FTC action, a consistent body of academic and independent research documents the gap between vendor claims and real-world performance.

OpenAI Shut Down Its Own Detector

In July 2023, OpenAI discontinued its own AI text classifier, citing a "low rate of accuracy." The classifier correctly identified only 26 percent of AI-generated text, while falsely flagging 9 percent of human-generated text as AI-generated. OpenAI, the company that produces the output that every AI detector is trying to identify, could not build a reliable detector for its own model's text. This is not a failure of execution. It reflects a genuine scientific difficulty: the statistical properties that distinguish AI output from human writing are not stable across content types, writing styles, and writing populations.

The Washington Post Turnitin Test

In 2023, the Washington Post tested Turnitin on a sample of human-written texts. The test found a 50 percent false-positive rate, directly contradicting Turnitin's publicly stated claim of less than 1 percent. Turnitin's response is reasonable: the Washington Post test had a small sample size, and Turnitin's calibration deliberately accepts more false negatives in exchange for fewer false positives. Turnitin confirmed that its AI checker can miss roughly 15 percent of AI-generated text in a document because it is calibrated conservatively. Both numbers, 1 percent and 50 percent, reflect the tool's behavior under different conditions: the 50 percent figure reflects what happens on specific types of formally written human text, while the 1 percent figure reflects the vendor's benchmark conditions.

The RAID Benchmark Study

A 2024 study by researchers from the University of Pennsylvania evaluated multiple detection tools on the RAID dataset, which contains diverse text types. Key findings: most detectors failed to maintain accuracy when false-positive rates were constrained below 1 percent, and became effectively useless below 0.5 percent. At specific operating points, observed false-positive rates ranged from 0.62 percent (Originality.ai) to as high as 16.9 percent (ZeroGPT). The study found that the fundamental tradeoff between sensitivity and specificity, which applies to all classifiers, is particularly acute in AI detection, and that the operating points vendors choose for their headline figures are often not representative of real-world deployment conditions.
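RAID-style evaluations report how much AI text a detector catches when its false-positive rate is capped at a fixed level. The following is a minimal Python sketch of that calculation, assuming a detector that outputs a score between 0 and 1 and flags any text scoring at or above a threshold. The function name `tpr_at_fpr_cap` and all scores are hypothetical, invented for illustration; they are not RAID data or any vendor's output.

```python
# Hypothetical sketch: true positive rate (TPR) at a capped false positive rate (FPR).
# All scores below are invented for illustration; they are not RAID results.

HUMAN_SCORES = [0.05, 0.10, 0.12, 0.20, 0.25, 0.30, 0.33, 0.40, 0.45, 0.50,
                0.55, 0.58, 0.60, 0.62, 0.65, 0.70, 0.72, 0.80, 0.85, 0.92]
AI_SCORES = [0.35, 0.48, 0.52, 0.57, 0.63, 0.68, 0.71, 0.75, 0.78, 0.81,
             0.83, 0.86, 0.88, 0.90, 0.91, 0.93, 0.95, 0.97, 0.98, 0.99]


def tpr_at_fpr_cap(human_scores, ai_scores, max_fpr):
    """Return (threshold, tpr, fpr) for the lowest threshold whose FPR <= max_fpr.

    A text is flagged as AI when its score is >= threshold. Raising the
    threshold can only lower FPR, so the lowest qualifying threshold gives
    the highest TPR achievable under the cap.
    """
    # Candidate thresholds: every observed score, plus one above the maximum,
    # which flags nothing and therefore always satisfies a non-negative cap.
    candidates = sorted(set(human_scores + ai_scores))
    candidates.append(candidates[-1] + 1e-9)
    for threshold in candidates:  # ascending order
        fpr = sum(s >= threshold for s in human_scores) / len(human_scores)
        if fpr <= max_fpr:
            tpr = sum(s >= threshold for s in ai_scores) / len(ai_scores)
            return threshold, tpr, fpr
    raise ValueError("max_fpr must be non-negative")


if __name__ == "__main__":
    for cap in (0.20, 0.05, 0.0):
        threshold, tpr, fpr = tpr_at_fpr_cap(HUMAN_SCORES, AI_SCORES, cap)
        print(f"FPR cap {cap:.2f}: threshold {threshold:.2f}, TPR {tpr:.2f}, FPR {fpr:.2f}")
```

With these made-up scores, the detector catches 70 percent of AI texts under a 20 percent FPR cap, 45 percent under a 5 percent cap, and 25 percent when no false positives are allowed. The direction of that decline, not the specific numbers, is the point: tightening the false-positive constraint costs sensitivity.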

The Perkins et al. 2024 Study

Perkins et al. (2024), published in the International Journal of Educational Technology in Higher Education, tested six detectors on AI-generated text from GPT-4, Claude, and Gemini. Baseline accuracy across all six detectors averaged 39.5 percent before any adversarial techniques were applied. After the researchers applied simple modifications, including paraphrasing and spelling variations, average accuracy dropped to 22.1 percent. Because the study was designed around academic integrity, the setting where these tools most often drive consequential decisions, its finding that baseline detection accuracy was below 40 percent for then-current AI models is especially relevant. That is a far cry from the 95 to 99 percent figures vendor marketing presents.

CyberScoop's coverage of the FTC's Workado investigation notes that Workado's tool was "no better than a coin flip" for general-purpose content and describes the FTC's conclusion that the tool's model was trained only to classify academic content, despite being marketed to general users. The parallel to other tools in the market is the relevant context: every tool has specific training conditions under which its accuracy is measured, and those conditions rarely match the full diversity of content submitted to it. Tools that beat AI detectors statistically are responding to measurement systems whose actual reliability is substantially lower than their marketing materials suggest.

How Benchmark Methodology Produces Misleading Numbers

Understanding why vendor benchmarks consistently overstate real-world accuracy requires understanding the specific methodology choices that produce inflated figures.

Controlled Dataset Selection

Vendor benchmarks typically use balanced datasets: roughly equal numbers of AI-generated and human-written texts. The AI-generated texts are unmodified outputs from specific models at specific temperatures. The human-written texts are from existing corpora that represent a narrow range of writing styles, typically English-language, adult-authored, native-speaker prose in a small number of genres. This balance and consistency are appropriate for measuring a tool's theoretical maximum performance, but do not represent the distribution of real-world submissions.

Threshold Selection

Most detection tools produce probability scores from 0 to 100 percent, with a threshold above which content is flagged. Vendors choose thresholds for their benchmark reports that optimize the headline accuracy figure. A tool might report 99 percent accuracy at a threshold that flags only very high-confidence cases, but that same threshold would miss the majority of AI-generated content in real submissions. At thresholds appropriate for practical academic use, the same tool's accuracy drops substantially. The Workado FTC complaint explicitly noted that the vendor reported best-case results, not typical results. Reducing AI detection risk by adjusting your content's statistical profile is effective across any threshold setting because it targets the underlying measurements rather than a specific threshold.
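The effect of threshold choice is easy to see with a toy calculation. The minimal Python sketch below assumes a detector that flags any text scoring at or above a threshold; the function name `rates_at_threshold` and both score sets are hypothetical. The "benchmark" set contains clear-cut texts, while the "harder" set stands in for formally written human prose and lightly edited AI output.

```python
# Hypothetical sketch: the same detector and thresholds evaluated on two score sets.
# All scores are invented for illustration.

# Clear-cut benchmark: human texts score low, unedited AI texts score high.
BENCHMARK_HUMAN = [0.02, 0.05, 0.08, 0.10, 0.15]
BENCHMARK_AI = [0.92, 0.95, 0.97, 0.98, 0.99]

# Harder mix: formally written human texts and lightly edited AI texts overlap.
HARDER_HUMAN = [0.10, 0.40, 0.65, 0.85, 0.93]
HARDER_AI = [0.30, 0.55, 0.80, 0.91, 0.96]


def rates_at_threshold(human_scores, ai_scores, threshold):
    """Return TPR, FPR and accuracy when texts scoring >= threshold are flagged as AI."""
    tp = sum(s >= threshold for s in ai_scores)     # AI texts correctly flagged
    fp = sum(s >= threshold for s in human_scores)  # human texts wrongly flagged
    tn = len(human_scores) - fp                     # human texts correctly passed
    return {
        "tpr": tp / len(ai_scores),
        "fpr": fp / len(human_scores),
        "accuracy": (tp + tn) / (len(ai_scores) + len(human_scores)),
    }


if __name__ == "__main__":
    for name, humans, ais in [("benchmark", BENCHMARK_HUMAN, BENCHMARK_AI),
                              ("harder", HARDER_HUMAN, HARDER_AI)]:
        for threshold in (0.5, 0.9):
            r = rates_at_threshold(humans, ais, threshold)
            print(f"{name:9} t={threshold}: TPR {r['tpr']:.2f}, "
                  f"FPR {r['fpr']:.2f}, accuracy {r['accuracy']:.2f}")
```

On the benchmark set, both thresholds score 100 percent accuracy. On the harder set, accuracy falls to 60 percent at either threshold: the high threshold misses most of the edited AI text and still flags one formal human text, while the low threshold catches more AI text at the cost of flagging three of the five human texts.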

Temporal Validity

AI models improve continuously. GPT-3 output is generally easier to detect than output from newer models such as GPT-5, because earlier models tend to produce more uniform, more predictable text. Vendor benchmarks conducted on older model output therefore systematically overstate accuracy on current model output. A tool benchmarked primarily on GPT-3.5 content in 2023 and reporting 95 percent accuracy is not necessarily 95 percent accurate on GPT-5 content in 2026. The Perkins et al. 2024 study found accuracy rates below 40 percent on content from GPT-4, Claude, and Gemini, the current-generation models at the time it was conducted.

Population Representation

Benchmark datasets almost never include representative samples of the writing populations most likely to face false positives: non-native English speakers, neurodivergent writers, formally trained academic writers, and writers who use grammar correction tools. A 2024 study at UC Davis found that 15 of 17 flagged students were false positives, with flagged students disproportionately non-native English speakers and writing center users. If this population were represented in vendor benchmarks in proportion to its share of academic submissions, vendor-reported false-positive rates would be substantially higher.
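The arithmetic behind that claim is a weighted average: a pooled false-positive rate is each group's false-positive rate weighted by its share of human-written submissions. The sketch below is a minimal Python illustration. The function name and the 70/30 population split are hypothetical, and the 61 percent figure is borrowed from the Liang et al. 2023 average for non-native English essays purely for illustration, not as a measured rate for any specific tool.

```python
# Hypothetical sketch: how a population mix changes a pooled false positive rate.
# Group shares and rates are illustrative assumptions, not measurements of any tool.


def pooled_false_positive_rate(groups):
    """Weighted average of per-group FPRs.

    groups: list of (share_of_human_submissions, group_fpr) pairs; shares must sum to 1.
    """
    total_share = sum(share for share, _ in groups)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("group shares must sum to 1")
    return sum(share * fpr for share, fpr in groups)


# Benchmark-style population: only native-speaker prose, at a 1% FPR.
benchmark = [(1.0, 0.01)]
# Hypothetical cohort: 70% native-speaker prose at 1%, 30% non-native writing at 61%.
cohort = [(0.7, 0.01), (0.3, 0.61)]

if __name__ == "__main__":
    for name, groups in [("benchmark", benchmark), ("cohort", cohort)]:
        rate = pooled_false_positive_rate(groups)
        flagged = round(1000 * rate)  # expected false flags per 1,000 human-written texts
        print(f"{name}: pooled FPR {rate:.1%}, ~{flagged} false flags per 1,000 human texts")
```

Under these assumptions, the pooled rate rises from 1 percent (about 10 false flags per 1,000 human-written texts) to 19 percent (about 190), even though the tool's behavior on native-speaker prose has not changed at all.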

Vendor Claims Versus What the Evidence Shows

| Tool | Vendor-Claimed Accuracy / FP Rate | What Independent Evidence Shows | Key Discrepancy |
|---|---|---|---|
| GPTZero | 99.3% accuracy; 0.24% false positive rate (own benchmark, 3,000 samples) | Higher FP rates in real-world conditions; elevated on ESL and formal writing; Perkins et al. found 39.5% baseline detection on modern AI models across tools | Benchmark is vendor-conducted on a controlled dataset; real-world rates are higher for specific populations |
| Turnitin | Less than 1% false positive rate; up to 99% accuracy | Washington Post test found 50% FP on specific human texts; misses ~15% of AI text by design; elevated on ESL writing | 1% FP is real under conservative calibration; the Washington Post 50% reflects a specific content type; both can be simultaneously true |
| Originality.ai | 99% accuracy; 0.5-1.5% false positive rate (own benchmark, September 2025) | Scribbr 2024 test found 76% overall accuracy; flagged a 2022 human blog post as 61% AI; independent testing shows 5-9% FP on professional content | Aggressive calibration accepted by design for publisher use; substantially higher FP on diverse human writing than the benchmark suggests |
| Copyleaks | Claims industry-leading 0.2% false positive rate; 90%+ accuracy | Independent testing suggests closer to 5% FP depending on content type; inconsistent results on technical and formulaic writing | Low FP claim holds on specific content types; less reliable on newer AI models and diverse writing styles |
| ZeroGPT | Does not publicly publish a standardized false positive methodology | RAID benchmark found FP rates as high as 16.9% at specific operating points; independent reviewers document high FP and easy evasion via paraphrasing | Without a published methodology, no claim can be verified and no FP figure can be checked against a vendor baseline |
| Workado (AI Content Detector) | Claimed 98% accuracy; "one of the most trusted" detectors | FTC found 53% accuracy on general-purpose content; trained almost exclusively on academic content; "no better than a coin toss" (FTC) | FTC-documented 45-percentage-point gap between claim and measured performance; resulted in a consent order |

The FTC's final consent order in the Workado case, issued in August 2025, requires Workado to stop making accuracy claims without competent and reliable evidence. The broader implication for the market: accuracy claims require the same substantiation standard as any other advertising claim. Under this standard, most AI detection vendors would need to substantially revise their published figures or the conditions to which they apply. Statistically adjusting genuine human writing so that it is not misread as AI-generated is a response to a market where the accuracy of the systems doing the measuring has been shown to fall significantly short of what is claimed.

The Data on Non-Native English Speakers: The Worst-Kept Secret

The most thoroughly documented instance of AI detection accuracy failure is the systematic bias against non-native English speakers. This is not a disputed finding. It is consistent across multiple independent studies and has been replicated with different detection tools, writing samples, and language backgrounds.

The Stanford Liang et al. 2023 study found that seven widely used GPT detectors misclassified an average of 61 percent of TOEFL essays written by non-native English speakers as AI-generated, while achieving near-perfect accuracy on essays by native English-speaking students. This 61 percent false-positive rate coexists with vendor-reported false-positive figures of 0.2 to 1.5 percent because those vendor figures are not measured on the non-native English-speaking population.

The mechanism is straightforward: AI detection measures statistical predictability in word choice and uniformity in sentence structure. Non-native English writers, writing carefully and correctly in a non-primary language, produce text with lower perplexity and lower burstiness than native English writing. Formal vocabulary and consistent grammatical precision, which characterize careful non-native English writing, are the same statistical properties that language models optimize for. The detector cannot distinguish between the predictability of AI-generated content and that of carefully written content in a non-primary language.
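To make the two measurements concrete, here is a crude Python sketch. It assumes "burstiness" can be approximated by the coefficient of variation of sentence lengths, and it uses the perplexity of a text's own word-frequency distribution as a rough stand-in for predictability. Real detectors score text under a large language model, which this sketch does not do; the function names are hypothetical, and the numbers it produces are not comparable to any vendor's scores.

```python
# Crude, illustrative proxies for "burstiness" and predictability.
# Real detectors compute perplexity under a large language model; this does not.
import math
import re
import statistics
from collections import Counter


def sentence_lengths(text):
    """Split on ., ! or ? followed by whitespace and count words per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(re.findall(r"[A-Za-z']+", s)) for s in sentences]


def burstiness(text):
    """Coefficient of variation of sentence length (0 means perfectly uniform)."""
    lengths = sentence_lengths(text)
    mean = statistics.mean(lengths)  # raises StatisticsError on empty text
    return statistics.pstdev(lengths) / mean if mean else 0.0


def unigram_perplexity(text):
    """exp(entropy) of the text's own word distribution; lower means more repetitive wording."""
    words = re.findall(r"[A-Za-z']+", text.lower())
    counts = Counter(words)
    n = len(words)
    entropy = -sum((c / n) * math.log(c / n) for c in counts.values())
    return math.exp(entropy)


if __name__ == "__main__":
    uniform = "The model is trained on data. The data is cleaned by hand. The results are checked by staff."
    varied = ("I ran. Then, after hours of arguing with the dataset about what it wanted, "
              "I finally gave up and went home. Why?")
    for name, text in [("uniform", uniform), ("varied", varied)]:
        print(f"{name}: burstiness {burstiness(text):.2f}, "
              f"unigram perplexity {unigram_perplexity(text):.1f}")
```

The uniform passage, with three six-word sentences, has a burstiness of exactly zero; the varied passage, with sentences of 2, 19 and 1 words, scores well above it. Careful, evenly structured prose lands toward the uniform end of this scale regardless of who wrote it.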

Institutions deploying AI detection in student populations with significant numbers of international students are relying on a class of tools that, in the Liang et al. study, misclassified an average of 61 percent of non-native English essays, while vendors advertise false-positive rates below 1 percent overall. This discrepancy is not disclosed in vendor marketing materials, not communicated to institutions in standard implementation guidance, and not acknowledged in most institutional AI detection policies. AI detection bypass tools that shift the statistical profile of ESL writing toward the native English range are correcting a documented bias, not enabling academic dishonesty.

Why Institutions Keep Using Tools Whose Limitations Are Documented

If the evidence for systematic false positive problems is documented, published in peer-reviewed journals, and confirmed by an FTC enforcement action, why do institutions continue deploying these tools in high-stakes academic integrity contexts without meaningful disclosure to students?

Liability Asymmetry

For an institution, the perceived risk of being seen as "soft on AI cheating" is more salient than the risk of false positive harm to individual students. Institutional administrators rarely see the downstream consequences of false positive accusations for individual students. They see the reputational benefit of appearing to maintain academic standards and the political cost of appearing to enable AI cheating. This asymmetry means institutions adopt detection tools without adequate due diligence on their accuracy limitations.

Vendor Opacity

Tool vendors have no incentive to disclose accuracy limitations that would reduce adoption. The Workado case demonstrates what happens when regulatory scrutiny reaches this space: a 45-percentage-point gap between claimed and actual performance that was not disclosed to the institutions or individuals relying on the tool. Without regulatory pressure, there is no market mechanism driving vendors to transparently disclose real-world false-positive rates across diverse populations.

The Status Quo Bias

Once a tool is embedded in an institution's learning management system and workflow, replacing it requires administrative effort and budget justification. Turnitin is integrated into Canvas, Blackboard, and Moodle at over 16,000 institutions. The switching cost is substantial. This creates institutional inertia that persists even as evidence of accuracy limitations accumulates. Humanizing AI writing to reduce statistical detection risk is a practical response for individual writers navigating systems that institutions are unlikely to replace or substantially reform in the near term.

What Writers Need to Understand

The picture that emerges from this evidence is specific and actionable.

A detection score is a probabilistic estimate, not a finding of fact. Every tool produces probability scores based on statistical measurements of text properties. No tool determines with certainty whether a human or an AI produced a piece of text. When an institution treats a detection score as evidence of AI use, it is treating a probabilistic estimate as a definitive conclusion. The Adelphi University court ruling of January 2026 established that a Turnitin score with no supporting documentation does not constitute a valid factual basis for a finding of academic misconduct.
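One way to see why a score is an estimate rather than a finding is Bayes' theorem: the probability that a flagged text is actually AI-generated depends not only on the detector's error rates but also on how common AI-generated submissions are. The Python sketch below is a minimal illustration; the prevalence and error rates are hypothetical inputs chosen for the example (the 85 percent detection rate loosely mirrors the roughly 15 percent miss rate Turnitin has described), not measurements of any tool.

```python
# Hypothetical sketch: probability that a flagged text is AI-generated (Bayes' theorem).
# Prevalence, TPR and FPR values are illustrative assumptions.


def prob_ai_given_flag(prevalence, tpr, fpr):
    """P(AI | flagged) = TPR * p / (TPR * p + FPR * (1 - p))."""
    true_flags = tpr * prevalence         # share of all texts that are AI and flagged
    false_flags = fpr * (1 - prevalence)  # share of all texts that are human and flagged
    return true_flags / (true_flags + false_flags)


if __name__ == "__main__":
    # Assume 10% of submissions are AI-generated and the detector catches 85% of them.
    for fpr in (0.01, 0.05, 0.19):
        p = prob_ai_given_flag(prevalence=0.10, tpr=0.85, fpr=fpr)
        print(f"FPR {fpr:.0%}: a flagged text is AI-generated with probability {p:.0%}")
```

Under these assumptions, a flag is right about 90 percent of the time at a 1 percent false-positive rate, about 65 percent of the time at 5 percent, and only about a third of the time at 19 percent. Even in the best case, roughly one flagged text in ten would be human-written, which is why a score alone cannot establish what happened.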

Vendor accuracy claims require scrutiny. A tool claiming 99 percent accuracy requires understanding the benchmark conditions, the content population, the threshold setting, and the temporal validity of the testing before that number means anything for your specific situation. The FTC has established that these claims require substantiation. A student or professional who wants to challenge a detection-based finding has legitimate grounds to ask what evidence supports the tool's accuracy claims in the context of their specific writing.

Real-world detection accuracy on output from modern AI models is substantially lower than vendor figures suggest. The Perkins et al. 2024 study found a baseline accuracy of 39.5 percent across six detectors on content from GPT-4, Claude, and Gemini. After simple adversarial modifications, average accuracy fell to 22.1 percent. These figures come from an academic integrity context and reflect the gap between controlled benchmark performance and real-world detection of modern AI content. Using a free AI humanizer to adjust the statistical properties of genuine human writing is a rational response to being evaluated by systems whose accuracy on the relevant population and content type is substantially lower than their headline figures suggest.

Non-native English speakers and formally trained writers experience documented multi-fold higher false-positive rates than vendor figures indicate. This is a systematic bias present in the tools by construction and not disclosed in standard vendor accuracy communications. Writers in these populations have both practical and ethical grounds to use statistical adjustment to align their writing's measured properties with what the tool was calibrated to read as human.

The Rational Response to Unreliable Measurement

Given the documented gap between vendor claims and real-world detection accuracy, the rational protective strategy for writers is a combination of statistical adjustment, process documentation, and informed awareness of institutional policies.

Statistical Adjustment Before Submission

Running your own content through a detection tool before submission and adjusting any sections that score high is not gaming an accurate system; it is protecting yourself against an inaccurate one. Many detection tools rely on statistical properties such as perplexity and burstiness, and these properties can be adjusted in genuine human writing without altering the content or misrepresenting the author. The goal is to ensure your writing's statistical profile falls within the range the tool associates with human writing. That is appropriate when the tool has documented rates of misclassifying human writing that falls outside the native English casual prose range it was calibrated on.

Process Documentation as the Ground Truth

The most durable protection is documentation that no detection score can rebut: version history that shows your writing developing in real time, Grammarly Authorship reports that track the proportion of content you typed directly, research notes with timestamps, and draft evidence. These process records are the actual evidence of human authorship, and they are what the Adelphi University judge found decisive in overturning a detection-based academic misconduct finding. Neurodivergent writing, or any other formally patterned human writing, benefits from both statistical adjustment and process documentation: the adjustment reduces detection risk, and the documentation provides a rebuttal if detection occurs despite the adjustment.

Challenging Accuracy Claims in a Dispute

Writers facing adverse institutional decisions based on detection scores have legitimate grounds to challenge the accuracy claims behind the tool. Citing the Perkins et al. finding of 39.5 percent baseline accuracy, the Stanford Liang et al. finding of 61 percent false positives on ESL writing, and the FTC enforcement action establishing that accuracy claims require substantiation provides a factual basis for challenging the evidentiary weight of any detection score. These are not obscure findings. They are peer-reviewed studies and regulatory actions that are publicly available and citable in any formal appeal.

Conclusion

The data on AI detection accuracy in 2026 tells a consistent story that vendors do not tell their customers and institutions do not tell their students: real-world false positive rates are substantially higher than vendor-reported figures, the gap is largest for the populations most systematically disadvantaged by it, and a federal regulator has already concluded that at least one vendor's accuracy claims were not supported by evidence. The FTC action against Workado is not a story about one bad actor. It is a story about an industry where marketing claims routinely exceed what evidence supports, and where the people bearing the cost of that gap, the students and writers who face false accusations, have been the last to receive honest disclosure. Understanding this gap is not pessimism about AI detection technology. It is informed engagement with a measurement system whose limitations are documented, whose claims have already drawn regulatory action, and whose consequences for individual writers are real.

Frequently Asked Questions

What did the FTC actually find about AI detector accuracy claims?

In April 2025, the FTC filed a complaint against Workado LLC for claiming 98.3 percent accuracy for its AI Content Detector, even though independent testing found the actual accuracy rate for general-purpose content was 53.2 percent. The FTC finalized a consent order in August 2025 requiring Workado to stop making accuracy or efficacy claims about its detection products without competent and reliable evidence supporting those claims, to retain that evidence for future claims, and to notify affected customers of the settlement. The Director of the FTC's Bureau of Consumer Protection described the tool as performing "no better than a coin toss" on general-purpose content. The case established that accuracy claims for AI detection tools are subject to the same advertising substantiation standards as any other consumer product claim.

What does "99% accuracy" actually mean for an AI detection tool?

"99% accuracy" on a vendor-published benchmark typically means the tool correctly classified 99 percent of texts in a controlled dataset consisting of balanced, clearly AI-generated text and clearly human-written text from a specific content population, at a threshold the vendor chose to optimize the reported figure, tested at a specific point in time against specific AI model versions. Real-world accuracy depends on all of those variables, plus the distribution of content types, the proportion of formally written versus casual text, the proportion of non-native English writers, and whether the AI content has been lightly edited or paraphrased. The Perkins et al. 2024 study found baseline accuracy averaging 39.5 percent across six major detectors on modern AI model output before any modification. The gap between 99 percent and 39.5 percent reflects the difference between controlled benchmark conditions and real-world academic use.

What did the Washington Post find when it tested Turnitin?

In 2023, the Washington Post tested Turnitin on a sample of human-written texts and found a 50 percent false-positive rate, directly contradicting Turnitin's stated claim of less than 1 percent false positives. Turnitin's response clarified the trade-off it makes: its AI checker is deliberately calibrated to minimize false positives, accepting that it may miss roughly 15 percent of the AI-generated text in a document. Both the 1 percent vendor figure and the 50 percent Washington Post result can be simultaneously accurate because they reflect different content populations and different threshold conditions. Turnitin's conservative calibration is appropriate for its academic use case and is actually more protective than most tools, but the Washington Post test shows what happens when formally structured human writing encounters a tool calibrated on different content.

Why do vendor benchmarks consistently overstate real-world accuracy?

Four methodology choices systematically inflate vendor benchmark figures. First, controlled dataset selection: balanced samples of clearly AI-generated and clearly human-written text from a narrow range of writing styles that do not represent the diversity of real-world submissions. Second, threshold selection: vendors report accuracy at threshold settings that optimize the headline figure, not at settings appropriate for practical deployment. Third, temporal validity: benchmarks conducted on older AI model output overstate accuracy on current models that produce more human-like text. Fourth, population representation: benchmark datasets rarely include the writing populations most likely to experience false positives, so elevated false-positive rates for those populations do not appear in the headline figures. The Workado FTC case is the clearest documented example of these issues reaching the point of regulatory action.

What should students and writers understand about AI detection data before trusting institutional decisions?

Five facts are essential. First, detection scores are probabilistic estimates, not findings of fact: no tool can determine with certainty whether a human or an AI produced a text. Second, vendor accuracy figures reflect specific benchmark conditions that differ significantly from real-world use, and the Workado FTC action established that these claims require substantiation. Third, real-world accuracy on modern AI models averages around 39.5 percent before modification, according to the Perkins et al. 2024 study. Fourth, false-positive rates for non-native English speakers averaged 61 percent across seven detectors in the Liang et al. study, far above vendor-reported headline figures. Fifth, process documentation, including version history, Grammarly Authorship reports, and draft evidence, provides the best rebuttal to any detection-based finding. Using an AI text transformer to adjust the statistical properties of genuine human writing before submission reduces the risk of a false flag, while process documentation and knowledge of institutional appeal procedures protect against the consequences of a false flag that occurs despite that adjustment.