AI content detection scores are a standard feature of editorial, publishing, marketing, and business writing processes. You can find these scores in content management systems, submission sites, academic sites, and freelance briefs. The vast majority of professionals who use these scores are never provided any information on what these scores actually mean, how much accuracy can be expected, and what these scores are supposed to and shouldn’t be used for. A 74% score doesn’t mean a document is 74% AI-generated. An 18% score doesn’t mean a document is 82% human-generated. Each score is a statistical prediction, a classification prediction based on a particular dataset, a particular evaluation, and a particular time. AI detection in professional and academic contexts 2026 confirms that false positive rates of 5% to 15% are typical across current detection platforms depending on content type, and that high-confidence scores above 90% warrant investigation but do not automatically justify conclusions. This guide is intended for everyone who uses, encounters, or is affected by AI detection scores in any aspect of professional writing.

The techniques described in this guide are relevant to any situation where AI detection scores are used, encountered, or are relevant to writing. These situations include journalists and editors using AI detection to evaluate submissions, content marketing teams using AI detection to manage AI-assisted content creation and distribution, publishers using AI detection to set guidelines for authors, enterprise communications teams using AI detection to develop AI use policies, and writers using AI detection to understand what the result means to them. One of the most important literacy skills in writing is understanding what AI detection scores mean. In 2026, it is one of the most important literacy skills in writing.

What an AI Detection Score Actually Measures

The detection software doesn’t “read” the text for meaning. It looks at statistical properties of the text, like perplexity (the predictability of word choices based on surrounding context) and burstiness (variations in sentence length throughout the text). Low perplexity and low burstiness are indicative of AI-generated text because language models try to pick the most probable word based on statistical predictions. Human writing tends to have higher perplexity and more burstiness because it tends to be more expressive and dependent on context. what an AI detection score actually measures documents how these signals translate into the percentage figure users see: the score represents the proportion of the text whose statistical properties match the AI-output pattern in the tool's training data. It does not represent a probability that the document was generated by AI. It represents statistical similarity to AI-generated text, which is not the same thing.

Reading Detection Scores Correctly

AI detection software doesn’t “read” text for meaning. It looks at statistical properties of the text, like perplexity (how predictable a word choice is based on surrounding context) and burstiness (how varied sentence length is throughout a document). Low perplexy and low burstiness are hallmarks of AI-generated text because language models try to predict the statistically most likely word choice at each point. Human text tends to have high perplexity and varied sentence length because human authors make expressive, context-dependent word choices that aren’t always efficient. how to read and interpret AI detection scores documents three specific failure modes in score interpretation that are consistently observed in professional settings: relying on a single score, using the same threshold for different types of content, and ignoring sentence-level results in favor of document-level results. All three result in unnecessary errors, whether in false accusations of AI use or in failing to identify real AI use.

The most useful information in an AI detection report, whether positive, negative, or inconclusive, is not the percentage figure. It’s the sentence-level results, sometimes displayed as a heatmap or color-coded overlay. Sentences with high confidence in the body of a document, where there’s no expectation of such sentences, and where there’s some specific statement or unusual phrasing, are more likely to be real AI use than sentences in the introduction or conclusion, where there’s structural predictability in any human-written essay.

Interpreting Score Ranges in Practice

A 0%-20% score is generally consistent with human-written content in most professional contexts. There is no action that should be taken on these types of scores in isolation. Scores between 20%-60% are generally ambiguous and must be interpreted in context. The interpretation of a 45% score on a technical white paper written by an engineer in their second language is very different from the interpretation of the same score on a marketing blog post written by a freelance writer with no prior writing history. Scores above 60%, especially on long-form content where the model has enough text to reach statistical reliability, are likely to indicate the use of AI, but the output must still be reviewed by a human before taking action. AI detection benchmarking and what scores mean confirms that even at the 99% confidence level, commercially deployed detectors produce false positive rates that are non-trivial at scale. A tool claiming 99% accuracy at the document level still generates roughly one wrongful flag per 100 fully human-written documents scanned, which is a significant burden at publishing volumes.

Threshold Frameworks by Professional Context

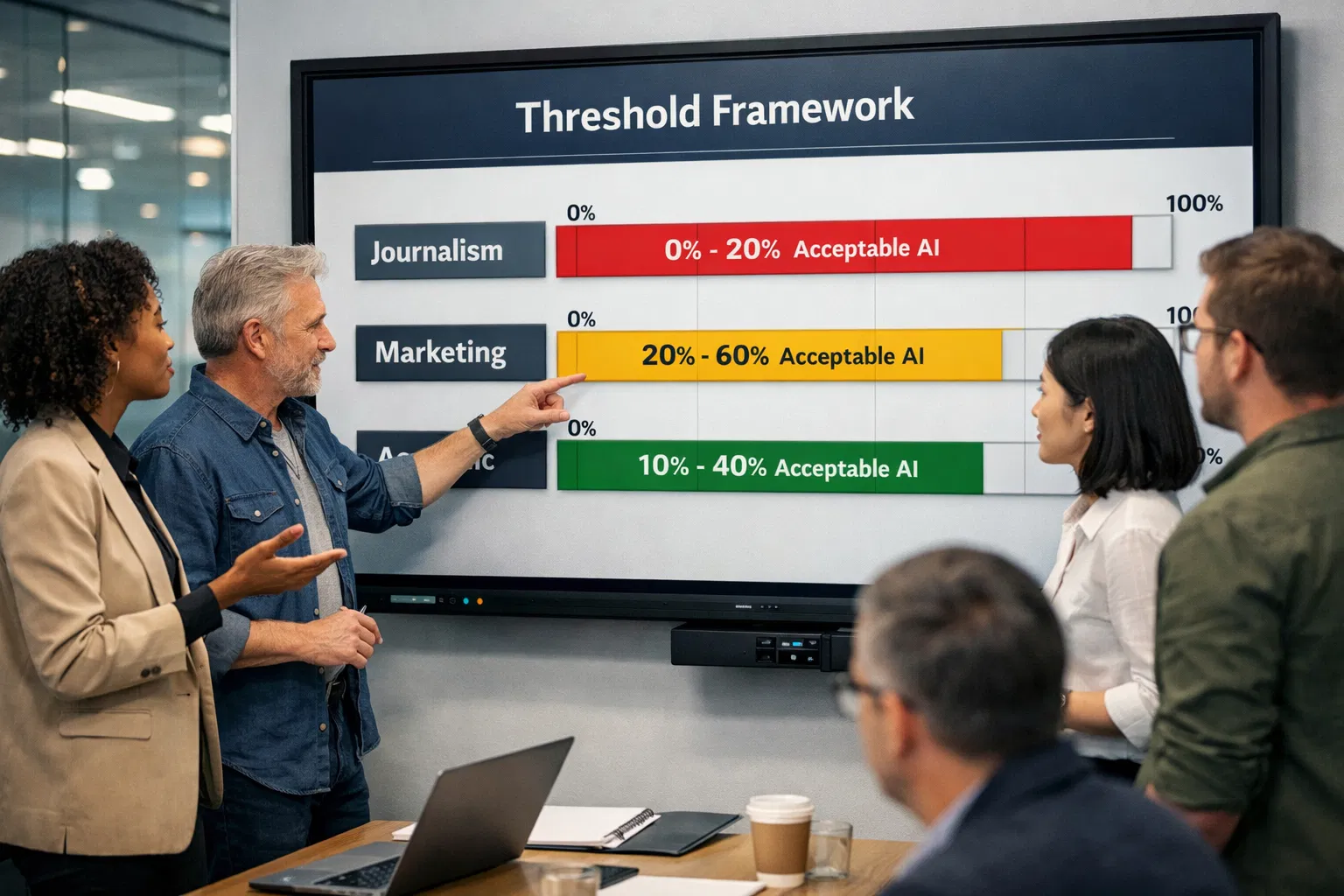

There is no universal threshold above which a detection score means AI was used and below which it does not. Every professional context has different standards, different risk tolerances, and different writing populations with different baseline detection profiles. The appropriate response to a score of 35% in a newsroom is entirely different from the appropriate response to a score of 35% in a corporate communications team. Setting explicit, context-appropriate thresholds is one of the most important things any professional writing environment can do to use detection tools responsibly. acceptable AI percentage thresholds by professional context establishes the practical ranges that have emerged across industries: journalism holds the line at near-zero for bylined work; academic publishing operates around the 10% to 20% range; content marketing accepts hybrid workflows up to 30%; and internal business documents tolerate up to 40% in many organisations. These are not official standards, but they reflect the working norms that professional contexts have developed in response to detection tool deployment.

Professional Context | Typical Threshold | Interpretation at Threshold | Recommended Action Above Threshold |

Investigative journalism / news bylines | Effectively 0% | Any AI signal in a named byline piece raises attribution and accuracy questions, regardless of score | Escalate to editor immediately; do not publish without verified human authorship and editorial sign-off |

Academic journal submission | 0-10% | Even minor AI involvement may constitute undisclosed use under most journal policies as of 2026 | Request author disclosure and process documentation; apply journal-specific AI use policy before peer review |

Corporate legal and compliance documents | 0-15% | Formal document style naturally produces AI-like patterns; sentence-level review is more reliable than document score | Legal reviewer check of flagged sentences; no automated rejection, document-level score alone is insufficient |

Publishing and editorial (books, magazines) | Below 20% | Scores in this range are consistent with heavily grammar-tool-edited human writing | Human editorial review of flagged passages; verify with a second tool before raising with author |

SEO and content marketing | Below 30% | Accepted working zone for hybrid workflows where AI assists with structure and human writers revise and verify | Review flagged sections for brand voice, factual accuracy, and uniqueness; do not rely on score alone |

Internal business documents (memos, reports) | Below 40% | AI assistance in drafting internal documents is widely accepted; detection is used for transparency, not enforcement | Log AI usage for governance records; no publication gatekeeping action required |

Regulated sectors (finance, healthcare, legal) | Domain-specific, typically below 10% | Regulatory guidance and liability exposure make any undisclosed AI generation a compliance risk | Flag immediately for human expert review; maintain audit trail; consult compliance officer before publication |

Setting Your Own Threshold Policy

A threshold policy for a professional writing environment should address three issues explicitly: at what score should content be reviewed instead of approved automatically? At what score should content be verified instead of approved? At what score, if at all, should content be discussed with the writer? These three thresholds will be different for different writing environments. For a journalism site, they might be set at 5%, 10%, and 15%. For a content marketing firm, they might be set at 20%, 40%, and 60%. The point is that they should be set explicitly and should be reviewed regularly based on changes in detection tool accuracy. how to handle AI detection false positives in your workflow confirms that the organisations that handle detection disputes most effectively are those that have pre-documented what each score range means in their specific context, so that writers are not surprised by enforcement actions and reviewers are not making ad hoc judgments under pressure.

Multi-Tool Verification: Why One Score Is Never Enough

The most important thing to know about the technology behind the detection of AI writing in professional writing is that the scores given by each technology on the same content are vastly different. The score on the same document might be 72% on one platform and 15% on another, and each might have been generated on the same day by a platform that claims 95%+ accuracy. The reason is that each was trained on a different set of data, uses a different classification system, was set at a different threshold, and was last updated on a different date. The score from only one source should never be the only source used to make an editorial decision. best AI detectors for professional content teams 2026 documents the practice that professional content teams and publishers have converged on: run every flagged piece through at least two independent tools before escalating. If both tools flag the same sentences at high confidence, that convergence is meaningful. If the tools produce divergent scores, the divergence itself is evidence that the flag is tool-specific rather than reflecting genuine AI authorship.

How to Conduct a Multi-Tool Verification

A multi-tool verification process should follow a defined sequence. First, run the document through your primary tool and record the document-level score and the specific sentences flagged. Second, run the same document through a second independent tool, preferably one using a different underlying methodology. Third, compare the sentence-level results: if both tools flag the same passages with high confidence, that convergence warrants further investigation. If they diverge significantly, treat the flag as inconclusive and apply your organisation's lower-threshold response rather than your higher-threshold one. Fourth, document both results and your interpretation for the editorial record. peer-reviewed study on AI detection accuracy across tool types confirms that complementing AI detection tools with other modes of assessment is necessary, and that false positives from one tool are not corroborated by divergent results from a second, well-calibrated tool. The study recommends that journal editors and professional reviewers not rely solely on any single detector when evaluating manuscripts or submissions.

Understanding False Positives and Population Bias

False positives in AI detection are not evenly distributed across writers. Certain populations of professional writers face systematically higher false positive rates, not because they use AI more frequently but because their writing patterns happen to overlap with AI output on the statistical measures that detection tools use. In professional writing environments, this creates an equity problem: the detection policy that seems neutral on paper produces unequal outcomes across the writer population. AI detection accuracy studies across diverse writer populations documents through multiple peer-reviewed studies that detection tools consistently underperform on ESL writing, highly formal technical writing, and writing that has passed through grammar tool editing. These are not edge cases. They are the normal writing profiles of large segments of the professional and academic writing community.

Writer Profile | Why Detection Is Elevated | Mitigation for Reviewers |

ESL and non-native English writers | Second-language writers use simpler syntax, more predictable vocabulary, and more consistent grammatical constructions. These are the exact properties that detection tools flag as AI-like, because AI training data also exhibits these patterns. The Stanford HAI study (Liang et al., 2023) found that 61.3% of TOEFL essays by non-native speakers were flagged as AI-generated. | Apply lower effective threshold for ESL contributors. Request process documentation rather than flagging for review. Consult the writer about their drafting method before escalating. Do not treat score as standalone evidence. |

Formal academic and technical writers | Academic prose uses standard transitions, hedging language, thesis-evidence-conclusion structure, and domain-constrained vocabulary. All of these produce low perplexity and low burstiness, the same statistical properties that characterise AI output. | Review the assignment rubric or submission guidelines to see whether the flagged patterns were required by the format. Compare score against the writer's previous submissions on the same topic. |

Writers who use grammar and editing tools | Grammarly, Hemingway, and similar tools regularise phrasing, standardise sentence length, and remove idiomatic variation. A document that has passed through multiple editing tool passes will show lower perplexity than the original draft, often enough to cross detection thresholds. | Ask the writer whether grammar tools were used and at what stage. If they can provide a pre-tool draft, the contrast between the two is itself evidence of human composition. |

STEM and technical writers | Lab reports, engineering documents, legal memos, and mathematical proofs use domain-constrained vocabulary, standardised structures, and passive voice, all of which reduce burstiness. The subject matter itself constrains word choice in ways that resemble AI output. | Run the same prompt through a major LLM and compare the output to the flagged document. If they diverge substantially in structure, specific detail, and argumentation, the human document is unlikely to be AI-generated. |

Writers producing short-form content | Detection tools require a minimum of roughly 250 to 300 words for statistically reliable classification. Content below this threshold, social media copy, executive summaries, captions, taglines, produces unreliable results with elevated false positive rates. | Do not apply detection scores to submissions under 300 words. For short-form professional content, assess AI use through workflow documentation and editorial conversation rather than automated scoring. |

Specific Guidance for ESL and Technical Writers

Professional environments with ESL contributors, international authors, or technical domain specialists require an adjustment to the policy. The policy does not have to be changed to reduce standards. The policy must recognize that applying the same statistical threshold to all writers will result in an unfair number of false positives for these populations, leading to unequal treatment of writers based on levels of originality. The adjustment to the policy is to require documentation of the process for any contributor flagged by the threshold. Stanford study on AI detector bias against non-native English writers established the foundational evidence for this policy adjustment: AI detectors misclassified 61.3% of essays by non-native English speakers as AI-generated. Professional environments that apply detection to ESL contributors without this adjustment are effectively enforcing a higher evidentiary standard against non-native speakers, which is an unjustifiable professional equity gap.

Integrating Detection Into Professional Writing Workflows

The best use for detection tools is to integrate them into a system as a quality indicator, rather than a gatekeeper at the end of a process. The organisations that get the best use out of AI detection are those that integrate it into the content pipeline early on, rather than seeing a score as a block to publication. The difference this makes is significant; writers are able to address any problems before they become an issue, and reviewers are able to make a judgment based on their understanding of the writer, the brief, and the content history. content marketing workflow measurement and AI oversight 2026 confirms that only 19% of content teams in 2026 use measurement frameworks to track AI-related performance indicators. Such organisations are better placed to use detection tools as a continuous quality process rather than an intermittent enforcement process.The position of the workflow is important. Detection at submission is more valuable than detection at publication because it allows time for a dialogue between the editor and the writer. Detection at the paragraph level or at the sentence level is more valuable than detection at the document level because it provides more targeted attention to the problematic content rather than rejecting the entire document. Detection logged systematically is more valuable than ad hoc detection because the log provides the pattern detection required to see which content types are producing high scores without the use of AI at all.

Where to Position Detection in the Editorial Pipeline

The lowest level of conflict, and one that helps build a documentation habit, is pre-submission detection, which writers do on their own before submission. This allows writers to deal with high detection scores before they become an editorial problem. Some professional platforms and agencies even mandate a pre-submission detection report as part of a submission package, treating a writer's own detection check as a disclosure, not a barrier to submission. AI detection false positives and workflow implications for professionals confirms that professionals who maintain pre-submission detection habits rarely face downstream disputes, because the habit also encourages the process documentation that resolves false positive claims. Post-submission detection at the editorial review stage is the most common current position in publishing and content teams, but it produces the most disputes when elevated scores surface after a writer has been paid or a piece has been approved.

Building a Professional AI Detection Policy

A functional AI detection policy for a professional writing environment is one that answers six questions, and those answers are shared with all writers for or within an organization. The six questions are: "What tool or tools are used for this, and why?" "What score triggers a particular level of response?" "What does a response at a particular level entail?" "How does a writer dispute a flagged response?" "How are disputes resolved?" and "How is this policy reviewed and updated as tool accuracy changes?" If a policy is unable to answer all six questions, then it is not a finished policy. evaluating and selecting AI content detection tools 2026 notes that the most effective AI detection tools for professional use are those that provide transparent reporting, documented false positive rates, sentence-level breakdown, and clear guidance on how to interpret their output. These features are necessary but not sufficient: the human policy framework that governs how results are used matters more than the tool's technical specifications.

Core Components of a Well-Designed Detection Policy

A good detection policy for professional writing will have four main components. The first is a description of what is included in the use of AI in terms of what is and isn’t permitted within an organization's writing contexts. This includes whether it's human writing edited by AI, human writing edited and generated by AI, or content generated without human touch. The second is a description of what happens at each score tier, who reviews it, and what level of evidence is required before taking any negative action. The third is a description of an appeals process, how a writer can appeal a flag, what evidence they can provide, and who makes the final decision. The fourth is a review cycle, at a minimum once per year, where it's re-evaluated based on detection accuracy. how AI detection false positives should be handled in policy confirms that organisations that publish their detection policies to contributors have lower rates of detection disputes than those that apply detection without writer notification. Transparency is not just a fairness measure. It is an operational efficiency measure that reduces the time spent on disputes and appeals.

Reducing Unnecessary Detection Risk in Your Professional Writing

Professional writers who wish to avoid unwarranted flags without changing their basic approach to writing can address some of the more common mechanical causes of high detection scores. These causes include over-editing with grammar checkers, adherence to strict sentence structure templates, and vocabulary choice based on topics rather than overall vocabulary range. These approaches do not signal AI use, but they all cause the text to fall within the statistical range of AI detection systems. humanize AI text to reduce false detection risk provides a practical tool for reintroducing the lexical and rhythmic variation that grammar tools and template adherence tend to remove, restoring the burstiness profile that detection tools associated with human writing without requiring significant changes to the underlying content or argument.

Draft your writing in Google Docs, enabling version history. Your writing history is the most effective protection against a false positive, and it will be created automatically, requiring no additional effort.

Save a pre-grammar-tool version of all professional writing before running it through Grammarly, Hemingway, or other writing tools. The pre-grammar version will show the natural language variations that are lost in the process of editing, and it will be the decisive proof in a false positive dispute.

Run a pre-detection check on your writing using the same tool your client or editor uses on your writing. If certain sentences are high-risk, revise those sentences for more variety and specificity before submitting the writing. It is always easier to fix high scores before they become an issue than to dispute them afterwards.

Keep evidence of your research trail. Bookmarked sources, PDFs, and research notes that existed before your final document will demonstrate your research process. The research trail does not exist in AI-generated documents.

If you use AI tools for permissible purposes such as brainstorming, outlining, or summarizing research, document your use in your submission notes. It’s better to be transparent about permissible uses of AI tools than to leave it up to a detection tool to give a probabilistic answer.

Conclusion

AI content detection scores in professional writing are never definitive. Rather, they are probabilistic signals. To understand AI content detection scores, one must understand what they are trying to signal. One must also understand that no one-size-fits-all rule will work. One must use multiple tools to distinguish AI content detection signals from anomalies specific to each tool. One must also understand that human judgment cannot be replaced. The professionals using AI content detection tools most effectively in 2026 are those who have developed policy, incorporated detection early in the process, accounted for bias in the contributor population, and educated themselves to use each detection score as a starting point for conversation rather than an endpoint for decision. AI content detection tools will continue to get better and worse in new ways with each iteration of language models. Policy and interpretive practice will continue to be more lasting than accuracy claims.

Frequently Asked Questions

How accurate are AI detection tools for professional writing in 2026?

real-world AI detector accuracy tested across content types 2026documents accuracy rates between 65% and 90%, depending upon the tool, content type, and whether or not the text has been edited or rewritten after creation. The tools work best with unedited, long-form AI content created with popular models, and worst with short text, human-AI hybrids, text written by ESL authors, and text that has been edited with grammar or style tools. No detector should be considered reliable in all writing situations, and none should be relied upon in making important professional decisions.

What should I do when two detection tools give very different scores on the same document?

Divergent scores from two independent tools are themselves evidence that the elevated score from one tool is tool-specific rather than a genuine signal of AI authorship. Document both scores, note the specific passages that each tool flagged, and compare: if the flagged passages do not overlap, the tools are identifying different statistical artefacts, not a consistent AI signal. Apply your lower-threshold response rather than your higher-threshold one. Request process documentation from the writer to supplement the inconclusive automated results. academic analysis of AI detection inconsistency across platforms confirms through academic analysis that detection algorithms struggle with evolving AI models and that inconsistency across tools is a documented and expected characteristic of the current detection landscape, not an anomaly.

Should I tell writers that their work will be checked with an AI detection tool?

Yes, in all professional contexts. Making writers aware of this prior to the process also fulfils a number of purposes, including ensuring that writers who have used AI for any part of a text are encouraged to declare this rather than relying on the tool to detect and declare, reducing the confrontational nature of a writer becoming aware of a flag having been raised after the event, and increasingly, a legal and contractual requirement for the industry sector. It also satisfies the principle that detection tools are to be seen as a means of transparency rather than surveillance, and this is the approach that results in the best professional relationship between organisations and writers.

How often should I update the thresholds in my detection policy?

The accuracy of detection tool results varies with every model update, and model updates occur quarterly or more frequently for the major tools. What may have been a good threshold in February may now be systematically off by August. At least annually, review your threshold policy with the latest false positives provided by the tool vendor and independent benchmark testing. In fact, the most effective professional detection policies likely include a standing agenda item in the quarterly content quality review process: are the detection results more or less consistent with editorial judgment? If you are seeing a pattern of detection flags being raised and always being dismissed by the editor in favor of the writer, then you are likely using a threshold that is too low for the mix of content you are currently being asked to review.

Can I use AI detection scores in employment or contractor decisions?

With great caution and, if possible, legal advice, depending on the jurisdiction and the context. The detection score is not sufficient grounds to terminate or refuse the professional relationship because the documented false positive rate for professional writing contexts, especially in technical, ESL, and grammar tool edited writing, is high enough that the detection score should not be taken as evidence of a violation. The detection score should be used as a starting point to discuss the process documentation, not as grounds to terminate the relationship. If the writer or contractor is able to provide credible process documentation that is inconsistent with the use of AI generation, the process documentation should be given more weight as evidence than the probabilistic output of a tool with an admitted false positive rate.

This guide is based on the current state of the art in the ability to detect AI content, industry practice, and professional writing standards as of March 2026. The accuracy of content detection tools, the policies adopted by the companies that provide them, and the threshold levels set by the industry are constantly changing. The advice given in this document is not intended to be legal or compliance advice. For legal advice, you should consult qualified legal professionals.