A student submits her essay, which took her two weeks to write. The professor uses an AI detector. The detector indicates that 87% of her work was generated by AI. The student did not use ChatGPT. The student did not use any AI tool. The student is a non-native English speaker whose writing style trips the AI detector. This is happening in universities across the world. The numbers indicate that the situation is worse than people think.

The AI-powered content creation market is projected to reach $80.12 billion by 2030 (Grand View Research), growing at a 32.5% annual rate. As AI-generated content becomes more common, an industry of AI detection tools has emerged alongside it. These tools claim to distinguish human writing from machine output. The research tells a more disturbing story: the tools are unreliable and biased, and they are causing real harm to real people. This guide lays out the evidence. We'll look at why AI detection tools fail, identify the groups they harm most, and show how you can defend your content against false accusations. For a deeper dive into the mechanics, see our overview of understanding AI detection and how these systems actually evaluate your writing.

Key Takeaways:

The accuracy of AI detectors varies from 65% to 90%, depending on the detector. The free ones have lower accuracy compared to the paid ones.

A Stanford study found that seven widely used AI detectors misclassified 61.3% of essays written by non-native English speakers as AI-generated.

In July 2023, OpenAI discontinued its AI detector after finding that it could correctly identify only 26% of AI-generated content. At the same time, it misidentified 9% of human-written content as AI-generated.

A 2026 study found that AI detectors wrongly flagged between 43% and 83% of genuine student-written texts as AI-generated.

Even top AI detectors like Turnitin and Originality.ai have a 1-2% false-positive rate, resulting in thousands of false accusations annually.

BestHumanize works towards protecting genuine content creators from being flagged by AI detectors through intelligent content optimization.

How AI Detectors Actually Work (And Why the Science Is Flawed)

AI detectors rely on two main metrics: perplexity and burstiness. Perplexity shows how predictable the next word is. AI models pick words based on statistical likelihood, creating smooth, repetitive text with low perplexity. Humans, though, often choose unexpected words, which leads to higher perplexity. Burstiness tracks how sentence length and complexity change across a piece. Humans mix short, sharp sentences with longer, detailed ones. AI sticks to uniform patterns, so its burstiness is low. The real problem? The assumption that low perplexity and low burstiness mean AI authorship is flawed. Technical manuals, legal briefs, academic papers, ESL writing, and standard business reports all show the same patterns. These tools aren't catching AI; they're catching a style that real humans use too, and that overlap undermines their accuracy. For a detailed explanation of how these mechanisms function, Grammarly’s analysis provides an accessible technical overview.
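To make the two metrics concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any commercial detector is implemented: burstiness is approximated as the coefficient of variation of sentence length, and perplexity is computed under a toy add-one-smoothed unigram model built from a reference text, standing in for the neural language model a real detector would presumably use. The function names are hypothetical.

```python
import math
import re
from collections import Counter


def sentence_lengths(text):
    """Split on ., !, ? and return the word count of each non-empty sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Assumed proxy: coefficient of variation (std / mean) of sentence length."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    mean = sum(lengths) / len(lengths)
    variance = sum((n - mean) ** 2 for n in lengths) / len(lengths)
    return math.sqrt(variance) / mean


def unigram_perplexity(text, reference):
    """Perplexity of `text` under an add-one-smoothed unigram model of `reference`.

    A toy stand-in for a real language model: lower values mean the words in
    `text` are more predictable given `reference`.
    """
    words = text.lower().split()
    if not words:
        raise ValueError("text must contain at least one word")
    ref_words = reference.lower().split()
    counts = Counter(ref_words)
    vocab_size = len(set(ref_words) | set(words))
    total = len(ref_words)
    log_prob = sum(math.log((counts[w] + 1) / (total + vocab_size)) for w in words)
    return math.exp(-log_prob / len(words))


uniform = "The report is clear. The data is sound. The plan is good."
varied = ("Stop. The report, which took the whole team three long weeks "
          "to assemble, is finally clear. Good.")
print(burstiness(uniform), burstiness(varied))
```

The uniform sample scores zero burstiness because every sentence has the same length, even though a person wrote it. That is the kind of overlap between formulaic human prose and machine output described above.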

The Data: How Often AI Detectors Get It Wrong

The gap between what AI detection companies claim and what independent research shows is staggering. Here’s what the evidence says.

False Positive Rates by Tool and Context

| Source / Study | Context | False Positive Rate | Year |
| --- | --- | --- | --- |
| Stanford (Liang et al.) | TOEFL essays (non-native speakers) | 61.3% | 2023 |
| OpenAI AI Classifier | General text | 9% (before shutdown) | 2023 |
| 2026 Commercial Detector Study | Authentic student essays (192 texts) | 43–83% | 2026 |
| Professional Content Audits | Human-written non-fiction | >30% | 2026 |
| Turnitin (claimed) | Academic submissions | <1% | 2023–2026 |
| Washington Post (Turnitin test) | Independent evaluation | ~50% | 2023 |
| Hyatt et al. | STEM student essays (aggregated) | 1.3% (AI) vs 5% (human raters) | 2025 |
| GPTZero | General usage | 70–80% accuracy range | 2026 |

Sources: Stanford HAI, OpenAI, Advances in Physiology Education, Washington Post, independent 2026 audits

The Scale Problem

Even a low false-positive rate becomes catastrophic at scale. Take, for example, a university that processes 100,000 submissions every year with a detector that claims a 1% false-positive rate. If every submission is human-written, that works out to about 1,000 false accusations per year. Every one of these is a student being investigated for academic integrity over something they actually wrote. The JISC National Centre for AI has flagged this as a burden too large for institutions to investigate properly.
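The arithmetic behind this kind of estimate is simple enough to sketch in a few lines of Python. The expected number of false accusations is the number of human-written submissions multiplied by the false-positive rate. The function name and the `human_share` parameter are illustrative assumptions, not figures from any cited study.

```python
def expected_false_accusations(submissions, false_positive_rate, human_share=1.0):
    """Expected number of human-written submissions wrongly flagged as AI.

    human_share is the assumed fraction of submissions that are genuinely
    human-written (1.0 means all of them).
    """
    if not 0.0 <= false_positive_rate <= 1.0 or not 0.0 <= human_share <= 1.0:
        raise ValueError("rates must be between 0 and 1")
    return submissions * human_share * false_positive_rate


# 100,000 submissions at a 1% false-positive rate, all human-written: about 1,000
print(expected_false_accusations(100_000, 0.01))

# Same volume at a 2% rate: about 2,000
print(expected_false_accusations(100_000, 0.02))
```

Because the false-positive rate applies only to human-written work, lowering `human_share` lowers the count, but at realistic volumes the result still runs into the hundreds or thousands.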

Who Gets Hurt: The Human Cost of Unreliable Detection

Non-Native English Speakers

The most well-documented bias in AI detection is against non-native English speakers. The landmark Stanford study by Liang et al. (2023) tested seven popular AI detection tools on TOEFL essays from non-native English speakers. The results are alarming: 61.3% of human-written essays were misclassified as AI-generated, and in about 19% of cases all seven tools agreed on the misclassification.

The reason for this is structural. Non-native speakers use simpler words, more predictable sentence structures, and fewer grammatical variations, all of which are also characteristics of AI-generated texts that AI detectors flag. As Professor James Zou of Stanford University explained, these detectors "calculate a score based on a concept called perplexity, which is related to how sophisticated a piece of writing is, and non-native speakers tend to have a lower sophistication level."

For international students, false accusations carry particularly severe repercussions. Beyond the academic penalties for alleged misconduct, they could also face visa revocation and deportation, a risk that has a "chilling effect" on these students' willingness to submit written work at all.

Students and Academics

A linguistics professor at the University of California, Davis, said that 17 students had been flagged by the school's AI detector for using AI in their essays. After manual checking, 15 of the 17 flags turned out to be false positives. The students who were flagged were mainly non-native English speakers and those who had worked with writing tutors.

One professor at Texas A&M University used AI-detection software to check final assignments, leading many students to fail the class. When they appealed, a few students were able to show through writing portfolios, draft histories, and notes that everything they submitted was their own work. The university changed the marks, but students say the experience left them with ongoing academic anxiety and broken relationships with faculty.

Freelancers and Content Professionals

The effect is not limited to the academic sphere. Freelance writers have faced contract termination and non-payment for work due to false-positive identification, even when they could provide version histories and timestamps to prove their authorship. For those whose professional reputation depends on the originality of their work, being accused of using AI can be damaging.

When the Creators of AI Can’t Detect AI: The OpenAI Classifier Failure

Perhaps the most telling data point in this entire debate: OpenAI, the company that built ChatGPT, could not build a reliable detector for its own AI’s output.

OpenAI launched its AI Text Classifier in January 2023. By July 2023, less than six months later, the company shut it down, posting a terse note: “As of July 20, 2023, the AI classifier is no longer available due to its low rate of accuracy.”

The numbers were damning. The classifier correctly identified only 26% of AI-written text as likely AI-generated (true positives), while incorrectly labeling 9% of human-written text as AI-generated (false positives). It was unreliable on texts shorter than 1,000 characters, performed poorly on non-English texts, and was, by OpenAI’s own admission, “sometimes extremely confident in a wrong prediction.”
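These two rates can be combined with Bayes' rule to estimate what a flag actually means. The sketch below is illustrative: the 26% and 9% figures come from the paragraph above, while the share of texts that are really AI-written (`ai_share`) is a hypothetical input that OpenAI did not report, and the function name is made up for this example.

```python
def share_of_flags_that_are_ai(true_positive_rate, false_positive_rate, ai_share):
    """Probability that a flagged text is really AI-written (Bayes' rule).

    ai_share is the assumed fraction of all checked texts that are AI-written.
    """
    flagged_ai = true_positive_rate * ai_share
    flagged_human = false_positive_rate * (1 - ai_share)
    if flagged_ai + flagged_human == 0:
        raise ValueError("no texts would be flagged with these inputs")
    return flagged_ai / (flagged_ai + flagged_human)


# Assume, hypothetically, that 10% of checked texts are AI-written.
# Result is roughly 0.24: about three in four flags land on human writing.
print(round(share_of_flags_that_are_ai(0.26, 0.09, 0.10), 2))
```

Under that assumed 10% share, most flagged texts would be human-written. The result depends heavily on `ai_share`, which is the reason a raw detector score says little without knowing the base rate.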

If the world’s leading AI company cannot build a reliable detector, that should give everyone pause. MIT Sloan has published an unequivocal position: AI detectors don’t work.

The Market Reality: AI Content Is Here to Stay

The question of AI-generated content is not going away. The numbers make this clear:

The generative AI content creation market was valued at $14.8 billion in 2024 and is projected to reach $80.12 billion by 2030 (Grand View Research).

The AI content marketing market alone grew from $4 billion in 2025 to $5.01 billion in 2026, at a 25.4% CAGR (Research and Markets).

70% of companies using AI for content creation report increased content output (SNS Insider).

AI-generated video is projected to account for 10% of all digital video content by 2026.

In blind academic tests, 94% of AI-written submissions went undetected by human evaluators.

"Was this content generated by AI?" is a question that is quickly becoming irrelevant. The questions that matter are "Is the content accurate? Is the content valuable? Does the content reflect the author's own ideas?" Detectors answer none of them.

How to Protect Your Content from False AI Detection

Whether you're a student, freelancer, business owner, or publisher, being falsely flagged by an AI detector can have serious consequences. The following methods can help safeguard your work.

1. Document Your Writing Process

The best way to refute a false accusation is to demonstrate your process. Don't just write and submit the final document; keep drafts, use version control, save your research notes, and preserve the timestamps on your work. Google Docs version history, Git commits for technical writing, and even screenshots of your notes can serve as evidence that the work is authentically yours.
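If you write outside tools that keep version history for you, a small script can add a similar paper trail. The following Python sketch appends a timestamped SHA-256 fingerprint of a draft to a log file each time you run it. The function name, log file name, and log format are hypothetical choices for illustration, and a log kept on your own machine is supporting evidence rather than tamper-proof proof, so it works best alongside drafts and version history.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def record_snapshot(draft_path, log_path="writing_log.jsonl"):
    """Append a timestamped SHA-256 fingerprint of the draft to a JSON-lines log."""
    data = Path(draft_path).read_bytes()
    entry = {
        "file": str(draft_path),
        "sha256": hashlib.sha256(data).hexdigest(),
        "bytes": len(data),
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
    # One JSON object per line, so the log grows with every writing session.
    with open(log_path, "a", encoding="utf-8") as log:
        log.write(json.dumps(entry) + "\n")
    return entry
```

Calling `record_snapshot("essay_draft.txt")` at the end of each session (the file name is just an example) leaves a series of entries showing the document growing and changing over time.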

2. Vary Your Writing Style Intentionally

Since detectors flag regular, routine texts, you can reduce the risk of false positives by varying sentence structure in a more human way, mixing short and long sentences, and adding unexpected words, personal stories, or first-person narration. Our guide to content humanization strategies provides detailed techniques for natural variation that doesn’t feel forced.

3. Test Across Multiple Detectors

No single detector should be treated as an authority. If you are worried about being flagged, run your text through several detectors. Wide disagreement among them is itself a sign of the technology's unreliability. For proven techniques to optimize your text across detectors, explore our advanced rewriting techniques.
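One way to record that disagreement is to note each detector's score for the same text and summarize the spread. The Python sketch below assumes you have copied the scores by hand as probabilities between 0 and 1. The detector names, the scores, and the 0.5 flagging threshold are all hypothetical, and no real detector API is called.

```python
def summarize_detector_scores(scores, threshold=0.5):
    """Summarize how much a set of detector scores for one text disagree.

    scores maps a detector name to its reported probability that the text is AI.
    """
    if not scores:
        raise ValueError("scores must not be empty")
    flagged = sorted(name for name, score in scores.items() if score >= threshold)
    spread = max(scores.values()) - min(scores.values())
    return {
        "flagged": flagged,
        "spread": spread,
        # True only when every detector lands on the same side of the threshold.
        "unanimous": len(flagged) in (0, len(scores)),
    }


# Hypothetical scores for a single essay from three made-up detectors.
example = {"detector_a": 0.12, "detector_b": 0.87, "detector_c": 0.45}
print(summarize_detector_scores(example))
```

In this made-up example only one of three detectors flags the text and the scores span most of the scale, which is the kind of inconsistency worth saving as evidence if a single score is later used against you.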

4. Understand Your Rights

Multiple universities and institutions, including MIT Sloan and the University of Kansas, have issued guidance concluding that AI detector scores should not be used as standalone evidence in academic misconduct cases. If you’re accused, know that the burden of proof typically lies with the accuser, and detector scores alone are increasingly considered insufficient evidence.

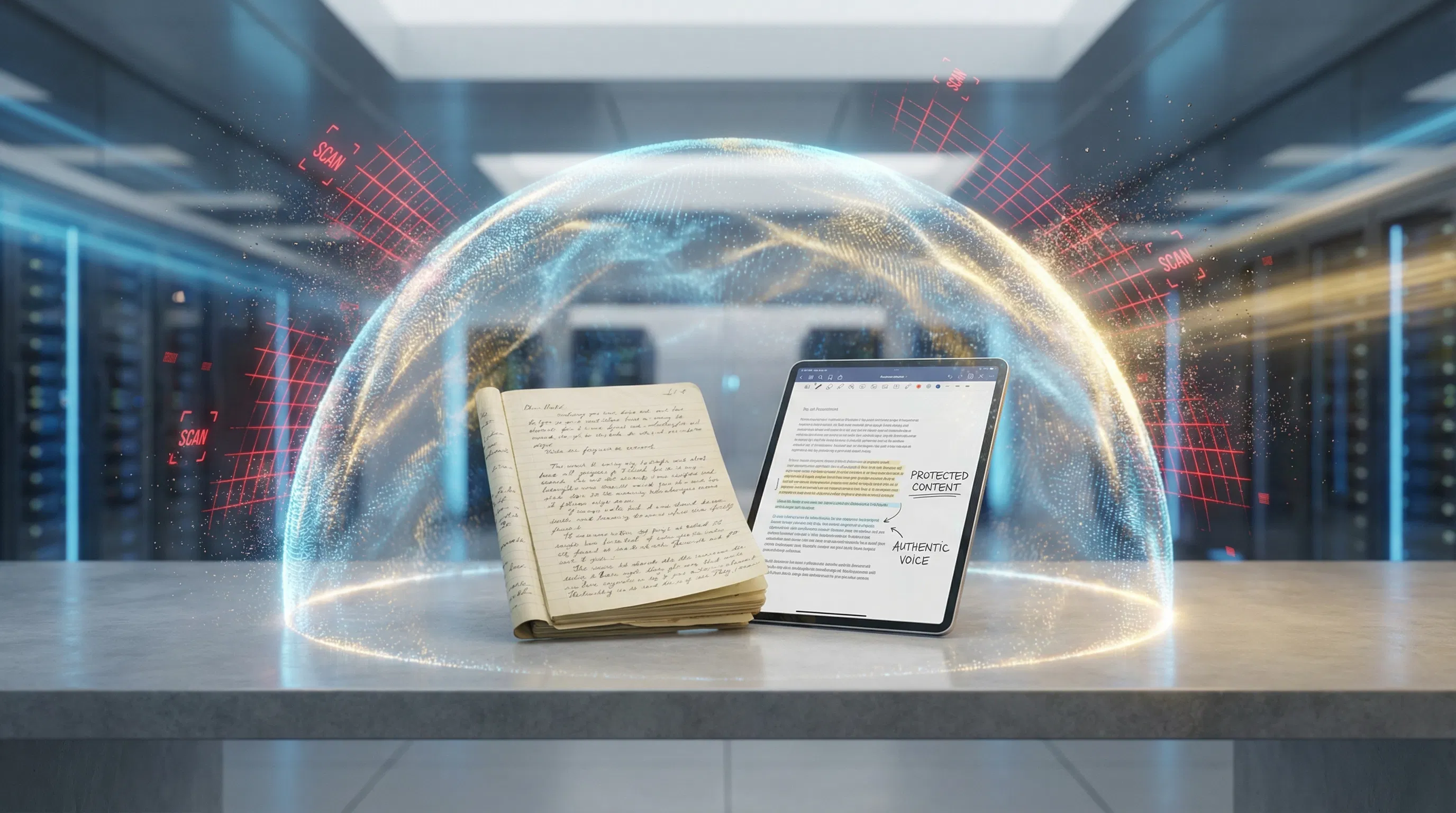

5. Use Professional Content Optimization Tools

Tools like BestHumanize are designed not to help people cheat, but to help legitimate content creators protect their work from the flawed detection systems documented throughout this article. By optimizing natural linguistic variation in your content and adjusting perplexity and burstiness to reflect authentic human writing patterns, these tools serve as a defense mechanism against unreliable technology.

The BestHumanize Approach: Protection, Not Deception

BestHumanize exists because AI detection technology is fundamentally broken, and real people are suffering real consequences from its failures. Our automated humanization platform is built on three principles:

Unlimited Access for Content Optimization

BestHumanize operates on an unlimited access model because protecting your content shouldn’t be rationed. Whether you’re a student submitting assignments, a freelancer delivering to clients, or a marketing team scaling content production, the platform provides unrestricted access to content optimization tools that ensure your human-authored work reads the way it should—naturally, dynamically, and authentically.

Expert Marketplace for Quality Assurance

Our marketplace helps you connect with domain-specific editors and content specialists for human review and refinement. This is not about making AI content look like it was written by humans; it’s about making sure your content, no matter how it was created, is of the highest quality, accuracy, and readability.

Compliance and Integrity Integration

BestHumanize integrates originality verification, citation management, and industry compliance checks directly into the content workflow. We believe that ethical content creation and protection from flawed detection systems are not mutually exclusive; they’re complementary. Our tools help you maintain integrity while defending against technology that too often lacks it.

The Ethical Framework: Transparency in an AI-Assisted World

This article isn’t an argument for deception. It’s an argument for fairness.

The AI detection tools currently on the market aren’t capable of reliably distinguishing between human and machine writing. They unfairly target non-native English speakers, formal writers, and anyone whose writing happens to match the incomplete data the algorithm was trained on. Using such tools for anything from academic integrity to professional credibility to content publishing is, in itself, an ethical failure. For a deeper exploration of these principles, see our ethical AI usage guidelines.

The path forward is clear and requires three elements: transparency about AI use in content creation, recognition of the limitations of detection technology when making high-stakes decisions, and the availability of tools to help content creators protect their work from unfair algorithmic judgment.

Frequently Asked Questions

Are AI detectors accurate in 2026?

The accuracy of AI detectors varies widely. Independent tests show that paid AI detectors range from 65% to 90% accuracy, while free ones like GPTZero score lower. The headline accuracy figures can also be misleading: even if a detector's overall false-positive rate is low, thousands of misidentifications will still occur given the volume of documents scanned. No major institution recommends using AI detectors as the sole basis for a misconduct finding.

Why do AI detectors flag human-written content?

Perplexity, which measures word predictability, and burstiness, which measures sentence variation, are the two metrics used by the AI detectors. Any writing style that yields low scores on these metrics, such as technical, academic, legal, and even ESL writing, can lead to false positives. The detectors aren’t detecting AI; they're detecting a writing style shared by AI and some human writing.

Do AI detectors discriminate against non-native English speakers?

Yes. A peer-reviewed Stanford study (Liang et al., 2023) found that seven widely used AI detectors misclassified 61.3% of TOEFL essays by non-native English speakers as AI-generated, with 19% flagged unanimously by all detectors. This bias occurs because non-native speakers tend to use simpler vocabulary and more predictable sentence structures, the same patterns that detectors associate with AI output.

What happened to OpenAI’s AI classifier?

OpenAI launched its AI Text Classifier in January 2023 and shut it down in July 2023, less than six months later, citing “low rate of accuracy.” The tool correctly identified only 26% of AI-written text while falsely flagging 9% of human-written text. OpenAI, the creator of ChatGPT, acknowledged that reliably detecting AI-generated text remains an unsolved problem.

How can I protect my content from false AI detection?

Document your writing process with draft histories and version control. Vary your sentence structures naturally. Test across multiple detectors to expose inconsistencies. Understand your institutional rights. Most universities now recognize that detector scores alone are insufficient evidence. Consider using content optimization tools like BestHumanize that adjust your text’s linguistic patterns to reflect natural human variation. For more strategies, see our guide on reliable detection protection.

Should AI detectors be used as evidence of academic misconduct?

No. Multiple institutions and independent studies, including guidance from MIT Sloan and the University of Alberta, conclude that AI detector scores should not serve as standalone evidence. The error rates are too high, false positives are well documented, and the tools exhibit known biases. A detection score is a signal for investigation, not a verdict.

What is the difference between perplexity and burstiness?

The perplexity of a piece of writing is a measure of the predictability of the word choices in the writing. The lower the perplexity, the more predictable the language. Burstiness is the measure of the variation in sentence structure and length. The lower the burstiness, the more regular the sentence structure. The scores for AI writing on both measures are low, as they are for formal academic writing, technical writing, and non-native speakers. Scribbr’s guide offers a detailed technical breakdown of these concepts.

How does BestHumanize help with AI detection issues?

BestHumanize helps legitimate content creators protect their work from false AI detection flags by optimizing the natural linguistic variation in their text. The platform adjusts perplexity and burstiness patterns to reflect authentic human writing, provides access to expert human editors for quality assurance, and integrates compliance checks to maintain content integrity. Learn more about our automated humanization platform.