ZeroGPT is one of the most popular free AI content detectors in the world, scanning tens of millions of texts per month as of 2026. Because it requires no sign-up and imposes no word limit, it is the first port of call for writers, students, and content professionals who want to check AI-assisted content. Scoring 0% on ZeroGPT, which means the tool judges the content to be entirely human-written, is a meaningful real-world benchmark. It is not easy to reach with just any humanizer, especially since the ZeroGPT model update. It takes a tool that understands what ZeroGPT actually detects and targets those signals instead of rearranging words. Testing of ZeroGPT accuracy and how humanizers perform against it in 2026 confirms that ZeroGPT relies primarily on perplexity scoring and burstiness analysis, two statistical signals that measure how predictable word choices are and how much sentence length varies across a document. These are also the precise signals that effective AI humanizers target. Understanding this connection is the starting point for evaluating any humanizer: a tool that claims to score 0% on ZeroGPT should be able to explain, at least in general terms, how it addresses these two signals.

This guide covers how to evaluate and choose an AI humanizer that consistently delivers 0% on ZeroGPT. It explains what ZeroGPT measures and why some humanizers beat it while others do not, the five criteria that distinguish reliable tools from ineffective ones, the most important red flags to avoid, how to run a proper pre-purchase test on any tool, and what a 0% ZeroGPT score means in the context of a broader multi-detector workflow.

Why ZeroGPT Is the Right Starting Benchmark, and Its Limits

ZeroGPT is the correct place to begin testing an AI humanizer for two reasons. It is free to use without any word limit, which makes it feasible to test different tools on substantial content. Its approach is also transparent, based on perplexity and burstiness without any deep learning model, so you can reason about why a given tool passed or failed against it. An analysis of what separates effective humanizers from generic paraphrasers in 2026 points out an important limitation that affects how a ZeroGPT score should be interpreted: ZeroGPT is one of the easier detectors to evade because it relies on superficial statistical indicators that humanizing tools are specifically designed to manipulate. Passing ZeroGPT at 0% does not mean you will pass GPTZero at 85% or Originality.ai at 90%; it means only that you have succeeded in manipulating the most superficial signals. Starting with ZeroGPT is correct. Ending the evaluation after a 0% result on ZeroGPT is incorrect.

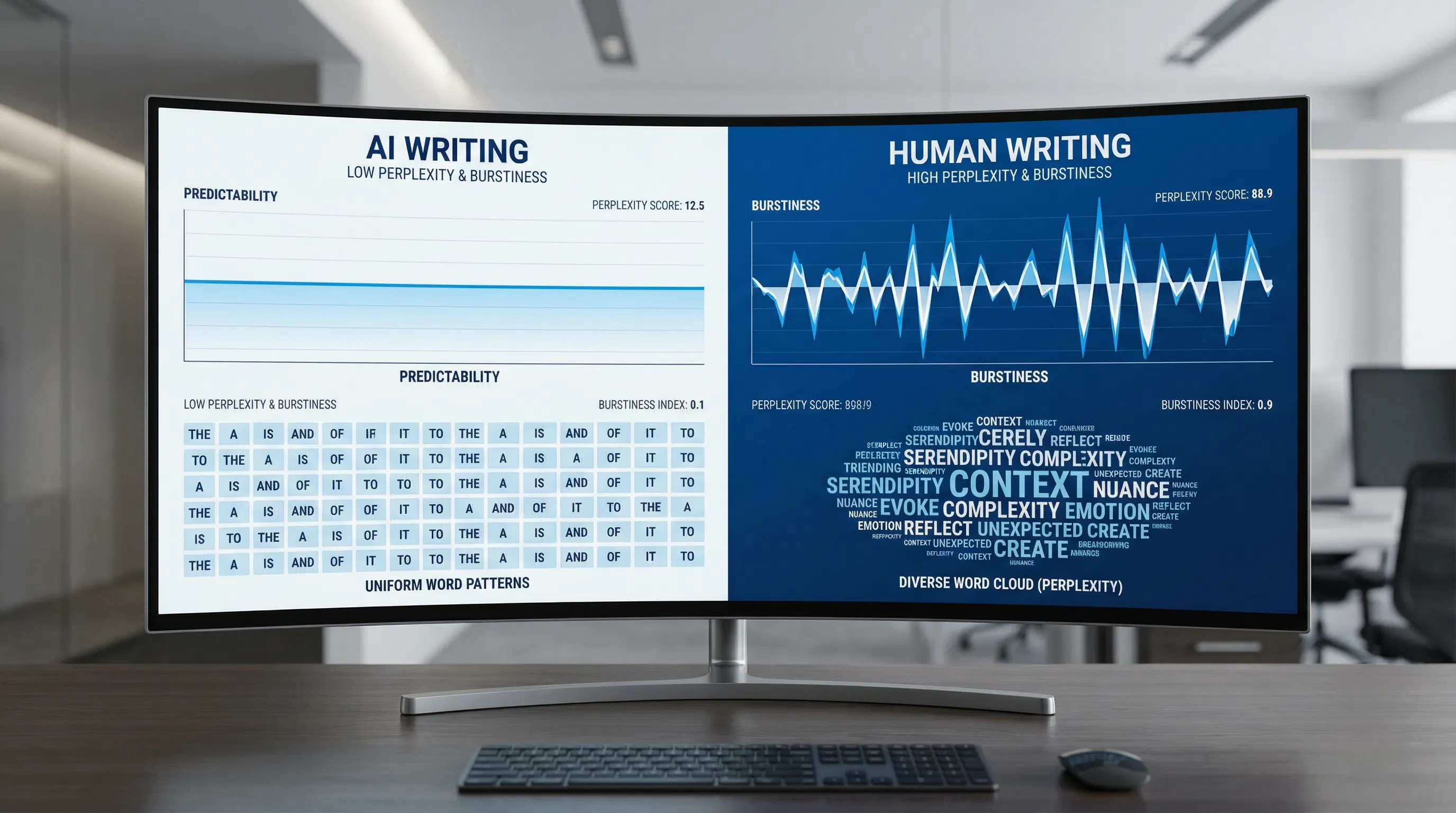

What ZeroGPT Actually Measures: Perplexity and Burstiness

The key is understanding what ZeroGPT actually measures. It does not read for meaning, evaluate the quality of arguments, or verify factual content. It measures two statistical characteristics that tend to differ between AI-written and human-written content. The first is perplexity: how surprising each word is given the words that came before it. AI models write by favouring statistically likely next words at each point, so their writing tends to have very low perplexity. Human writing is full of surprising, context-dependent word choices, which results in higher perplexity. The second is burstiness: how much sentence length and structure vary across the content. AI writing tends towards sentences of similar length and structure; human writing mixes short, simple sentences with long, complex ones. Independent testing of ZeroGPT accuracy and what its scores represent, carried out through 2026, puts the real-world accuracy of ZeroGPT between 70% and 85%, considerably lower than its claimed 98%. The same testing shows that even a single pass of paraphrasing is enough to shift AI scores substantially, which supports the view that ZeroGPT leans heavily on perplexity and burstiness cues that purpose-built humanizers find easy to manipulate.
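To make the two signals concrete, here is a minimal sketch of how they could be computed on a passage. It is an illustration only, not ZeroGPT's actual method, which is not public. The function names are hypothetical, burstiness is approximated as the coefficient of variation of sentence length, and perplexity is computed under a toy unigram model with add-one smoothing rather than the neural language model a real detector would likely use.

```python
import math
import re
from collections import Counter
from statistics import mean, pstdev


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! or ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Assumed proxy: std dev of sentence length divided by the mean length."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return pstdev(lengths) / mean(lengths)


def unigram_perplexity(text: str, reference: str) -> float:
    """Toy perplexity of `text` under a unigram model fit on `reference`.

    Add-one smoothing gives unseen words a small non-zero probability.
    """
    ref_tokens = reference.lower().split()
    counts = Counter(ref_tokens)
    vocab_size = len(counts) + 1  # one extra slot for any unseen word
    total = len(ref_tokens)
    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one word")
    log_prob = sum(
        math.log((counts[t] + 1) / (total + vocab_size)) for t in tokens
    )
    return math.exp(-log_prob / len(tokens))
```

A passage whose sentences all have the same length gets a burstiness of 0.0 under this proxy, while a passage that mixes one-word and ten-word sentences scores well above it. Likewise, words that the reference text never uses push the toy perplexity up.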

Why Some Humanizers Beat ZeroGPT and Others Do Not

The main difference between humanizers that consistently receive a 0% rating on ZeroGPT and those that do not lies in how deeply they rewrite. Synonym substitution tools, although frequently presented as humanizers, swap individual words for synonyms without altering the structure of the sentence, the ordering of clauses, or the overall rhythm. This has a minor effect on perplexity by adding uncommon vocabulary, but it has no effect on burstiness and frequently creates unnatural wording, which can itself look anomalous. Purpose-built humanizers restructure sentences, choose vocabulary in context rather than by synonym lookup, and reorder clauses to break the predictability that is characteristic of AI. An analysis of what an acceptable AI detection score means and how humanizers achieve it confirms that detection scores may move by 30 to 60 percentage points with relatively modest stylistic changes. This accounts for the consistent 0% scores of quality humanizers versus the varied results from generic paraphrasers. The message for tool selection is clear: ask whether the tool describes its approach in terms of sentence structure variation and burstiness, as opposed to vocabulary diversification alone. A tool that only discusses synonym replacement is unlikely to deliver consistent 0% scores.

The Five Criteria That Actually Distinguish Reliable Humanizers

When assessing whether an AI humanizer performs consistently against ZeroGPT, it is necessary to look beyond the tool's own marketing claims about bypass rates. Those claims cannot be verified and are usually measured on a single piece of content. The following five criteria can all be tested on content you provide yourself. A tested ranking of AI humanizers by bypass rate and output quality in 2026 evaluated twelve tools on bypass rate, readability, meaning preservation, speed, and price; each tool was run three times and the results averaged, and the outputs were read manually to check for meaning drift and grammatical errors that automated tests do not pick up. The five criteria below cover the dimensions that matter most for ZeroGPT performance. Each is discussed with specific advice on testing it yourself before committing to a tool.

Bypass rate on ZeroGPT. This is the primary criterion, but it needs to be tested on a meaningful-length passage, not a 50-word excerpt. Take a 400 to 500 word sample generated by a current AI model such as ChatGPT, Claude, or Gemini, run it through ZeroGPT before humanization to confirm it scores at 90% or above, humanize it with the tool under evaluation, and check the ZeroGPT score on the humanized output. A reliable tool should drop the score below 10% on the first pass on most content types, not just on the sample the tool uses on its own demonstration page.

Meaning preservation. Read the humanized output carefully and compare it to the original. Every key claim, piece of evidence, and conclusion in the original should be present in the humanized version. Aggressive rewriting that achieves a 0% ZeroGPT score by replacing your arguments with generic filler has not solved your problem; it has created a different one. This criterion requires human judgment and cannot be delegated to an automated test.

Output naturalness. Read the humanized text aloud. Natural human writing has varied rhythm: some short sentences, some longer ones, idiomatic phrasing, occasional asides, and contextually motivated vocabulary. AI-generated text and poorly humanized text both sound wrong when read aloud, for different reasons: AI text sounds too smooth and uniform, while over-humanized text sounds like it was translated through a thesaurus. A tool that achieves 0% on ZeroGPT but produces text that no human would plausibly have written has not solved the underlying problem for any use case where a human reader will also evaluate the content.

Multi-detector consistency. A 0% score on ZeroGPT from a tool that only manipulates perplexity and burstiness will not transfer to GPTZero, which uses a seven-component detection system including a deep learning classifier, or to Originality.ai, which uses a different model architecture optimised for web content. Run the humanized output through all three before trusting a tool. A genuinely effective humanizer achieves low scores across all major detectors, not just the easiest one.

Consistency across content types. Test the tool on more than one content type: an academic essay, a professional article, and a technical explanation. Tools optimised for one content type often fail on others. A humanizer that achieves 0% on blog post copy but scores 45% on academic writing is not a reliable tool for anyone who writes across multiple content types.

| Evaluation Criterion | What to Test | Green Flag | Red Flag |
| --- | --- | --- | --- |
| Bypass rate on ZeroGPT | Paste a 500-word GPT-4 essay, humanize it, check ZeroGPT score | Score drops from 95%+ to under 10% on first pass | Score stays above 30%, or tool claims success without showing a verifiable result |
| Multi-detector consistency | Run the same humanized output through GPTZero and Originality.ai | All three detectors agree the output is human-written | Passes ZeroGPT but flags at 60%+ on GPTZero or Originality.ai; indicates surface-level manipulation only |
| Meaning preservation | Read the output and compare it to the original argument | Every key claim, evidence point, and conclusion survives intact | Arguments are missing, changed, or replaced with generic filler; the humanizer has rewritten your content, not improved it |
| Output readability | Read the humanized text aloud | Sounds natural; sentence rhythm varies; vocabulary is contextually appropriate | Sounds like a thesaurus exploded; sentences are identical length; transitions are mechanical or missing |
| Free tier adequacy | Test with a 300-500 word passage on the free plan | Meaningful free test with no word limit that forces you to pay before you can see results | Only processes 50-100 words on free tier; too short to evaluate real detection performance |
| Consistency across runs | Humanize the same text three times on the same settings | Each pass produces different output; all three pass detection | Output is nearly identical across runs; indicates synonym substitution rather than genuine rewriting |
| Grammar and error rate | Check the output with a grammar tool after humanization | Fewer than two or three minor errors per 500 words | Multiple grammatical errors per paragraph; tool has introduced noise to evade detectors at the cost of quality |
| Update frequency | Check the tool's changelog or release history | Model updates documented in the past 90 days | No changelog; no published update history; no mention of adapting to detector updates |
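Testing across content types and repeated runs produces a small grid of scores that is easy to misread by eye. The sketch below shows one way to average them, assuming you have noted each ZeroGPT score by hand. The function names and the 10% limit as a default parameter are illustrative choices taken from the bypass-rate criterion above, not part of any tool's API.

```python
from statistics import mean


def mean_score_by_type(runs: dict[str, list[float]]) -> dict[str, float]:
    """Average the ZeroGPT scores (percent AI) recorded for each content type."""
    return {content_type: mean(scores) for content_type, scores in runs.items()}


def failing_types(runs: dict[str, list[float]], limit: float = 10.0) -> list[str]:
    """Content types whose average score is not under `limit` percent."""
    averages = mean_score_by_type(runs)
    return sorted(t for t, avg in averages.items() if avg >= limit)


# Hand-recorded scores from three runs per content type (made-up numbers).
runs = {
    "blog": [0.0, 2.0, 1.0],
    "academic": [45.0, 40.0, 50.0],
    "technical": [5.0, 8.0, 6.0],
}
print(failing_types(runs))
```

With these made-up numbers only the academic sample fails, which is the pattern described under consistency across content types: a tool that looks reliable on blog copy but not on academic writing.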

How to Set Up a Proper Pre-Purchase Test in Under Ten Minutes

A structured pre-purchase test takes less than ten minutes and eliminates the most common mistakes in evaluating a humanizer. Generate a 400-word piece with the same AI model you actually use in your workflow, on a topic similar to the ones you usually write about. Check the original's ZeroGPT score to confirm it is above 90%, then humanize it on the free version of the tool you are testing and check the new score. If the tool does not offer a worthwhile free version, treat that as a warning sign: a tool that demands payment before it has proven anything may not be able to deliver consistent results on unfamiliar content. A review of the best free AI humanizers that offer genuine testing capability in 2026 assessed the quality of free access and found that the best tools offer enough of it to conduct a meaningful test, rather than restricting free use to 80 to 100 words, which is not sufficient to show any statistical trend. After testing on the free plan, read the text aloud, check that the meaning is intact, and test the output with GPTZero and Originality.ai. Within ten minutes, and without paying a single dollar, you can have a full picture of what the tool can really offer.

Red Flags: What to Avoid When Choosing a Humanizer

The AI humanizer market in 2026 includes a large number of tools that market themselves aggressively on the basis of 0% detection claims but deliver inconsistent results in practice. Several patterns reliably identify tools that are unlikely to perform as advertised. A tested ranking of free AI humanizer tools by bypass rate and output quality read the outputs manually for meaning drift, awkward phrasing, and grammar errors that automated bypass testing cannot catch, and found that many tools producing 0% ZeroGPT scores on demonstration content produced unreadable or meaning-changed output on independent test passages. The red flags that predict this outcome are identifiable before purchase.

Self-reported 0% bypass claims without verifiable third-party testing. A tool that posts screenshots of its own tool showing 0% ZeroGPT is not providing independent evidence. Look for independent reviews that tested the tool with their own content.

Free tier capped below 150 words. A passage under 150 words is too short for ZeroGPT to produce reliable results in either direction. Any tool that caps free testing at this length is deliberately preventing you from evaluating its real performance.

Identical output on repeated runs. If you humanize the same text three times and get near-identical output each time, the tool is performing rule-based synonym substitution rather than genuine rewriting. This type of tool may pass ZeroGPT on the first run but will produce predictable patterns that more sophisticated detectors identify.

Grammar errors introduced by humanization. Some tools introduce deliberate noise, grammatical irregularities, and unusual phrasing to evade detection by increasing apparent unpredictability. This tactic does raise perplexity scores, but it produces text that is flagged immediately by any human reader. A humanizer that improves detection scores at the cost of readability has solved the wrong problem.

No changelog or model update history. Detector models update quarterly. A humanizer that does not update its own model in response will fall behind. A tool with no published update history cannot demonstrate that it has adapted to ZeroGPT's most recent changes.

Passes ZeroGPT only. If a tool's documentation or reviews only ever mention ZeroGPT bypass and never discuss GPTZero or Originality.ai, the tool has likely been optimised specifically for ZeroGPT's perplexity and burstiness signals without building the deeper structural rewriting that more sophisticated detectors require.
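The repeated-run red flag above can be checked mechanically. The sketch below compares the outputs of several runs word by word using Python's standard `difflib.SequenceMatcher`. The function names and the 0.9 similarity threshold are assumptions chosen for illustration; the right cut-off for your own content may differ.

```python
from difflib import SequenceMatcher
from itertools import combinations


def pairwise_similarity(outputs: list[str]) -> float:
    """Mean word-level similarity ratio (0.0 to 1.0) over all pairs of outputs."""
    pairs = list(combinations(outputs, 2))
    if not pairs:
        raise ValueError("need at least two outputs to compare")
    ratios = [
        SequenceMatcher(None, a.split(), b.split()).ratio() for a, b in pairs
    ]
    return sum(ratios) / len(ratios)


def looks_like_synonym_swapping(outputs: list[str], threshold: float = 0.9) -> bool:
    """Flag a tool whose repeated runs are near-identical (assumed threshold)."""
    return pairwise_similarity(outputs) >= threshold
```

Paste the three humanized versions of the same passage into a list and pass it to `looks_like_synonym_swapping`. A result of `True` suggests the runs differ by only a few words, which is the pattern this red flag describes.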

The Critical Distinction: Paraphrasers vs Purpose-Built Humanizers

The most common mistake in selecting a humanizer is choosing a paraphrasing tool and expecting it to behave like one. Paraphrasing tools are optimized for producing a differently worded version of a text; humanizers are optimized for changing the statistical properties of a text that detectors measure. A paraphraser can reword a passage, but it does not deliberately shift the passage's perplexity or burstiness. A Stanford study on AI detector bias and what human writing actually looks like statistically established the statistical profile of human writing across various populations. It found that genuine human writing has significantly variable sentence length, vocabulary driven by context rather than statistical preference, and high burstiness, not as a result of any manipulation but as an inherent property of how humans think and express themselves. A good humanizer will mimic these properties in its output. A paraphraser is an algorithm that adds synonyms. The consequence of selecting a paraphraser is output that ZeroGPT may fail to flag consistently but that more sophisticated models can still detect.

Why Multi-Detector Testing Determines Whether a Tool Is Actually Reliable

A 0% rating on ZeroGPT is a good starting point but is clearly insufficient on its own. ZeroGPT uses a DeepAnalyse classification algorithm to measure perplexity and burstiness but is one of the more permissive detectors available. GPTZero uses a seven-part system that includes not just perplexity and burstiness analysis but also a deep learning classifier, an education domain classifier, a sentence-level classifier, and Paraphraser Shield, which is specifically aimed at humanized AI text. Originality.ai has a different model architecture geared towards web content and updates its model on a quarterly basis. Copyleaks claims a false positive rate of one in ten thousand, which it says is industry-leading. If a humanizer can fix both perplexity and burstiness, it will pass ZeroGPT. If a humanizer can perform deep structural changes targeting all statistical signals, it stands a far better chance against all four detectors. An explanation of how perplexity and burstiness work and why both must be addressed by effective humanizers describes these two signals as the first layer of detection for all of the commercial tools, including ZeroGPT, Copyleaks, Originality.ai, and even older versions of GPTZero. Any tool seeking to humanize text therefore has to contend with them. However, a tool that works only at this level will not get past the deep learning classifier layer of GPTZero.

What a 0% ZeroGPT Score Actually Means for Your Real-World Workflow

A 0% score on ZeroGPT means that the tool's perplexity and burstiness analysis did not detect any statistically significant AI fingerprint in the text at the time of the scan. It does NOT mean the text will score 0% on any other tool. It does NOT mean the text will score 0% after the next ZeroGPT model update. And it does NOT mean that a human reader, especially one familiar with AI writing patterns, will miss stylistic fingerprints that ZeroGPT missed. A consistent 0% score from a reliable humanizer is sufficient protection for any workflow where ZeroGPT is the only tool in use. Where GPTZero, Turnitin, or Originality.ai is also in use, a consistent 0% score is just one part of the verification process. Testing of GPTZero detection accuracy and how ZeroGPT compares as a standalone tool confirms that people who use ZeroGPT as a first-pass tool rather than a final verdict are using it correctly, and that GPTZero's accuracy on hybrid and paraphrased content is substantially higher than ZeroGPT's in independent testing. For any professional application in which detection has consequences, the goal is a humanizer that achieves 0% on ZeroGPT and less than 15% on both GPTZero and Originality.ai.

Addressing False Positive Risk: When the Problem Is Your Writing, Not AI Use

One of the most significant but overlooked use cases for AI humanizers is reducing false positives on genuinely human-written content. ZeroGPT's false positive rate, meaning the probability that it incorrectly identifies human-written content as AI-generated, ranged from 15% to 25% in independent testing in 2026, depending on the nature of the content. Formal academic writing, heavily edited professional writing, ESL writing, and technical documentation tend to have lower perplexity and burstiness than conversational writing, so they tend to be classified as AI-likely even when they were created entirely by human authors. An analysis of ZeroGPT's accuracy and how its false positive rate affects different writing styles documents that detection relies on surface-level patterns that are easily manipulated in either direction: AI text with minimal editing passes the test, and human text with low perplexity and burstiness triggers it. As a result, a humanizer can be a legitimate tool for writers whose natural style is misidentified as machine-generated, because it adds the variation in sentence length and the vocabulary diversity that the detector looks for.

How ESL Writers Can Use Humanizers to Protect Against Systematic False Flags

There is a demonstrated structural disadvantage for ESL writers. ESL writing tends to have lower lexical diversity and more predictable syntax than fluent native English writing. This is not because ESL writers use AI tools; it is a natural consequence of writing in a second language, which draws on a more limited vocabulary and range of syntax. The model used by ZeroGPT cannot tell whether low perplexity comes from AI generation or from second-language writing. Several independent studies have demonstrated systematic false identification of ESL writing. A guide to AI content detector false positives and how to protect your original writing confirms that false positive rates for ESL writers remain high across all tools, and it offers advice on what to do when original content is flagged as machine-written. By using a humanizer to introduce sentence-level variation and vocabulary diversity into accurate, original ESL writing, the writer is using the technology to correct a bias in the tools, not to pass off machine-written content as human-written. The same structural changes that a humanizer makes to machine-written content can also bring an ESL writer's original content closer to the structure and patterns of native English writing.

What Makes a Humanizer Stay Reliable as ZeroGPT Updates Its Model

ZeroGPT updates its detection model periodically to improve coverage of new AI models and new evasion techniques. A humanizer that scores 0% reliably today but does not update its own model in response will fall behind ZeroGPT's improvements over time. This is the same detection lag dynamic that affects all detection tools, applied in reverse: just as detectors lag behind new AI generation models, humanizers lag behind detector updates if they do not actively maintain their rewriting approach. ZeroGPT's evolution over time and what has changed in its detection methodology documents that ZeroGPT has evolved substantially since its 2023 launch, adding features and updating its DeepAnalyse model, and that each update changes what the tool identifies as AI-typical. The practical implication for humanizer selection is to prefer tools that document their update history and explicitly describe their approach to keeping pace with detector changes. A tool that last updated its model in 2023 is not a reliable choice for achieving consistent 0% scores on ZeroGPT's 2026 model.

How to Tell Whether a Humanizer Is Keeping Pace with Detector Changes

Three signs indicate that a humanizer is being actively maintained in response to detector updates. The first is a published changelog or release history that lists model updates, with the most recent one within the past 60 to 90 days. The second is that the tool's own documentation, such as its blog, discusses specific detector updates and how the tool has responded to them. The third is that reviews from the past 30 to 60 days show steady bypass rates rather than declining ones. A tool whose latest reviews are six months old and whose changelog ends in 2024 has clearly not kept up with ZeroGPT and its competitors, which update their detectors every quarter. An analysis of the false positive problem in AI detection and what it means for humanizer evaluation confirms that the detection landscape changes frequently enough that a tool's 2024 performance is not a reliable predictor of its 2026 performance without evidence of active maintenance. The most reliable humanizers in 2026 are those whose development teams treat detector updates as a continuous obligation, not a one-time engineering problem.

Applying These Criteria: How to Choose and Use the Right Tool

BestHumanize, a tool for consistently scoring 0% on ZeroGPT and other major detectors, was built around the same parameters outlined in this guide: significant structural modification targeting both perplexity and burstiness instead of synonym replacement, consistent performance across ZeroGPT, GPTZero, and Originality.ai, preservation of meaning by keeping your original arguments intact, and a transparent free tier that lets you test your actual content before committing. The basic process is the same for any humanization tool, including this one. Create your content, check its original ZeroGPT score to verify that it actually requires humanization, humanize it, check the score on the humanized version, read the humanized version for meaning and naturalness, and then verify it against a second detector. The process takes less than five minutes per document and has a verifiable outcome at every step.

The Complete Five-Step Evaluation Workflow Before Committing to Any Tool

Step one: Generate a 400 to 500-word text with the AI model you actually use, on a subject relevant to your real use case.

Step two: Run the text through ZeroGPT and verify that it scores above 90%.

Step three: Humanize the text using the free tier of the tool you are evaluating and check the ZeroGPT score of the humanized text. If the score does not drop below 10%, eliminate the tool from consideration.

Step four: If the text passed the previous step, test it in GPTZero and Originality.ai. If either detector scores it above 25%, the tool has been optimized for ZeroGPT and cannot be considered reliable.

Step five: Read the humanized text aloud and compare it to the original. If the tool has introduced grammatical mistakes, changed your arguments, or produced text that sounds unnatural, eliminate it.

A GPTZero review covering accuracy, features, and how it compares to ZeroGPT provides useful context on how GPTZero's detection differs from ZeroGPT's and why passing both is a stronger indicator of humanizer quality than passing ZeroGPT alone. A tool that passes both consistently, produces natural output, preserves meaning, and documents regular updates is a tool worth paying for.
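The five steps reduce to a short decision procedure once the scores are written down. The sketch below encodes the thresholds stated above (above 90% before humanizing, below 10% on ZeroGPT afterwards, no more than 25% on GPTZero and Originality.ai). The `TrialScores` class and `evaluate` function are hypothetical names, and the scores are assumed to be entered by hand from each detector's results page; no detector API is called.

```python
from dataclasses import dataclass


@dataclass
class TrialScores:
    """Detector scores (percent AI) read off each tool's result page by hand."""
    zerogpt_before: float
    zerogpt_after: float
    gptzero_after: float
    originality_after: float
    meaning_preserved: bool  # step five, judged by a human reader
    reads_naturally: bool    # step five, judged by reading aloud


def evaluate(trial: TrialScores) -> str:
    """Apply steps two to five in order and return the first failing verdict."""
    if trial.zerogpt_before <= 90:
        return "invalid test: baseline sample does not score above 90%"
    if trial.zerogpt_after >= 10:
        return "eliminate: ZeroGPT score did not drop below 10%"
    if max(trial.gptzero_after, trial.originality_after) > 25:
        return "unreliable: optimized for ZeroGPT only"
    if not (trial.meaning_preserved and trial.reads_naturally):
        return "eliminate: output failed the manual read"
    return "candidate: passed all five steps"


print(evaluate(TrialScores(95, 4, 12, 8, True, True)))
```

The order of the checks mirrors the order of the steps, so the returned message also tells you at which step the tool dropped out.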

Conclusion

To select an AI humanizer that reliably scores 0% on ZeroGPT, you need to understand what ZeroGPT actually tests, check that the tool carries out deep structural rewriting instead of synonym replacement, test it with your own content before making a purchase, and check that its 0% ZeroGPT scores carry over to GPTZero and Originality.ai. The five criteria to use in the evaluation are bypass rate, meaning preservation, naturalness of the output, consistency across multiple detectors, and consistency across content types. The red flags to watch for are bypass claims with no independent testing behind them, a free version limited to content under 150 words, near-identical output on repeated runs, grammatical errors introduced into the humanized content, and the lack of an update history. A tool that passes this evaluation, achieving 0% on ZeroGPT and below 15% on GPTZero and Originality.ai, is worth using.

Frequently Asked Questions

Is it possible to guarantee 0% on ZeroGPT every time?

No humanizer can promise 0% on all content types at all times, because ZeroGPT updates its model periodically and different content types have different baseline burstiness profiles. Academic writing, technical documentation, and other forms of professional writing have lower baseline burstiness than conversational writing, so they score higher on ZeroGPT even when written by humans. The best a humanizer can promise is 0% on standard blog and article content, and less than 10% on other content types. Any tool promising 0% on all content types for all future versions of the ZeroGPT model is making a claim that cannot be kept, given that detection systems keep adapting.

Why does my humanized text score 0% on ZeroGPT but 70% on GPTZero?

This discrepancy almost always indicates that the humanizer you used manipulates perplexity and burstiness, which are ZeroGPT's primary signals, but does not address the deeper structural and stylistic patterns that GPTZero's seven-component detection system identifies. ZeroGPT's reliance on these surface-level signals makes it the easiest major detector to beat with basic humanization techniques. GPTZero's deep learning classifier and Paraphraser Shield feature catch humanized text that has only been perplexity-optimised. The solution is to switch to a humanizer that performs deep structural rewriting, produces genuinely variable sentence patterns, and is documented to achieve low scores across both ZeroGPT and GPTZero.

Can I use an AI humanizer on my own human-written text to avoid false positives?

Yes, and this is one of the most legitimate use cases for AI humanizers. ZeroGPT's false positive rate of 15% to 25% on human-written content, as independently tested and reported in 2026, means that a considerable share of legitimate human content is misidentified. Writers whose style is naturally formal, consistent, or predictable are affected, including those whose first language is not English and those who write mainly in formal or technical registers. Passing your original human content through a humanizer to add variation in sentence length and vocabulary is not an attempt to fool anyone; it is a way of working around an existing bias in detection technology.

How long should a test passage be for a valid humanizer evaluation?

A minimum of 300 words is required for a valid evaluation, and the ideal range is 400 to 500 words. Results are not reliable if the input is below 250 words, as stated in the ZeroGPT tool's own documentation. If the input is below 150 words, you are hardly testing the tool at all; you are only testing whether it can manipulate a few sentences. Tools that cap free testing at 80 to 100 words prevent you from testing their real capabilities. Most real content runs to at least 500 words, which is why 400 to 500 words is the standard range for testing before purchase.
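The length bands in this answer can be checked before you spend a free-tier run on a passage. This is a minimal sketch with a hypothetical function name; it counts whitespace-separated words, which may differ slightly from the count a detector reports.

```python
def passage_verdict(text: str) -> str:
    """Classify a test passage by word count using the bands given above."""
    words = len(text.split())
    if words < 150:
        return "too short to test anything"
    if words < 250:
        return "unreliable"
    if words < 300:
        return "below the 300-word minimum"
    if 400 <= words <= 500:
        return "ideal"
    return "acceptable"
```

Passages of 300 to 399 words, or longer than 500 words, come back as "acceptable": they meet the minimum but fall outside the ideal range.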

How often do I need to retest a humanizer I am already using?

Retest your humanizer after major updates to the ZeroGPT model, which happen periodically throughout the year. In between, run a spot check every 60 to 90 days by repeating the same method you used during your first evaluation: create fresh content with AI, humanize it with your tool, and compare the scores from ZeroGPT and GPTZero. If the scores have increased substantially since your last review, your humanizer has probably fallen behind the detector's update cycle, and it is time to check whether the tool has updated its model or to review alternatives.

This guide reflects the performance of AI humanizers, the detection approach used by ZeroGPT, and industry testing as of March 2026. The performance of detection tools and humanizers alike changes rapidly, so the information provided may become inaccurate as both sides continue to advance. Always test tools with your own content before making a buying decision. Use AI content tools responsibly and in accordance with the policies of the institutions or platforms you use.