Your content team writes every single word. The research is original, the arguments are human-created, and the brand voice is the result of years of editorial polish. Then a client runs your latest batch of blog posts through an AI detector and sends a screenshot: "87% likely AI-generated." The conversation that follows is awkward, time-consuming, and damaging to the relationship. The most frustrating part is that the detector is wrong. An analysis of why chasing AI detection scores can undermine content quality documents this dynamic precisely: agencies end up constantly defending false flags or editing to satisfy a detector's scorecard, burning hours trying to prove human authorship to tools that are not reliable enough to serve as final arbiters.

This guide is for content marketing teams, agencies, and freelance writers who have been flagged, want to respond effectively to a false flag, and want to implement workflow changes to prevent the problem from recurring. It covers why professional content is structurally more likely to trigger false positives than casual writing, the immediate response when a flag occurs, the root cause diagnosis that reveals which specific writing habits are driving scores, the prevention workflow that addresses each cause, and the longer-term process changes that protect your team's reputation without compromising content quality.

Why Professional Marketing Content Gets Flagged More Than Casual Writing

The counterintuitive truth about AI detection is that better writing often scores worse. Detection tools measure perplexity (how predictable the word choices are) and burstiness (how much sentence length varies), and professional content systematically scores low on both. It has been edited to a high standard of consistency. It follows brand voice guidelines that constrain vocabulary and tone. It has been through multiple grammar-checker passes that standardize phrasing. From a detection tool's perspective, each of these quality improvements strips out the idiosyncratic variation that distinguishes human writing from machine output. Real-world accuracy testing of AI detectors found that no tool exceeded 80% accuracy across varied content, and that false-positive rates were substantially higher for professional, formal writing than for casual text. In other words, your team's best work is the work most likely to be flagged.
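
For reference, the two signals are commonly formalized roughly as follows. Detection vendors do not publish their exact scoring, so read these as textbook definitions plus a simple proxy, not as any particular tool's formula. Perplexity is computed for a text of $N$ tokens $w_1,\dots,w_N$ under a language model $p$; burstiness is approximated here, as an assumption, by the coefficient of variation of sentence lengths $\ell_1,\dots,\ell_M$ measured in words:

$$
\mathrm{PPL}(w_{1:N}) = \exp\!\left(-\frac{1}{N}\sum_{i=1}^{N}\log p(w_i \mid w_{<i})\right)
$$

$$
B = \frac{\sigma_\ell}{\mu_\ell}, \qquad \mu_\ell = \frac{1}{M}\sum_{j=1}^{M}\ell_j, \qquad \sigma_\ell = \sqrt{\frac{1}{M}\sum_{j=1}^{M}\left(\ell_j-\mu_\ell\right)^2}
$$

Lower $\mathrm{PPL}$ means more predictable wording, and lower $B$ means more uniform sentence lengths. For example, sentences of 18, 19, 18, and 19 words give $\mu_\ell = 18.5$, $\sigma_\ell = 0.5$, and $B \approx 0.03$, while sentences of 6, 31, 12, and 25 words have the same mean but $\sigma_\ell \approx 9.96$ and $B \approx 0.54$.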

There is also the problem of format. Marketing content follows templates: introductions that establish context, bodies that develop an argument with supporting evidence, conclusions that summarize, and a call to action. AI language models were trained on the same genre of writing that produced these templates, so they generate content with the same structural patterns. When a detection classifier learns that AI output looks like well-structured, formally organized prose, it struggles to distinguish professional human writing from AI output. An explanation of why perplexity and burstiness fail to reliably detect AI in formal writing makes this concrete: well-edited human writing and AI output overlap so substantially in the same structural space that no threshold can cleanly separate them.

The Seven Root Causes for Content Teams

Most content team false positives trace to one or more of the following seven causes. Identifying which applies to your specific flagged content is the first step in both responding to the flag and preventing recurrence.

| Root Cause | Why It Affects Marketing Teams Specifically | Detection Signal Triggered |
| --- | --- | --- |
| Heavy grammar and style tool use | Marketing teams routinely pass drafts through Grammarly, Hemingway, and in-house style checkers. Each tool corrects idiosyncratic phrasing and regularizes sentence structure, removing the natural variation that distinguishes human writing from AI output. | Low perplexity (predictable word sequences after tool regularisation), low burstiness (sentence length standardised across revision passes) |
| Brand voice templates and style guides | Teams writing to a strict brand voice guide (defined tone, approved vocabulary, sentence-length rules) produce text with a characteristic consistency that is statistically similar to the output of AI models trained on formal writing. | Uniform register, restricted vocabulary range, low lexical diversity across the document |
| Highly polished final drafts | Professional content goes through multiple review passes, each removing rough edges, idiomatic phrases, and irregular constructions. The final version is maximally polished and statistically closest to AI output. | Low perplexity in the final draft: polishing reduces the surprisal values that mark human authorship |
| Short-form content (under 300 words) | Social copy, meta descriptions, email subject lines, and ad copy submitted for detection checks are too short for reliable statistical classification. Detection tools state minimum length requirements, yet they are still run on short content. | Insufficient text for statistical patterns to emerge reliably; results approach chance accuracy on short content |
| Standardised content formats | FAQ sections, product description templates, listicles with parallel structure, and technical specification copy all follow predictable formats that overlap with AI output patterns. | Structural template matching; parallel sentence structures interpreted as AI-typical uniformity |
| Topic-constrained vocabulary | Content on narrow technical topics uses a restricted vocabulary that signals subject-matter specificity, not AI generation. Detection tools trained on diverse writing can misread this low vocabulary range as AI output. | Low lexical diversity in subject-specific content; domain vocabulary is misread as constrained AI-style vocabulary |
| Cyborg writing practices | Any combination of AI drafting tools, outline generators, and human editing creates mixed-origin content. Even a single paragraph generated with AI assistance and edited by a human can elevate the overall AI score across the entire piece. | Mixed-document classification: AI-generated sentences raise the aggregate score even when surrounded by human-written content |

The Immediate Response: What to Do When You Are Flagged

First Principle: A detection flag is a statistical estimate generated by a tool with documented false-positive rates ranging from 2% to 28%, depending on content type. It is not a verdict on your team's integrity or your writers' work practices. Your response should be factual, measured, and grounded in evidence. The goal is to resolve the specific dispute, not to win an argument about whether detection tools are perfect.

Step 1: Neither Dismiss the Flag Nor Accept It Without Investigation

Both reactions are mistakes. Dismissing the flag without investigation damages your credibility if the content turns out to contain AI-generated material that should have been disclosed. Accepting the flag without evidence-based pushback lets an unreliable statistical estimate damage your team's reputation. The right first move is to investigate the flag yourself.

Step 2: Run the Flagged Content Through Multiple Tools

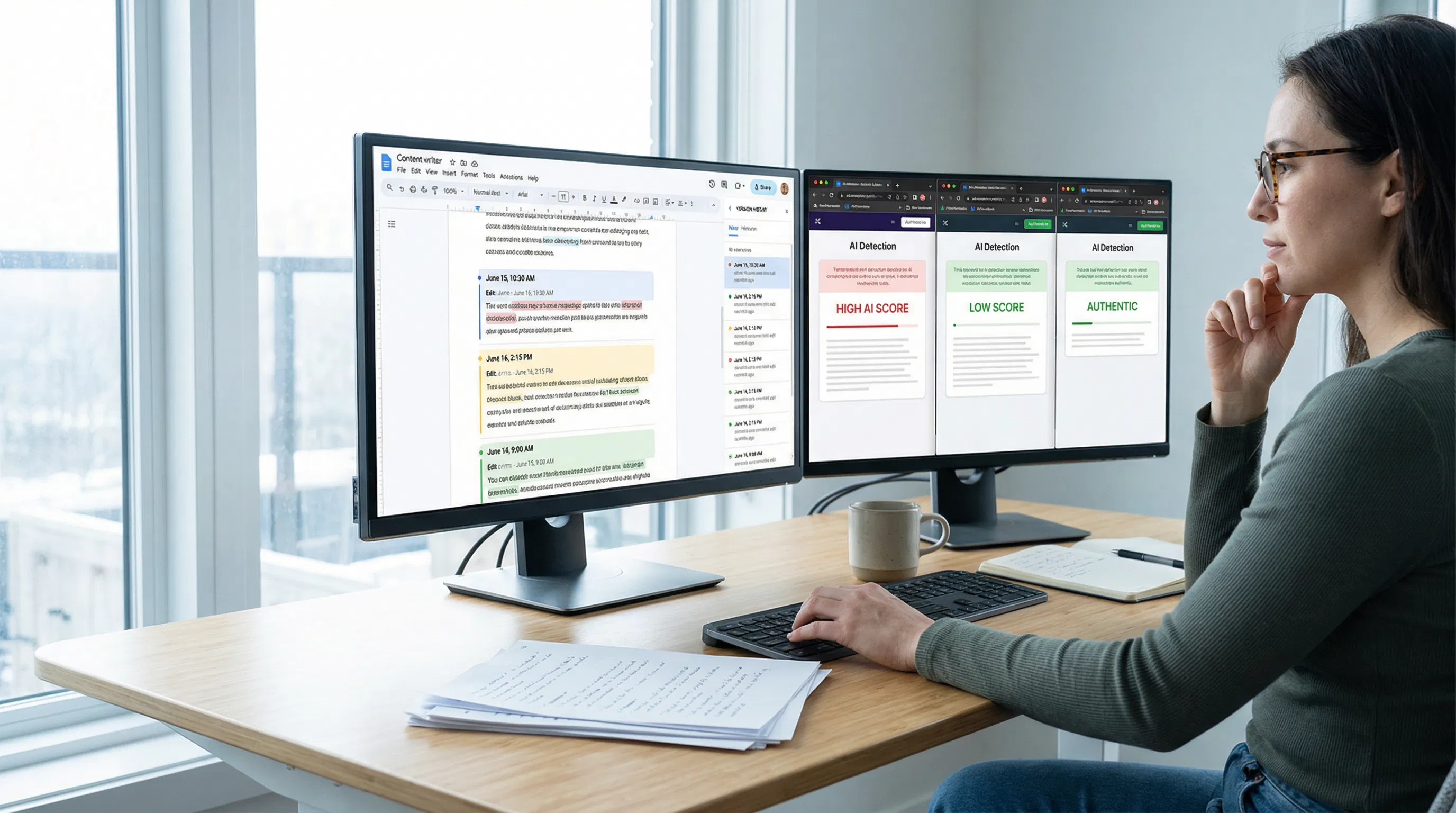

One of the strongest pieces of evidence in a false-flag dispute is disagreement between tools. If your client flagged the content at 87% with one tool, run the same content through two other tools that use different methodologies. If one tool flags it and the other two do not, that is evidence the flag reflects the tool rather than AI authorship. Analyses of how AI content detectors work, and why different tools produce inconsistent results, confirm that different detectors use different statistical approaches and return different results on the same content, which is precisely why no single tool's output should be treated as definitive.

A practical combination: GPTZero, whose sentence-level output shows exactly which content is flagged; Copyleaks, which has shown one of the lowest false-positive rates in independent testing; and a third tool of your choice. Take screenshots that show the date, tool name, and score for each run. If the results are inconsistent, include all three and set them beside your client's screenshot.
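
Once you have the scores, a few lines of code can summarize the disagreement for your evidence package. This is a minimal sketch, not a detector integration: the scores are typed in from each tool's report, and the `DetectorResult` type, the `summarize_disagreement` function, and the 0.5 "flagged" cutoff are hypothetical choices (tools label their scores differently).

```python
from dataclasses import dataclass


@dataclass
class DetectorResult:
    tool: str        # tool name as it appears in your screenshot
    ai_score: float  # reported AI likelihood, converted to 0.0-1.0
    run_date: str    # date of the run, kept for the evidence record


def summarize_disagreement(results, flag_threshold=0.5):
    """Summarize whether several detectors agree on the same text.

    flag_threshold is a hypothetical cutoff for "this tool flagged it".
    """
    if not results:
        raise ValueError("need at least one detector result")
    flagged = [r.tool for r in results if r.ai_score >= flag_threshold]
    not_flagged = [r.tool for r in results if r.ai_score < flag_threshold]
    scores = [r.ai_score for r in results]
    return {
        "flagged_by": flagged,
        "not_flagged_by": not_flagged,
        "spread": round(max(scores) - min(scores), 2),
        # Disagreement means at least one tool on each side of the cutoff.
        "tools_disagree": bool(flagged) and bool(not_flagged),
    }


if __name__ == "__main__":
    # Placeholder scores for illustration only, not real test results.
    runs = [
        DetectorResult("Tool A (client's)", 0.87, "2026-03-02"),
        DetectorResult("Tool B", 0.12, "2026-03-03"),
        DetectorResult("Tool C", 0.20, "2026-03-03"),
    ]
    print(summarize_disagreement(runs))
```

With those placeholder scores, only the first tool counts as flagging the text and the scores span 0.75, which is the kind of inconsistency worth showing the client.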

Step 3: Pull Your Version History and Process Documentation

Your best proof of real authorship is not an argument about how accurate a detection tool is. It is a time-stamped record of your creation process. If you draft in Google Docs, open the version history of the flagged document (File > Version history > See version history) and take screenshots showing how the document developed over time across multiple versions. Guidance on AI detection false positives and on version control as evidence of authentic authorship agrees that version history showing incremental development is the most compelling evidence for resolving false-positive disputes, because AI-generated content appears as a single large paste rather than as incremental composition.

The evidence package to prepare: screenshots of version history showing time stamps from multiple editing sessions; the initial draft from before grammar tools were applied; any research notes, outlines, or annotations; and the brief or creative direction you worked from. A document that evolved over three sessions on three different days could not have been produced by AI in a single session.

Step 4: Identify and Address the Specific Root Cause

Review the sentence-by-sentence breakdown and you will usually find that the flagged sentences fall into two types: passages where sentence length varies little, and sentences that use one or more "high-frequency AI vocabulary" fingerprints ("leverage," "underscores," "furthermore," "pivotal," "it is worth noting," "robust," and "comprehensive"). Both are fixable. Revise the suspect sentences: vary sentence length in uniform-length passages, replace fingerprint vocabulary, and add a specific detail to generic passages. Then run the detection tool again to confirm that the AI-likelihood score has dropped.
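
The vocabulary half of that review is easy to script. A minimal sketch, assuming a naive sentence splitter (it will mis-split abbreviations such as "e.g.") and using only the fingerprint terms listed above; `find_fingerprint_sentences` is a hypothetical helper, not part of any detector.

```python
import re

# Fingerprint terms listed in this guide; extend the tuple with your own findings.
FINGERPRINTS = (
    "leverage", "underscores", "furthermore", "pivotal",
    "it is worth noting", "robust", "comprehensive",
)


def split_sentences(text):
    """Naive splitter: breaks after ., ! or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def find_fingerprint_sentences(text, fingerprints=FINGERPRINTS):
    """Return (sentence_index, sentence, matched_terms) for each suspect sentence."""
    # Whole-word, case-insensitive match for each term or phrase.
    patterns = {
        term: re.compile(r"\b" + re.escape(term) + r"\b", re.IGNORECASE)
        for term in fingerprints
    }
    hits = []
    for i, sentence in enumerate(split_sentences(text)):
        matched = [term for term, pattern in patterns.items() if pattern.search(sentence)]
        if matched:
            hits.append((i, sentence, matched))
    return hits
```

Whole-word matching means "leverage" does not catch "leveraging"; add inflected forms to the tuple if your drafts use them.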

Step 5: Present Your Evidence and Offer the Revised Version

Contact the client with a factual message such as: "The tool you used has a known false-positive rate. I ran this text through two other tools, which returned different results. Here is my version history showing how I drafted the text incrementally over three sessions, and here is a revised version that reworks the passages your tool incorrectly flagged." This message offers a solution rather than an apology, and it frames the revision as a quality improvement rather than an admission of wrongdoing.

The Prevention Workflow: Seven Changes That Stop False Flags Before They Start

With the immediate problem settled, the next task is to change the workflow so the same problem does not recur in the next delivery. The seven measures below address the causes in the table above. None requires a significant workflow overhaul; most are simple behavioral adjustments that together add roughly 10 to 15 minutes to each piece. Guidance on QA workflows for AI-generated and AI-assisted content confirms that the most effective quality assurance for content teams combines automated checks against specific, measurable criteria with human review of what statistical tools cannot assess reliably, including voice, specificity, and process documentation.

| Prevention Measure | What It Addresses | How to Implement |
| --- | --- | --- |
| Pre-publish multi-tool detection check | Catches flaggable content before it reaches the client, allowing revision without a dispute | Run all final drafts through at least two detection tools (GPTZero's free tier for sentence-level output, plus one other tool). Flag anything scoring above 30% for review and revision before submission. |
| Sentence rhythm audit on every draft | Corrects low burstiness caused by grammar tool standardisation and style guide conformity | Before final submission, scan each draft for runs of three or more consecutive sentences of similar length. Insert a short emphatic sentence, or extend one sentence with a subordinate clause, to restore rhythm variation. |
| AI vocabulary fingerprint pass | Removes the specific words and phrases that neural classifiers flag most consistently | After completing the draft, search for "delves," "leverages," "underscores," "showcases," "pivotal," "crucial," and "robust," as well as stock phrases such as "furthermore" and "in today's rapidly evolving world." Replace each with a direct, specific alternative. |
| Version history requirement for all deliverables | Creates defensible process evidence for any flag that slips through pre-publish checks | Require all writers to draft in Google Docs, which records version history automatically. Include a link to the version history in every delivery. This takes thirty seconds and provides permanent evidence of incremental human composition. |
| First-draft preservation rule | Preserves the idiosyncratic voice of the first draft before grammar tools regularise it | Instruct writers to save a named copy of their first draft before running it through Grammarly or any other style tool. That copy shows the natural voice that polishing removes. |
| AI use disclosure protocol | Prevents the cyborg writing problem from creating undisclosed mixed-origin content | Define clearly which AI tools are permitted for which tasks (outline generation, research summaries, translation assistance), which require disclosure in the delivery, and which are prohibited. Ambiguity about AI tool use creates both ethical and detection risks. |
| Specificity injection requirement | Raises perplexity by replacing generic claims with specific, verifiable details | Add a final checklist item before submission: every paragraph must contain at least one specific data point, named source, concrete example, or first-person observation. Replace generic attribution ("many experts believe") with the specific source. |
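
The specificity requirement can be pre-screened before a human reviewer reads the draft. The sketch below is a crude heuristic under stated assumptions: paragraphs are separated by blank lines, a digit or a first-person word stands in for "data point or first-person observation," and every phrase in `GENERIC_ATTRIBUTIONS` other than "many experts believe" is an illustrative guess. It cannot recognize named sources or concrete examples, so treat its output as a reading list, not a verdict.

```python
import re

# "many experts believe" comes from the checklist; the rest are illustrative guesses.
GENERIC_ATTRIBUTIONS = (
    "many experts believe", "studies show", "research suggests", "experts agree",
)
DIGIT = re.compile(r"\d")
FIRST_PERSON = re.compile(r"\b(?:i|we|our|my)\b", re.IGNORECASE)


def specificity_report(text):
    """Return one entry per paragraph that needs a human look.

    A paragraph is flagged if it uses a generic attribution, or if it has
    no digit and no first-person word (a rough proxy only).
    """
    report = []
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    for i, para in enumerate(paragraphs):
        lowered = para.lower()
        generic = [g for g in GENERIC_ATTRIBUTIONS if g in lowered]
        no_marker = not DIGIT.search(para) and not FIRST_PERSON.search(para)
        if generic or no_marker:
            report.append({
                "paragraph": i,
                "generic_attributions": generic,
                "no_specific_marker": no_marker,
            })
    return report
```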

Addressing the Grammar Tool Problem Specifically

Grammarly-driven false positives deserve a brief discussion of their own, because they are so common among content teams and the fix is counterintuitive. Grammarly, Hemingway, and similar tools are genuinely helpful for grammar, clarity, and consistency. What is less obvious is that they also regularize sentence structure in a way that lowers perplexity and makes your text statistically more similar to a model's output. The solution is not to stop using these tools; it is to build three habits around them.

Save a copy of every draft before it goes through Grammarly, and label it clearly, for example "Article First Draft Pre-Grammarly." If the post-Grammarly version is ever flagged, you still have proof of the human voice that polishing removed.

After running Grammarly, perform a manual burstiness test. Grammar tools tend to standardize sentence length, splitting long sentences and merging short ones. Scan the post-Grammarly version for any paragraph in which the sentences are all roughly the same length, and add one noticeably longer or shorter sentence to it.
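
The manual burstiness test, and the sentence rhythm audit in the prevention table, can be partly automated by listing runs of similar-length sentences for a writer to revise. A minimal sketch, assuming word count as the length measure, a naive sentence splitter, and a three-word tolerance (an arbitrary starting point to tune against your own drafts). The scan is greedy, so it reports non-overlapping runs and may miss a run that straddles a break point.

```python
import re


def sentence_lengths(text):
    """Word counts per sentence, using a naive split after ., ! or ?."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def uniform_runs(lengths, min_run=3, tolerance=3):
    """Greedy scan for runs of at least `min_run` consecutive sentences whose
    word counts stay within `tolerance` words of each other (max - min).

    Returns non-overlapping (first, last) sentence-index pairs, inclusive.
    """
    runs = []
    start = 0
    for j in range(1, len(lengths) + 1):
        if j < len(lengths):
            window = lengths[start:j + 1]
            if max(window) - min(window) <= tolerance:
                continue  # sentence j still fits the current run
        # The run lengths[start:j] ends here; keep it if it is long enough.
        if j - start >= min_run:
            runs.append((start, j - 1))
        start = j
    return runs


if __name__ == "__main__":
    draft = ("Our team drafted this post by hand. Every line went through three edits. "
             "Each edit tightened the core argument here. Why? Because clients notice "
             "sloppy writing fast, and they remember it for a long time afterward.")
    lengths = sentence_lengths(draft)
    print(lengths, uniform_runs(lengths))
```

On that sample, the sentence lengths are 7, 6, 7, 1, and 15 words, and `uniform_runs` reports sentences 0 through 2 as one uniform run; the short "Why?" that follows is the kind of break the test asks writers to add.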

Run the fingerprint vocabulary pass after the grammar tool, not before. A grammar tool's rewording suggestions sometimes replace a plain word with one of the common AI fingerprint words, so a pass done earlier can be quietly undone. Review the tool's suggestions instead of accepting them blindly.
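
Because the order matters, it helps to compare the saved pre-Grammarly copy with the post-Grammarly version and list any fingerprint words whose count went up. A minimal sketch, assuming plain-text exports of both versions and the single-word fingerprints from the vocabulary pass in the prevention table; multi-word phrases would need a separate check, and `introduced_by_edit` is a hypothetical helper.

```python
import re
from collections import Counter

# Single-word fingerprints from the vocabulary pass in this guide.
FINGERPRINTS = ("delves", "leverages", "underscores", "showcases",
                "pivotal", "crucial", "robust")


def fingerprint_counts(text, fingerprints=FINGERPRINTS):
    """Count each fingerprint word in text (case-insensitive, whole words only)."""
    words = Counter(re.findall(r"[a-z']+", text.lower()))
    return {term: words[term] for term in fingerprints if words[term]}


def introduced_by_edit(before, after, fingerprints=FINGERPRINTS):
    """Fingerprint words whose count increased from `before` to `after`,
    e.g. the saved pre-Grammarly copy versus the post-Grammarly version."""
    old = fingerprint_counts(before, fingerprints)
    new = fingerprint_counts(after, fingerprints)
    return {term: count - old.get(term, 0)
            for term, count in new.items() if count > old.get(term, 0)}
```

Any word this reports was added during editing, which points you straight to the suggestions worth rejecting.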

Building a Team-Wide Process That Scales

Individual writers defending their own work is part of the solution, but the complete solution is team-wide: process documentation and pre-publish detection checks become a normal part of content creation rather than a crisis reaction to a single alert. The most defensible process combines automated checks of specific, measurable factors with human review of what detection tools cannot evaluate, such as voice consistency, factual specificity, and process documentation.

Create a Team Content Process Document

Write a one-page internal document that details your team's content production process: where you create content, which tools you use, how you research, how many passes each piece goes through, and what each pass involves. This serves two purposes. It establishes content production as a deliberate, documented workflow rather than a collection of individual habits, and it gives you a way to demonstrate your team's authorship whenever a client or reviewer questions how you create content. A team that can point to a written process is far more credible than one that answers a flag with "We wrote it, we promise."

Standardise Version History Requirements

Make drafting in Google Docs, with its version history, a hard requirement for all client work, not a suggestion. Brief your writers on how to confirm that every piece is drafted there; how to name key versions of a piece (first draft, after research review, after client brief review, and final); and how to capture version history screenshots on request. It takes less than five minutes of your team's time to secure permanent, automatically dated documentation of original composition for every piece your team produces.

Add a Pre-Submission Detection Check to Your QA Checklist

Consider adding a pre-submission step to your content QA checklist: run every final draft through GPTZero before delivery and review any passage flagged at 30% or higher. It takes about three minutes per piece and can keep fixable false-positive triggers from reaching the client. The point is not to hit a target score. It is to spot passages that are statistically "AI-like" and decide whether each is a genuine improvement opportunity (more specific, more varied in rhythm, more human in voice) or is fine as written and simply reflects the tool's limits on formal writing. An AI text humanizer tool for content teams can help with the mechanical work of varying sentences and diversifying vocabulary in passages that consistently flag, freeing the writer to focus on the specific details and personal voice that improve content quality rather than just detection scores.
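
A lightweight way to record this step is to log each passage's score and let a script apply the 30% review rule, along with the under-300-words caveat from the root-cause table. This sketch deliberately calls no detector API: the scores are entered by hand from whatever passage-level output your tool shows, and the `Passage` type and `presubmission_check` function are hypothetical.

```python
from dataclasses import dataclass

REVIEW_THRESHOLD = 0.30   # "review any passage flagged at 30% or higher"
MIN_RELIABLE_WORDS = 300  # short-form caveat from the root-cause table


@dataclass
class Passage:
    text: str
    ai_score: float  # passage score copied from your detector, as 0.0-1.0


def presubmission_check(passages):
    """Summarize which passages need human review and whether the piece is
    long enough for the scores to be worth much."""
    total_words = sum(len(p.text.split()) for p in passages)
    to_review = [i for i, p in enumerate(passages) if p.ai_score >= REVIEW_THRESHOLD]
    return {
        "total_words": total_words,
        "short_content": total_words < MIN_RELIABLE_WORDS,
        "review_passages": to_review,
    }
```

A `short_content` result of `True` is a cue to treat every score on that piece with extra skepticism rather than to revise it.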

Build a Client Communication Template for False Positive Scenarios

Draft a client communication template before you need one. It should explain what an AI detection false positive is, why professionally edited content is more likely than casual writing to trigger a detector, what evidence your team holds to prove authorship, and how your team investigated and addressed the specific flag. With the template ready, you can answer a flag quickly and professionally instead of reacting slowly while still working out what happened.

What to Do When a Client Insists on Detection Compliance

Some clients will not be satisfied by an explanation of false-positive rates and your evidence process. They will require that all delivered content score below a set threshold on a specific tool. Whether to accept that requirement is a business decision each team must make for itself, but the technical approach to meeting it without compromising content quality is straightforward: fix the specific triggers identified in the pre-publish check (uniform sentence rhythm, fingerprint vocabulary, and generic claims without specifics), use a humanizer tool as a final mechanical pass to remove any remaining statistical patterns, and rerun the check to confirm compliance.

The key distinction is between passing a detection threshold because you have made the content better (more varied in rhythm, more specific in its evidence, more authentic in its voice) and passing it by adding artificial variation that degrades quality. The first is always the right thing to do. The second is a short-term fix that weakens your content's performance and creates a different kind of problem. Strategy, keyword intent, and value-driven messaging deliver more results than optimizing for an AI detection score. Detection tools were never meant to dictate content quality, and basing your standards on them means writing for a statistical model rather than for a human reader.

Conclusion

Content marketing teams face a built-in disadvantage with AI detection tools. The very practices that produce polished, high-quality content, such as grammar tools and style guides, also reduce statistical diversity, which detectors read as a sign of AI generation. The answer is not to stop producing high-quality content. It is to build specific, documented habits that restore the natural variation that polished production tends to remove, and to respond to flags with the evidence-based confidence that comes from knowing exactly what your process is and being able to prove it.

Frequently Asked Questions

Why is our team's high-quality content flagging as AI when we wrote every word?

Professional content can trigger AI detection for structural reasons that have nothing to do with AI. Grammar tools normalize sentence structure and standardize sentence length, reducing the statistical variation that detection software associates with human writing. Style guides limit vocabulary and tone, producing the same kind of consistency found in AI-generated text. Review cycles strip out idiomatic phrases and unconventional sentence structures. The result is content that statistically resembles AI output even though a human wrote it. This is well documented: testing has found higher false-positive rates for professionally written, formal content than for other types of content.

Does using Grammarly trigger AI detection flags?

It can contribute to them. Beyond fixing grammar and readability, Grammarly normalizes phrasing and sentence length and replaces idiomatic phrases with more conventional wording. These changes reduce perplexity (word choice becomes more predictable) and burstiness (sentence length becomes more uniform), the two metrics AI detectors primarily look at. The effect is strongest when a document has been heavily edited and most of Grammarly's sentence-structure suggestions have been accepted. The fix is to keep a copy of the document from before Grammarly, run a burstiness check afterward, and reject suggestions that introduce AI-fingerprint vocabulary.

What evidence is most effective when challenging a false positive flag with a client?

Three types of evidence tend to be most effective. The first is a multi-tool comparison: if two or three different tools return different results on the same document, the original flag cannot be treated as reliable proof of AI composition. The second is version history: a Google Doc whose history shows it being written over several days demonstrates incremental human composition. The third is process documentation: a first draft, research notes, or annotations show the intellectual process behind the document. Evidence wins.

How long does it take to implement a false positive prevention workflow?

Once established, the core habits add roughly 10 to 15 minutes per piece. Drafting in Google Docs so that version history is captured takes about 30 seconds to set up. A pre-submission detection scan takes three to five minutes. A burstiness check and vocabulary pass take 5 to 10 minutes on a typical piece. Saving the first draft takes 30 seconds. That is a small price compared with the time a single false-positive dispute consumes. Training the team on these habits takes about an hour of group time, and the benefit carries over to every piece after that.
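
Adding up the component estimates shows where the headline figure comes from. A small worked tally, using only the per-step ranges given in this answer:

```python
# Per-piece estimates from the answer above, in minutes: (low, high).
steps = {
    "Google Docs version history": (0.5, 0.5),
    "pre-submission detection scan": (3, 5),
    "burstiness check and vocabulary pass": (5, 10),
    "first-draft preservation": (0.5, 0.5),
}

low = sum(lo for lo, _ in steps.values())
high = sum(hi for _, hi in steps.values())
print(f"{low:g} to {high:g} minutes per piece")  # prints "9 to 16 minutes per piece"
```

The extremes span 9 to 16 minutes, so "roughly 10 to 15 minutes" describes a typical piece rather than a hard ceiling.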

Should we disclose when we use AI tools at any point in the content process?

The answer depends on your contracts with clients, your team's internal policies, and the specific use. Most client relationships carry explicit or implicit assumptions about AI use, and those are better clarified proactively than discovered through a dispute over detection. If your team uses AI tools at any phase of the content process, whether for research, summarization, outline generation, translation assistance, or draft generation, tell clients which uses are allowed and which require disclosure. Leaving AI tool use undefined in a client relationship exposes you to both ethical and detection risks. A clear disclosure of limited, specific AI use, backed by solid proof of substantial human editorial input, is a stronger position than unacknowledged AI assistance that surfaces through a detection flag.

This guide is based on current technology and content-team best practices as of March 2026. Detection tool accuracy, false-positive rates, and vocabulary fingerprint patterns will continue to change as generative technology advances. The workflow above is designed to address the underlying structural causes of false positives, which apply across platforms and content types.