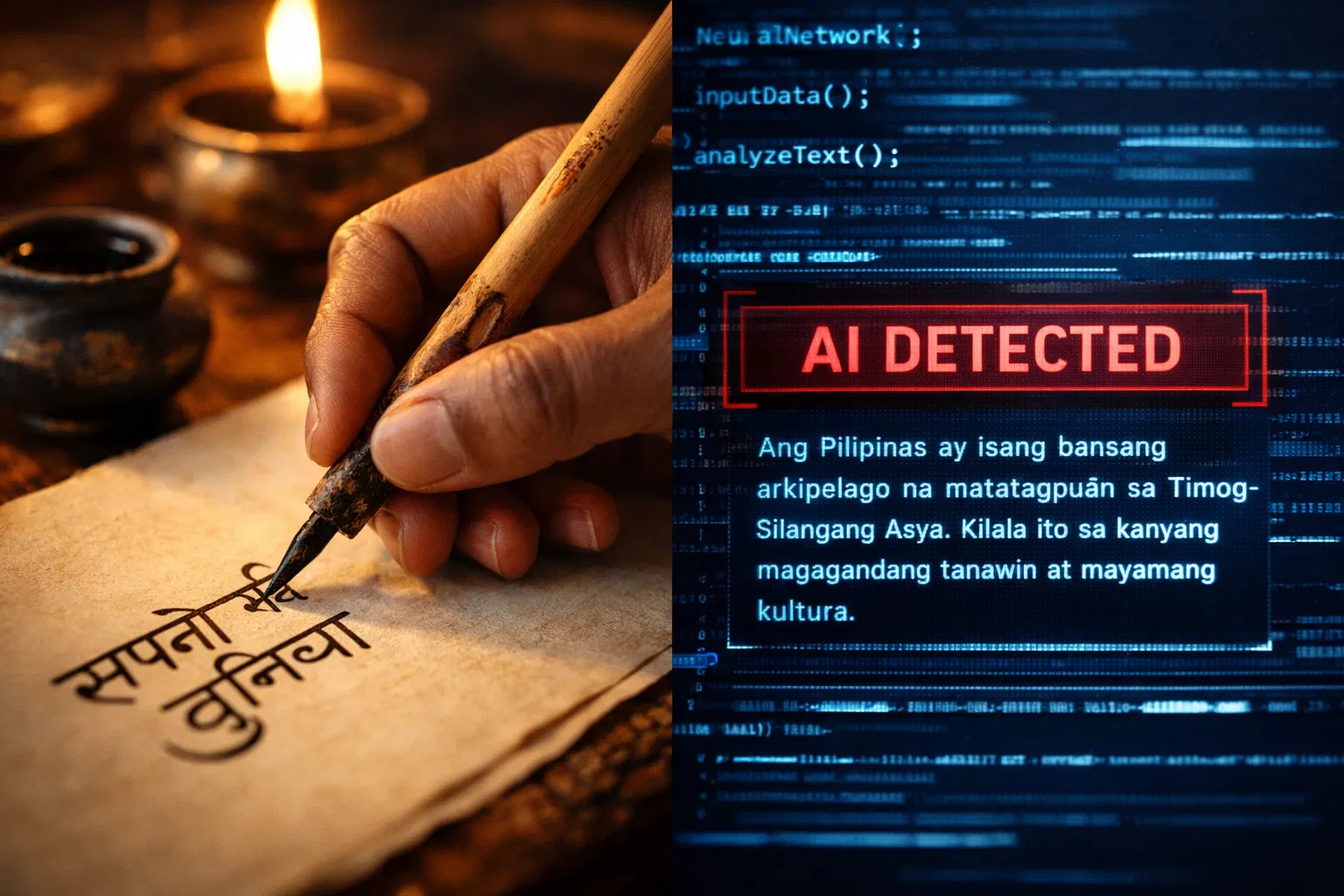

Most AI detection tools were built by teams working in English, trained on English datasets, and benchmarked against English writing. That is not a conspiracy against non-English writers. It is simply how the field developed. English dominates the internet, English text is easier to source in large quantities, and English-speaking institutions were the first to panic about AI cheating. The result is a global detection infrastructure that works reasonably well in English and considerably less well everywhere else.

The consequences are real. Students writing in Spanish, Tagalog, or Hindi face a system calibrated for a language they are not using. The statistical patterns detectors look for in AI output, including low perplexity and low burstiness, mean different things in different languages. A detector trained on English cannot reliably apply English-derived thresholds to Hindi prose written in the Devanagari script. The Stanford ESL bias study, published in the journal Patterns, found that seven widely used AI detectors classified more than 61 percent of TOEFL essays written by non-native English speakers as AI-generated, while achieving near-perfect accuracy on essays written by US eighth graders. The structural bias is not subtle.

This article walks through what the research actually shows about AI detection performance in Spanish, Tagalog, and Hindi, why each language presents distinct challenges, and what multilingual writers can do to protect their work. An AI text humanizer built to adjust the statistical properties that detectors measure is one practical tool, but understanding the underlying problem is the starting point.

Key Takeaways

AI detectors are substantially less accurate in non-English languages. Spanish achieves F1 scores of around 0.87, compared with 0.95 for English. Tagalog detection accuracy falls to 0.27 to 0.47 in independent benchmarks. Hindi accuracy is estimated at 75-85% under optimal conditions, lower for edited or mixed content.

The gap is structural, not accidental. Most detection models are trained on English-dominant datasets. The perplexity thresholds, stylometric baselines, and transformer embeddings they use are calibrated on English writing patterns. Applying them to other languages introduces systematic miscalibration.

Non-native English writers bear a double burden. When writing in English as a second language, they produce lower-perplexity text that gets flagged as AI. When writing in their first language, they use detectors that were never properly trained on that language. Neither situation is fair or accurate.

Tagalog and Filipino face the sharpest accuracy problem of the three languages covered here. Filipino is a low-resource language in NLP terms, meaning that limited annotated data exists to train detection models. Research published in 2025 found that seven large language models tested on Filipino news classification achieved accuracy scores of only 0.27-0.47.

Hindi presents unique technical challenges through its Devanagari script. Tokenization, which is the foundational step in any NLP pipeline, behaves differently for scripts that are not Latin-based. This introduces errors that compound through every subsequent detection step.

The best-performing multilingual tools in 2026 include Originality.ai 2.0.0 with 97.8 percent accuracy across 30 languages, and Pangram, which claims 99 percent accuracy across 20 languages. Winston AI explicitly lists Tagalog as a supported language. However, independent benchmarks consistently show performance gaps compared to English. Tools designed to humanize AI content are valuable precisely because the detection landscape is uneven: a tool that adjusts the text's statistical profile can help writers regardless of which detector their institution uses.

Why AI Detection Underperforms Beyond English

Before examining each language individually, it helps to understand why non-English detection is systematically harder. The problems are not random. They follow from how detection systems are built.

Training Data Imbalance

AI detection models learn from examples. Feed a model thousands of examples of AI-generated English text alongside thousands of examples of human-written English text, and it learns to distinguish them. The problem is that large, labeled, balanced datasets of this kind exist primarily in English. For Spanish, the data is thinner. For Tagalog and Hindi, it is much thinner. A model trained on insufficient data for a given language cannot generalize reliably to that language's writing patterns. Multilingual benchmark results bear this out: a 2023 Stanford and UC Berkeley study testing the GPT-2 Output Detector on 100,000 texts found F1 scores of 0.95 for English, 0.87 for Spanish, and 0.82 for Chinese. The 13-point gap between English and Chinese is consistent with the difference in the depth of the training data.

Tokenization Problems for Non-Latin Scripts

Modern transformer-based detectors use subword tokenizers like Byte-Pair Encoding or WordPiece to convert text into units a model can process. The vocabularies of these tokenizers are typically learned from corpora dominated by English. When they encounter Devanagari script for Hindi, or the agglutinative morphology of Filipino, they split words into more and smaller fragments, fall back on unknown or byte-level tokens more often, and degrade the quality of the perplexity and embedding calculations that follow. A detection system with a poor tokenization stage cannot produce reliable output, regardless of how sophisticated its classifier is.

Miscalibrated Perplexity Thresholds

Perplexity scores are not absolute. They depend on the language model used and on the distribution of writing in the calibration data. A threshold of "perplexity below X is likely AI" that works for English prose does not automatically translate to Spanish academic writing, which naturally yields different baseline perplexity scores due to the language's distinct syntactic and lexical properties. Applying English-calibrated thresholds to non-English text produces both false positives, flagging genuine human writing as AI, and false negatives, missing actual AI output. Tools that bypass AI detectors are most effective in this environment because they directly target the statistical properties being measured, rather than relying on the detector to apply sensible thresholds to the language.
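To make the calibration point concrete, here is a minimal sketch in Python. It assumes perplexity is computed in the standard way, as the exponential of the mean negative log-probability per token, and that a detector sets its cutoff at a low percentile of known human-written text. The sample values and the `calibrate_threshold` and `flagged_share` helpers are hypothetical and are not taken from any real detector.

```python
import math

def perplexity(token_logprobs):
    """Perplexity = exp of the mean negative log-probability per token."""
    return math.exp(-sum(token_logprobs) / len(token_logprobs))

def calibrate_threshold(human_perplexities, percentile=5):
    """Pick a cutoff so that roughly `percentile` percent of known
    human-written texts score below it (hypothetical calibration rule)."""
    ranked = sorted(human_perplexities)
    index = len(ranked) * percentile // 100
    return ranked[index]

def flagged_share(perplexities, cutoff):
    """Share of texts scoring below the cutoff, i.e. flagged as likely AI."""
    return sum(p < cutoff for p in perplexities) / len(perplexities)

# Made-up perplexities of human-written texts, for illustration only.
english_human = [float(v) for v in range(40, 140, 5)]  # 40, 45, ..., 135
other_human = [float(v) for v in range(20, 120, 5)]    # 20, 25, ..., 115

english_cutoff = calibrate_threshold(english_human)
own_cutoff = calibrate_threshold(other_human)

print(flagged_share(other_human, english_cutoff))  # English-derived cutoff
print(flagged_share(other_human, own_cutoff))      # language-specific cutoff
```

With this toy data, the English-derived cutoff flags 25 percent of the other language's human-written texts, while a cutoff calibrated on that language flags 5 percent. The numbers are invented; the point is only that the same threshold behaves differently when the baseline distribution shifts.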

Spanish: The Best-Supported Non-English Language

Spanish is the second most widely spoken language in the world by native speakers, and it receives by far the most attention from detection tool developers after English. The result is that Spanish detection is meaningfully better than that for other non-English languages, though it is still noticeably behind English.

Accuracy Benchmarks for Spanish

The most cited multilingual benchmark reported F1 scores of 0.87 for Spanish and 0.95 for English, a gap of 8 points. F1 combines precision and recall, so the figure does not translate directly into a miss rate. In practical terms, it means that more Spanish AI-generated text escapes detection, and more genuine human writing is falsely flagged, than in English. GPTZero reports 82 percent accuracy for Spanish and French under independent testing conditions, while Originality.ai's multilingual model reports 97.8 percent across 30 languages, including Spanish. Pangram, a multilingual AI detector, claims 99 percent accuracy across more than 20 languages, including Spanish, with its false-positive rate tested independently on ESL datasets.
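Because F1 is the harmonic mean of precision and recall, one F1 score can correspond to quite different miss rates. The sketch below uses two hypothetical detectors with invented confusion-matrix counts; neither represents a real tool or the benchmark's actual data.

```python
def f1_from_counts(true_pos, false_pos, false_neg):
    """F1 is the harmonic mean of precision and recall."""
    precision = true_pos / (true_pos + false_pos)
    recall = true_pos / (true_pos + false_neg)
    return 2 * precision * recall / (precision + recall)

# Two hypothetical detectors, each evaluated on 1,000 AI-generated texts.
# Detector A misses 130 AI texts and wrongly flags 130 human texts.
balanced = f1_from_counts(true_pos=870, false_pos=130, false_neg=130)
# Detector B misses only 43 AI texts but wrongly flags 243 human texts.
aggressive = f1_from_counts(true_pos=957, false_pos=243, false_neg=43)

print(round(balanced, 2), round(aggressive, 2))
```

Both detectors land at an F1 of 0.87, yet the first misses 13 percent of AI texts and the second misses 4.3 percent while flagging far more human writing. A published F1 score alone does not say which of these situations a writer is facing.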

Why Spanish Performs Relatively Well

Spanish benefits from several structural advantages. It uses the Latin alphabet, which means standard tokenizers work reasonably well without major modification. It has a large presence on the internet, providing richer training data than most other non-English languages. Its grammatical structure, while different from English, shares enough ancestry that models built on English data can transfer some learning across languages. Major detection platforms have also explicitly invested in Spanish support because their core markets in the US, Latin America, and Spain demand it.

Where Spanish Detection Still Falls Short

The gap remains in two areas. First, regional variation. Spanish written in Mexico differs from Spanish written in Argentina or Spain in vocabulary, idiom, and even some grammatical constructions. A model trained primarily on Spanish from one region can misclassify authentic writing from another. Second, academic and technical Spanish. Formal academic prose in any language tends to score lower perplexity because it uses predictable disciplinary vocabulary. Spanish academic writers, like all formal writers in non-English languages, face elevated false positive rates when detectors miscalibrate their thresholds for the language.

Tagalog and Filipino: A Low-Resource Language Under Pressure

Tagalog, also known as Filipino in its standardized form, is the national language of the Philippines and is spoken by roughly 82 million people. It is also, by NLP standards, a low-resource language. That classification is not about the language's richness or its speakers' sophistication. It specifically refers to the scarcity of digitized, annotated data available to train machine learning systems. And in 2026, the consequences of that scarcity are acute.

Research Finding: A 2025 IEEE-published study on detecting AI-generated Filipino news articles tested seven large language models on the classification task of distinguishing human-written from AI-generated Tagalog text. The results showed accuracy scores of only 0.27 to 0.47, with five of the seven models heavily predicting all texts as human-written. The researchers concluded that entirely new or substantially improved detection strategies are needed for low-resource languages like Filipino.

The Filipino AI detection study is among the first peer-reviewed studies to benchmark AI detection specifically on Tagalog text, and its findings are stark. An accuracy of 0.27 to 0.47 is no better than random guessing: on a balanced two-class task, a coin flip would score about 0.50. For Filipino students and writers, this means two things simultaneously: AI-generated Tagalog text is very likely to slip past detectors unnoticed, and genuine human-written Tagalog text may be flagged at elevated rates when detectors default to classifying uncertain inputs as AI.
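A quick way to put those accuracy figures in context is to compare them with a trivial baseline. The sketch below assumes a balanced test set of 50 human-written and 50 AI-generated articles; that balance is an assumption made for illustration, since the class split of the study's dataset is not given here.

```python
def accuracy(predictions, labels):
    """Fraction of predictions that match the true labels."""
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)

# Hypothetical balanced test set: 50 human-written, 50 AI-generated articles.
labels = ["human"] * 50 + ["ai"] * 50

# A trivial "detector" that labels every text as human-written.
always_human = ["human"] * len(labels)

baseline = accuracy(always_human, labels)
print(baseline)
```

On a balanced set like this one, a detector that never flags anything still scores 0.5. Reported accuracies of 0.27 to 0.47 would sit below that trivial baseline, which is why accuracy figures need to be read alongside the class balance of the test data.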

The Code-Switching Problem

Filipino writing in the real world rarely stays cleanly in Tagalog. Code-switching between Tagalog and English, called Taglish, is standard in online content, academic work, social media, and everyday communication. A single paragraph might contain Filipino sentence structure with English technical terms, English phrases embedded in Filipino clauses, and Filipino morphological endings attached to English roots. This is natural, fluent Filipino writing. It is also something detection models have almost no training data for.

When a detector encounters Taglish content, it lacks a reliable framework for evaluating it. The perplexity calculation breaks down because the model does not know whether to apply English or Filipino baselines. The result is unpredictable classification that can swing from false positive to false negative depending on small differences in the amount of English in a given passage. Using humanized AI writing tools that adjust statistical properties at the text level, rather than relying on language-specific calibrations, can provide more consistent results for Taglish writers than language-specific detectors that simply are not built for the way Filipino people actually write.

Winston AI and Tagalog Support

Of the major general-purpose detection platforms, Winston AI explicitly lists Tagalog as a supported detection language alongside English, Spanish, French, and several others. This makes it currently one of the few mainstream options for Filipino-language detection. However, Winston AI's support is relatively recent and has not yet been independently benchmarked against a rigorous Filipino corpus, as English detection has been tested. Claims of high accuracy on a language with minimal annotated validation data should be treated carefully until independent research confirms them.

Hindi: Script Challenges and Moderate Detection Accuracy

Hindi is spoken by roughly 600 million people, making it one of the most spoken languages in the world. It is also written in Devanagari, a script that presents specific technical challenges for the NLP pipelines that power AI detection systems.

The Devanagari Script Challenge

Devanagari is an abugida script, which means each character represents a consonant with an inherent vowel sound that can be modified by diacritical marks. This is fundamentally different from the alphabetic structure of Latin-script languages. Byte-Pair Encoding and WordPiece tokenizers, whose vocabularies are usually learned from corpora dominated by Latin-script text, handle Devanagari less efficiently. They produce more tokens per word, break words into fragments that carry little meaning, and introduce noise that compounds through every downstream NLP operation, including perplexity scoring, embedding generation, and classifier predictions.
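One reason for the extra tokens can be seen before any tokenizer runs. Byte-level tokenizers start from UTF-8 bytes and rely on learned merges to compress them, so a script with few learned merges stays close to its raw byte count. The sketch below only inspects that raw starting point with Python's standard library; it does not reproduce the behavior of any specific tokenizer, and `script_profile` is a hypothetical helper.

```python
import unicodedata

def script_profile(word):
    """Count code points, UTF-8 bytes, and combining marks in a word."""
    marks = sum(unicodedata.category(ch).startswith("M") for ch in word)
    return {
        "code_points": len(word),
        "utf8_bytes": len(word.encode("utf-8")),
        "combining_marks": marks,
    }

latin = script_profile("hello")
# "namaste" in Devanagari, written with escapes so the source stays ASCII-safe.
devanagari = script_profile("\u0928\u092e\u0938\u094d\u0924\u0947")

print(latin)
print(devanagari)
```

The Latin word occupies 5 bytes, while the six-code-point Devanagari word occupies 18 bytes and includes two combining marks (a virama and a vowel sign) that attach to neighboring consonants. A tokenizer with few Devanagari merges in its vocabulary has to represent that word with many more pieces than an English word of similar length.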

A research study presented at EMNLP 2024 specifically investigated AI-generated text detection for Hindi using the RADAR detector. The results showed consistently low accuracy and F1 scores, leading the researchers to conclude that RADAR has significant difficulty in accurately identifying AI-generated Hindi text. This aligns with the broader literature, which shows that general-purpose English detectors lose substantial capability when applied to Devanagari-script languages without specific retraining. In 2025, Originality.ai added Hindi to the languages supported by its multilingual 2.0.0 model, alongside Korean, Ukrainian, and others, and reports an overall multilingual accuracy of 97.8 percent. However, the company's English-only models still outperform the multilingual version, an indication that Hindi detection remains harder than English.

Accuracy Estimates for Hindi

Practical accuracy estimates for Hindi AI detection currently cluster in the 75-85% range under optimal conditions, defined as unedited AI text submitted to a properly calibrated multilingual model. This drops noticeably for edited content, formal academic Hindi, and writing that mixes Hindi with English technical terms. OpenAI's own detection tool was found in a 2023 study to show linguistic bias and low accuracy on non-English texts, including Hindi and Arabic, reflecting the general English-first problem of the first generation of AI detection tools.

The Formal Academic Hindi Problem

Hindi used in academic, legal, and government contexts employs a more Sanskritized register called Shuddh Hindi, which uses vocabulary derived from Sanskrit rather than everyday colloquial Hindi. This formal register is highly predictable and structured, producing naturally low perplexity scores that detectors associate with AI output. Hindi scholars and students writing in a formal academic register face the same structural false positive problem that affects academic writers in every language, but it is compounded by the fact that Hindi-calibrated detection thresholds are less precisely tuned than English ones. Tools that beat AI detectors by targeting the underlying statistical properties, rather than language-specific calibrations, are more reliably effective for Hindi writers than detectors that may be applying poorly calibrated Hindi thresholds.

The ESL False Positive Crisis: Writing in English as a Second Language

There is a scenario worse than using a detector that was never properly calibrated for your language: writing in English as a second language and being evaluated by a detector that was built for native English speakers. This is the situation facing millions of international students, researchers, and professionals every day.

The mechanism is straightforward. Non-native English writers produce text with lower lexical richness, reduced syntactic diversity, and more grammatical predictability than native English writers. They learn formal academic phrases, apply them consistently, and avoid the idiomatic variation that native speakers use naturally. Every one of those characteristics, the consistent vocabulary, the structured sentences, the reliance on learned phrase patterns, registers as low perplexity to an AI detector. The detector interprets predictable, formally correct English writing as machine-generated output.
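Lexical richness can be approximated very crudely with a type-token ratio: unique words divided by total words. The sketch below is an illustrative proxy only. It is sensitive to text length, the two sample passages are invented, and it is not the measure used in any particular study or detector.

```python
import re

def type_token_ratio(text):
    """Unique words divided by total words; lower means more repetition."""
    words = re.findall(r"\w+", text.lower())
    return len(set(words)) / len(words) if words else 0.0

# Invented sample passages, for illustration only.
repetitive = (
    "The study shows that the method is good. "
    "The study shows that the result is good."
)
varied = (
    "Our pilot hints at promise. "
    "Later trials, however, muddied that early optimism."
)

print(type_token_ratio(repetitive))
print(type_token_ratio(varied))
```

The first passage reuses the same learned phrase pattern and scores 0.5, while the second scores 1.0. Both are plausible human writing; the lower score reflects consistency, not machine authorship, which is the distinction perplexity-based detectors struggle to make.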

Core Finding: The Stanford Liang et al. study tested seven widely used GPT detectors on writing samples from native and non-native English writers. Detectors were near-perfect on US eighth-grade essays. On TOEFL essays written by non-native English speakers, more than 61 percent were classified as AI-generated. The researchers also analyzed 1,574 accepted papers from the ICLR 2023 machine learning conference and found that papers by first authors from non-native English-speaking countries showed significantly lower text perplexity than papers from native English-speaking authors, even after controlling for paper quality.

ESL detection bias has been extensively documented in the literature. Pangram tested its own detector against the same TOEFL dataset used in the Stanford study and found a false positive rate of 0.032 percent, compared to Turnitin's far higher rate on the same dataset. This shows that bias is not inevitable across all tools, but varies dramatically by platform. For writers who do not know which tool their institution uses or how it has been calibrated, the risk of a false accusation remains real. Writers can reduce that risk by introducing controlled variation in vocabulary, sentence length, and structural patterns before submission. This is not about disguising who wrote the text; it is about shifting the statistical profile of genuine human writing toward the range detectors expect.

Language-by-Language Accuracy Comparison

The table below consolidates available accuracy data across languages and detection scenarios. Figures are drawn from published benchmark studies, platform documentation, and independent testing reports. All figures reflect conditions for 2025 to 2026 using current detection tools.

| Language | Estimated Accuracy (Unedited AI Text) | Key Challenges |
| --- | --- | --- |
| English (native) | 90 to 99% | Benchmark standard; highest training data quality |
| English (non-native / ESL) | 30 to 70% correct classification | Stanford study: 61%+ of ESL human text flagged as AI |
| Spanish | 80 to 95% | Best-supported non-English; regional variation gaps remain |
| French | 78 to 92% | Similar to Spanish; the Latin-script advantage applies |
| Chinese (Simplified) | 70 to 82% | Character-based script; 13-point F1 gap vs English in benchmarks |
| Arabic | 65 to 80% | OpenAI's tool showed low accuracy; Arabic script challenges |
| Hindi | 75 to 85% | Devanagari script tokenization; formal register false positives |
| Tagalog / Filipino | 27 to 55% | Low-resource language; 2025 IEEE study: 0.27-0.47 accuracy on 7 LLMs |
| Other low-resource languages | Often unsupported | Minimal training data; most tools not calibrated |

These are general ranges. Individual tool performance varies significantly. Using an AI content humanizer to adjust the statistical profile of your text before submission reduces reliance on whether the specific tool your institution uses has been properly calibrated for your language.

Code-Switching: The Problem No Detector Has Solved

Multilingual speakers do not write in clean, compartmentalized languages. They code-switch. A Filipino student writing an essay may produce sentences that weave Tagalog and English together mid-clause. A Spanish-speaking researcher might embed English technical terms throughout otherwise Spanish prose. An Indian academic might write in English but use Hindi transliterations for culturally specific concepts. This is not sloppy writing. It is natural, competent multilingual communication.

It is also something AI detection tools are almost completely unprepared for. Filipino's low-resource status in NLP is partly a code-switching problem: the data available to train Filipino language models is scarce because much Filipino content online mixes languages in ways that do not cleanly fit into monolingual training pipelines. The same dynamic affects detection. A detector calibrated on either pure Tagalog or pure English has no framework for text that is both Tagalog and English.

The practical effect is unpredictable detection results. Code-switched text might be classified under whichever language baseline the detector defaults to, or it might confuse the model entirely, leading to a high AI probability score simply because the mixed-language input is unfamiliar. Using a free AI humanizer to stabilize the statistical properties of code-switched writing before submission is a practical response to a system that has not yet built reliable multilingual detection capabilities.

What Multilingual Writers Can Do Right Now

The multilingual detection gap is a structural problem that individual writers cannot fix. But there are practical steps that reduce the risk of false accusations and improve the fairness of any review process that does flag your work.

Know Which Tool Your Institution Uses

Different tools have very different multilingual performance profiles. Winston AI explicitly supports Tagalog. Originality.ai's 2.0.0 model supports 30 languages, including Hindi. Pangram has specifically invested in reducing ESL false positives. Turnitin's multilingual accuracy is less consistently documented. If you are writing in Spanish, Tagalog, or Hindi, finding out which platform your school or publisher uses and looking up its specific language support is worth doing before you submit.

Document Your Writing Process

A draft history saved in version control, research notes, and annotated sources are the clearest evidence that your work is genuinely yours. An AI cannot produce a revision history showing organic development across multiple sessions. This documentation is your strongest protection if detection flags your work, regardless of language. According to Stanford professor James Zou, the bias of AI detectors against ESL writers means that practitioners should exercise caution before using low perplexity as an indicator of AI-generated text in contexts with high numbers of non-native English speakers. That caution cuts both ways: writers can invoke this documented bias in any appeal.

Adjust Statistical Properties Before Submission

A detection tool that scores your writing poorly is measuring specific statistical properties: perplexity, burstiness, phrase repetition, and stylometric patterns. These properties can be adjusted. Varying sentence length deliberately, introducing personal voice and specific observations, diversifying vocabulary within a passage, and breaking up overly consistent paragraph structures all shift the statistical profile of your text toward what detectors expect from human writers. This is especially valuable for ESL writers, whose natural writing style produces low-perplexity text through no fault of their own.
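Burstiness has no single agreed definition, and commercial detectors do not publish theirs. One common informal proxy is the variation in sentence length, which the sketch below measures as the coefficient of variation (standard deviation divided by mean). The `burstiness` function and sample passages are hypothetical illustrations, not a reconstruction of any detector's scoring.

```python
import re
import statistics

def sentence_lengths(text):
    """Word counts per sentence; splits on . ! ? and the Devanagari danda."""
    sentences = [s for s in re.split(r"[.!?\u0964]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]

def burstiness(text):
    """Coefficient of variation of sentence length (std dev / mean)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)

# Invented sample passages, for illustration only.
uniform = (
    "The tool reads the text. The tool scores the text. "
    "The tool flags the text."
)
varied = (
    "It failed. Nobody on the review panel could explain why "
    "the score had jumped overnight. Odd."
)

print(burstiness(uniform))
print(burstiness(varied))
```

The uniform passage, with three five-word sentences, scores 0.0, while the passage that mixes very short and long sentences scores above 1. Checking a draft this way gives a rough sense of whether sentence rhythm is unusually even before any manual revision.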

For writers who want a systematic approach rather than manual editing, running text through a tool designed to produce undetectable AI text characteristics addresses the statistical layer directly. When your work is entirely genuine, this is a legitimate protective measure against a detection system that has not been built to recognize how you write.

Protecting Your Work as a Multilingual Writer

AI detection tools are not going away. The institutions deploying them are not waiting for multilingual accuracy to improve before using them in consequential decisions. That gap between the tool's capabilities and its deployment creates a real and ongoing risk for writers working in Spanish, Tagalog, Hindi, and dozens of other non-English languages. Here is how to manage it practically.

Understand the Perplexity Gap for Your Language

If you write in Spanish, your baseline perplexity range differs from English. If you write in formal Hindi or in Taglish, the detectors you face were almost certainly not calibrated on writing like yours. Knowing this does not solve the problem, but it gives you the language to articulate the issue if your work is flagged: the detection tool has documented accuracy limitations in your language and cannot be used as standalone evidence of AI use.

Use Tools That Were Designed for Multilingual Writers

Not all humanization tools are equal. Some are built specifically on English-language statistical models and produce transformations optimized for English perplexity and burstiness profiles. A multilingual writer needs a tool that adjusts statistical properties to account for language-specific patterns, or that operates at a level of text structure general enough to improve detection outcomes across languages. Test the tool on your specific language before submitting important work.

Make the Bias Argument When You Appeal

The Stanford study, the IEEE Filipino detection benchmark, and the EMNLP Hindi detection research are all published, peer-reviewed evidence that current AI detectors perform poorly on non-English writing. If your institution uses a detection tool to support a misconduct case against you and you wrote in Spanish, Tagalog, or Hindi, these studies are your evidence. They establish that the tool's output is unreliable for your language and cannot serve as standalone proof of anything. Processing your text to match expected human statistical profiles before submission is one approach. Building a solid documentation trail and understanding your rights under the academic integrity policy is another. Both are worth doing together.

Conclusion

AI detectors do not work equally across languages. Spanish detection is meaningfully below English. Hindi detection faces script-level technical problems that English-trained models have never had to solve. Tagalog detection does not rise above chance in independent benchmarks. Non-native English writers face the additional indignity of being penalized by a system that interprets their careful, formal English prose as machine output. None of this will be fixed quickly. The training data gaps are real and will take years to close. In the meantime, multilingual writers can protect themselves by understanding which tools are being used against them, documenting their process thoroughly, and using tools that help detection systems recognize their genuine writing as human, regardless of the language.

Frequently Asked Questions

Do AI detectors work accurately in Spanish?

Spanish is the best-supported non-English language for AI detection, but it still underperforms English. Independent benchmarks show F1 scores of around 0.87 for Spanish compared to 0.95 for English. GPTZero reports approximately 82% accuracy in Spanish in independent testing. Major platforms like Originality.ai and Pangram have invested in Spanish support and report higher internal accuracy figures. The main weaknesses are regional vocabulary variation across Spanish-speaking countries and elevated false-positive rates for formal academic Spanish, which naturally produces low-perplexity text that detectors associate with AI output.

Why do AI detectors fail on Tagalog and Filipino text?

Tagalog is a low-resource language in NLP terms, meaning there is very limited annotated training data available for detection models to learn from. A 2025 IEEE-published study testing seven large language models on Filipino news classification found accuracy scores of only 0.27-0.47, no better than chance. Code-switching between Tagalog and English, called Taglish, creates additional problems because no existing detector has been properly calibrated for mixed-language content. Winston AI lists Tagalog as a supported language, but independent benchmarking on rigorous Filipino corpora is still limited.

How accurate is AI detection for Hindi?

Hindi AI detection accuracy is estimated at 75-85% under optimal conditions, well below English detection rates. The primary technical challenge is the Devanagari script, which creates tokenization problems that degrade the entire NLP pipeline. The EMNLP 2024 study on Hindi AI detection found that the RADAR detector showed consistently low accuracy and F1 scores. Formal academic Hindi uses a Sanskritized vocabulary register that yields naturally low perplexity, leading to elevated false-positive rates in genuine human writing. In 2025, Originality.ai's 2.0.0 model added Hindi to its supported languages, but its English-only model still outperforms the multilingual version.

Why are non-native English writers falsely flagged as AI?

Non-native English writers produce text with lower lexical richness and more predictable grammatical structures than native English speakers. This is a natural result of learning a language through formal instruction rather than immersion. AI detectors interpret low-perplexity, predictable text as machine-generated because language models also produce predictable, statistically expected word choices. The Stanford Liang et al. study found that more than 61 percent of TOEFL essays by non-native English speakers were classified as AI-generated by seven widely used detectors, while US eighth-grade essays achieved near-perfect classification. Using an AI text transformer that introduces controlled variation in vocabulary and sentence structure can shift genuine human writing into the statistical range that detectors expect, protecting writers from unfair false-positive accusations.

What can multilingual writers do to avoid AI detection false positives?

Four practical steps help. First, identify which specific detection tool your institution uses and verify its documented accuracy for your language. Second, save draft histories, research notes, and annotated sources as evidence of authentic authorship. Third, introduce deliberate variation in sentence length, vocabulary, and structural patterns to raise perplexity and burstiness scores toward what detectors expect from human writers. Fourth, understand the published research showing that current detection tools perform poorly on non-English writing, and be prepared to cite that research if your work is challenged. The Stanford study, the IEEE Filipino benchmark, and the EMNLP Hindi research are all peer-reviewed and publicly available, providing documented grounds for any appeal.