If you are an ESL student or a professional who writes in English as a second language, you face a risk that most native English writers do not: a significantly higher chance of having your genuinely human-authored writing falsely flagged as AI-generated. This is not a random or incidental problem. It is a documented, structural bias in how AI detection technology works, and it disproportionately affects millions of international students, ESL learners, and non-native English professionals worldwide. Stanford University research (Liang et al., 2023) found that while AI detectors achieved near-perfect accuracy on essays written by native-English US students, they incorrectly flagged, on average, more than 61% of essays written by non-native English speakers as AI-generated. That figure, more than three out of every five ESL essays misidentified as machine-written, represents one of the most significant documented fairness failures in current AI detection technology.

This guide is written for ESL writers in academic and professional settings where AI detection systems are used. It covers why ESL writing triggers AI detectors, which features of your writing cause false positives, practical techniques that reduce false positives without sacrificing your voice or academic honesty, how to document your writing process so you are protected if you are flagged, and how to respond to and appeal a false AI-detection accusation. Your writing is your own. This guide can help you prove it.

Key Takeaways

The ESL false positive problem is structural, not accidental. AI detectors measure statistical properties of text, primarily perplexity (how predictable each word is) and burstiness (how much sentence rhythm varies), and both tend to be lower in ESL writing than in fluent native English writing. Research on why detectors mislabel ESL essays points to specific linguistic features: non-native writers often use simpler vocabulary, shorter sentence structures, and formulaic transitions because these are linguistically effective, and those same characteristics are exactly what detectors associate with machine generation.

A 2026 follow-up to the Stanford study found that “the average false positive rate of 61.3% for essays written by Chinese TOEFL examinees far surpasses the 5.1% average false positive rate for essays written by US student examinees. This represents a 12-fold difference in false positive rates based solely on writer background.” This is not an isolated finding: several independent studies have reported elevated false positive rates for non-native English writers across the major detection tools.
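The "12-fold" figure is the ratio of the two average false positive rates quoted above. Expressed as an absolute gap, the same two numbers differ by about 56 percentage points:

```latex
\frac{61.3\%}{5.1\%} \approx 12.0
\qquad\text{and}\qquad
61.3\% - 5.1\% = 56.2\ \text{percentage points}
```

Keeping the ratio and the percentage-point gap apart helps when comparing figures from different detectors, since the two are not interchangeable.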

The best way to avoid a false AI-detection finding is not to imitate native English writing. It is to document your process in enough detail that your authorship is defensible whatever a detector concludes about your style. Timestamped drafts, research materials, and revision history are stronger evidence of authorship than any argument against your detection score.

Certain writing habits that are completely appropriate for ESL writers can be adjusted, not to 'game' detection, but to produce more varied, engaging writing that also happens to reduce the risk of false positives. Varying sentence length, relying less on formulaic transitions, and adding specific, concrete details are good academic writing practices that also disrupt the statistical uniformity detectors flag. Writing tools that help you produce more natural-sounding text in your own voice can assist with this process, but the most important step is always developing genuine writing practice, not circumventing detection systems.

If you receive a false AI detection flag, you have rights. Most institutions' academic integrity procedures require them to share the evidence, allow you to respond, and review the flag with human judgment rather than treating a detection score as an automatic verdict. A documented writing process, combined with knowledge of your institution's appeal procedure, is your most powerful defense.

Why AI Detectors Flag ESL Writing

The Core Problem: AI detectors measure how statistically predictable text is. ESL writers often produce statistically predictable text, not because they used AI, but because they write with more common vocabulary, more consistent sentence structures, and more formulaic transitions than fluent native writers. These characteristics are the result of their language-learning journey, not evidence of machine generation.

To understand why ESL writing sets off AI detectors, you need to understand what detectors actually measure. Most are designed around two statistical features: perplexity, which measures how predictable each word choice is given the preceding context, and burstiness, which measures how much sentence structure and length vary across the document. Text generated by language models tends to have low perplexity (highly predictable word choices) and low burstiness (a highly consistent structure). Human writing, in theory, has higher perplexity (less predictable word choices) and higher burstiness (more varied sentences). Analyses of how detectors work confirm that ESL writers score lower on both measures, not because their writing is less human, but because their language-learning history produces writing patterns that overlap with the statistical profile of AI-generated text.
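To make the two measures concrete, here is a minimal sketch built on simplified stand-ins. Burstiness is taken as the coefficient of variation (standard deviation divided by mean) of sentence lengths, and perplexity as exp(−(1/N) Σ log p(wᵢ)) under a toy add-one-smoothed unigram model. Real detectors compute word probabilities with large language models that condition on the preceding context, and their exact formulas are not public. The function names below are hypothetical.

```python
import math
import re
from collections import Counter


def split_sentences(text: str) -> list[str]:
    # Naive splitter: a sentence ends at ".", "!" or "?" followed by whitespace.
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def tokenize(text: str) -> list[str]:
    # Lowercase words, keeping apostrophes so "don't" stays one token.
    return re.findall(r"[a-z']+", text.lower())


def burstiness(text: str) -> float:
    """Coefficient of variation (std / mean) of sentence lengths in words.

    0.0 means every sentence has the same length; larger means more varied."""
    lengths = [len(s.split()) for s in split_sentences(text)]
    if len(lengths) < 2:
        return 0.0
    mean = sum(lengths) / len(lengths)
    variance = sum((n - mean) ** 2 for n in lengths) / len(lengths)
    return math.sqrt(variance) / mean


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under a toy add-one-smoothed unigram model built from
    `reference`: exp(-(1/N) * sum(log p(w))). Lower means more predictable words."""
    counts = Counter(tokenize(reference))
    total = sum(counts.values())
    vocab_size = len(counts) + 1  # +1 reserves probability mass for unseen words
    words = tokenize(text)
    if not words:
        raise ValueError("text contains no words")
    log_prob = sum(math.log((counts[w] + 1) / (total + vocab_size)) for w in words)
    return math.exp(-log_prob / len(words))
```

On four four-word sentences, `burstiness` returns 0.0; a paragraph mixing a 2-word, a 21-word, and a 1-word sentence scores about 1.15. Words that appear often in the reference text get lower perplexity than words the model has never seen, which mirrors why common vocabulary reads as "predictable".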

This unfairness is compounded by the training data. Most AI detection tools were trained predominantly on native English text, which tends to be more idiomatic, more varied, and less statistically predictable than the precise, grammatically careful English that many ESL students write. When a tool compares a text against its internal notion of 'what human-written text looks like', it is effectively comparing it against native English. ESL text that does not match that standard gets pushed into the other category: AI-generated.

The Seven ESL Writing Characteristics That Trigger Detection

| ESL Writing Characteristic | Why Detectors Flag It | How Common It Is |
|---|---|---|
| Simpler, more common vocabulary | Lower perplexity: words are statistically more predictable, which detectors associate with AI generation | Very common; affects most ESL writers across all proficiency levels |
| Shorter, more uniform sentence structures | Low burstiness: consistent sentence length resembles the uniform rhythm of AI output | Common; particularly pronounced for writers from educational systems that emphasise concise, structured writing |
| Formulaic academic transitions ('In addition', 'Furthermore', 'In conclusion') | N-gram overrepresentation: these phrases are disproportionately common in both ESL writing and AI output | Widespread; directly taught in many ESL academic writing programmes |
| Formal, grammatically correct but less idiomatic phrasing | Because they are trained largely on native English corpora, detectors associate high grammatical regularity with machine generation | Common among writers who learned English through formal instruction, who tend to write more uniformly than native speakers |
| Direct, explicit topic sentences | Cultural writing norms from East Asian, Middle Eastern, and other traditions emphasise a directness that detectors interpret as AI uniformity | Documented in cross-cultural writing research; affects millions of international students |
| Reduced use of contractions and colloquial language | A formal register maintained throughout: native speakers naturally mix registers, while ESL writers may not | Common at intermediate and upper-intermediate proficiency levels |
| Limited use of idioms and figurative language | Low lexical diversity in idiomatic expressions; AI models also tend to avoid idioms and cultural references | Affects writers at all proficiency levels; particularly pronounced in academic contexts |

Writing Techniques That Reduce False Positive Risk

The methods that follow are not about tricking the system or avoiding detection. They are standard principles of good academic writing that, applied consistently, also reduce the statistical uniformity these systems pick up on. Using them will make your writing better for human readers and, as a secondary effect, less likely to be mistaken for machine-generated text.

Technique 1: Vary Your Sentence Length Deliberately

This is one of the most effective techniques for reducing the risk of a false positive in ESL writing. Detection software measures 'burstiness', the variation in sentence length, and ESL writing often has too little of it: sentences tend to be medium length and grammatically correct throughout. The key is to introduce variation. Use a short sentence for emphasis, then a long sentence to develop the idea, then a medium sentence to transition. This breaks the pattern of low burstiness. A good rule of thumb: in any paragraph of four or more sentences, include at least one sentence of fewer than ten words and at least one of more than twenty-five words. The short sentence can be a direct statement, a brief imperative, or a rhetorical question: 'This matters.' 'The data suggests otherwise.' 'Consider the evidence.' 'Why does this matter?' These are easy and effective short sentences. You don't need to force it. Just let your thoughts vary in length.
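The rule of thumb above is easy to check mechanically. This is a minimal sketch, assuming a naive sentence splitter and whitespace word counts, so abbreviations such as 'e.g.' will confuse it; `meets_length_rule` is a hypothetical helper, not part of any detector.

```python
import re


def split_sentences(paragraph: str) -> list[str]:
    # Naive splitter: a sentence ends at ".", "!" or "?" followed by whitespace.
    return [s for s in re.split(r"(?<=[.!?])\s+", paragraph.strip()) if s]


def meets_length_rule(paragraph: str, short_max: int = 10, long_min: int = 25) -> bool:
    """Check the rule of thumb: a paragraph of four or more sentences should
    contain at least one sentence of fewer than `short_max` words and at least
    one of more than `long_min` words. Shorter paragraphs pass automatically."""
    lengths = [len(s.split()) for s in split_sentences(paragraph)]
    if len(lengths) < 4:
        return True
    has_short = any(n < short_max for n in lengths)
    has_long = any(n > long_min for n in lengths)
    return has_short and has_long
```

A paragraph of four twelve-word sentences fails the check; adding one two-word sentence and one twenty-six-word sentence makes it pass. Treat a failure as a prompt to reread the paragraph, not as an instruction to pad it.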

Technique 2: Replace Formulaic Transitions with Logic-Driven Connectors

Phrases like 'Furthermore', 'In addition', 'Moreover', 'On the other hand', and 'In conclusion' are part of any ESL academic writing lesson plan, but they also occur with high frequency in AI-generated text. This is not a coincidence: language models were trained on large amounts of the same kind of academic writing that ESL courses use as models, so it is not surprising that these phrases have become part of what detection tools recognize. Replacing formulaic connectors with logic-driven ones does not weaken your work; it strengthens it. Instead of 'Furthermore, the data shows this', write 'The data also confirms this.' Instead of 'In conclusion, it is clear that these three factors explain why...', write 'These three factors explain why...'. Instead of 'On the other hand', rewrite the sentences so that the word choice itself carries the contrast: 'Early studies confirmed this. More recent studies found something else.' Logic-driven connectors demonstrate the analytical thinking that formulaic connectors only claim.
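To see how heavily a draft leans on these phrases, you can count them. A minimal sketch, assuming the list below (taken from the transitions named above, not from any detector's actual list); `count_transitions` is a hypothetical helper.

```python
import re

# Illustrative list: the transitions named in the text, not any detector's actual list.
FORMULAIC_TRANSITIONS = ("furthermore", "in addition", "moreover", "on the other hand", "in conclusion")


def count_transitions(text: str) -> dict[str, int]:
    """Count case-insensitive whole-phrase occurrences of each formulaic transition,
    returning only the phrases that actually appear."""
    lowered = text.lower()
    counts = {}
    for phrase in FORMULAIC_TRANSITIONS:
        hits = len(re.findall(r"\b" + re.escape(phrase) + r"\b", lowered))
        if hits:
            counts[phrase] = hits
    return counts
```

For "Furthermore, the data is clear. In addition, the method works. Furthermore, costs fell." it returns `{"furthermore": 2, "in addition": 1}`, pointing to the sentences worth rewriting with logic-driven connectors.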

Technique 3: Add Specific Concrete Details

AI-generated text is characteristically abstract. It makes general claims with generic attributions: 'Many researchers have found...', 'Studies suggest that...', 'It is widely accepted that...'. A human writer who has actually engaged with the subject matter provides specifics: a researcher's name, a year of publication, a particular finding. Adding specifics does two things at once. It shows that you have engaged with the subject matter, and it introduces unpredictable, context-specific word choices that raise perplexity.

For every claim you make, you should be able to say what the specific evidence for it is. If you have written 'climate change is having serious effects on agriculture', you should be able to replace it with something like 'A 2025 FAO report found that wheat yields in South Asia have been reduced by an average of 6% per decade over the past three decades due to heat stress', with the figures taken from the source you actually read. Specific details work like a fingerprint: they show that a knowledgeable human wrote the text.

Technique 4: Include Your Own Perspective and Analysis

While AI may provide information, it does not have any opinion, personal experience, or true critical perspective. When you add your own analytical voice, i.e., what you think of the evidence, why you think one argument is more persuasive than another, and how your own experience or cultural background gives you a particular perspective on the issue, you are adding information that the AI simply does not have. This, of course, is also what sets good academic writing apart from summary writing, so you are making your writing more useful to your reader and less recognizable as AI-generated writing.

When writing in academic contexts where the use of the first-person voice is appropriate, "In my view, the most significant implication of this finding is...", "What strikes me as most interesting about this argument is...", "My experience with this issue suggests that..." are exactly the kind of personal, context-specific language that is lacking from AI writing. Even in contexts where the writing needs to be impersonal, you can add your analytical perspective through word choices that suggest evaluation, e.g., "The most compelling evidence is...", "The weakest assumption in this argument is...", "What this finding fails to consider is...".

Technique 5: Use Contractions Where Appropriate

One common trait of ESL writing is avoiding contractions such as 'it's', 'don't', 'can't', and 'we're' in favor of the full forms 'it is', 'do not', 'cannot', and 'we are', because ESL students are often taught that full forms are more appropriate in academic writing. In many academic essays and reports, however, an occasional contraction in narrative or discursive paragraphs is acceptable; check your instructor's or style guide's expectations first. Occasional contractions are also a natural feature of human writing that uniformly formal AI-generated academic text often lacks.

You do not need to contract every possible phrase. Adding contractions in various parts of your essay or report, especially in the discursive sections where you express your own opinions or arguments, is enough to introduce the variation that is characteristic of human writing. Read your final draft aloud and note the passages that do not sound natural. Those are often the passages that benefit most from a contraction or a change in rhythm.
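If you want a list of places to consider while rereading, a small scanner can find the four full forms named in the previous paragraph. This is a minimal sketch, assuming only those four pairs; `contraction_candidates` is a hypothetical helper, and it only lists candidates, leaving the decision about register to you.

```python
import re

# The four full forms named in the text, mapped to their contracted forms.
CONTRACTIONS = {"it is": "it's", "do not": "don't", "cannot": "can't", "we are": "we're"}

_PATTERN = re.compile(
    r"\b(" + "|".join(re.escape(full) for full in CONTRACTIONS) + r")\b",
    flags=re.IGNORECASE,
)


def contraction_candidates(text: str) -> list[tuple[str, str]]:
    """Return (full form as written, lowercase contraction) pairs in order of appearance.

    This only lists candidates; whether a contraction suits the register is your call."""
    return [(m.group(0), CONTRACTIONS[m.group(0).lower()]) for m in _PATTERN.finditer(text)]
```

For "It is clear that we do not need more data. We cannot wait." it returns `[("It is", "it's"), ("do not", "don't"), ("cannot", "can't")]`. Contracting all three would be excessive; one in a discursive passage is usually enough.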

Technique 6: Use Idiomatic Phrases and Cultural References Appropriately

ESL writers also tend to use less idiomatic language and fewer cultural references than native English writers, because idioms are difficult to understand and use correctly in a second language. This is perfectly logical, but idiomatic language is also part of what makes text unpredictable: an idiom is rarely the most likely word sequence that an optimising language model would select. When an idiomatic phrase you know well fits the context, use it. Don't force idioms you are unfamiliar with, but don't avoid familiar ones as a precaution either.

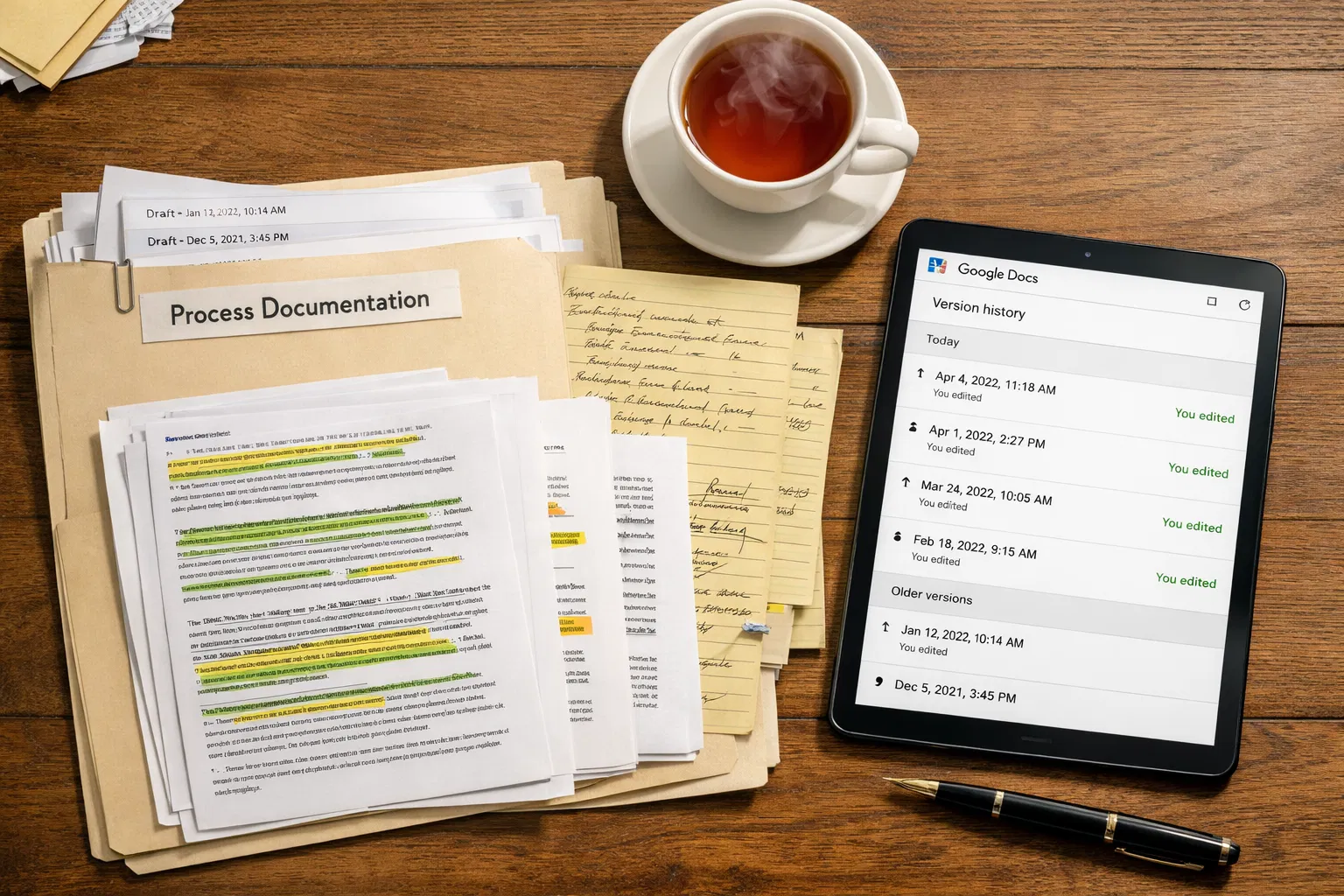

Building a Writing Process Record That Protects You

The most important protection against false AI detection is a documented writing process that creates verifiable evidence of authentic authorship. A detection score is a statistical estimate with a known error rate; your revision history, research notes, and drafts are evidence of a genuine writing process. In any fair institutional review, documented process evidence should outweigh a detection percentage. This holds for all writers, and particularly for ESL students. A timestamped version history is the primary evidence that resolves false-detection disputes, because it shows not only the final product but also the incremental human process that produced it.

Step 1: Write in Google Docs or Microsoft Word with Version History Enabled

Google Docs automatically saves your document's revisions with timestamps as you write. This creates a verifiable record showing that your document was developed incrementally over multiple sessions, rather than appearing fully formed in a single paste event. To access version history in Google Docs, go to File → Version history → See version history. You can name important versions (e.g., 'First draft', 'After feedback', 'Final edit') to make your process even clearer. Microsoft Word's Track Changes and version history features provide similar documentation (Word's version history is available for files saved to OneDrive or SharePoint). If your institution's submission portal does not preserve version history, write in Google Docs first, then paste the final text into the portal and keep the original Google Docs file, with its full history, as your record.

Step 2: Keep Your Research Notes and Outlines

Your research notes, annotated sources, highlighted readings, brainstorming notes, and concept maps are direct evidence that you engaged with the subject matter before writing. A student who collected and annotated their own sources, built an outline, and drafted a paper based on that research is demonstrably different from a student who submitted AI-generated text with no process behind it. Keep digital copies of all research materials: save highlighted PDFs, take notes in a document that carries a timestamp, and photograph or scan handwritten notes if you take them by hand. These materials are your intellectual record of the work you did before typing the first word.

Step 3: Save Intermediate Drafts

Even if you primarily write in Google Docs (where version history is automatic), save explicitly named intermediate drafts at key stages: after your first complete draft, after incorporating feedback, after revising, and as your final version. Naming conventions matter: names such as 'Essay_Draft1_March10', 'Essay_Draft2_March15', and 'Essay_Final_March18' create a clear timeline of development. This evidence is particularly powerful because it shows that your writing changed and developed over time, a hallmark of genuine human composition that a single pasted block of AI output does not show.
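If you save many drafts, generating names from a fixed pattern keeps them consistent. A minimal sketch of the 'Essay_Draft1_March10' convention above; `draft_filename` is a hypothetical helper, and English month names are hard-coded so the result does not depend on your computer's locale.

```python
from datetime import date

# English month names, hard-coded so the output does not depend on the system locale.
MONTHS = ("January", "February", "March", "April", "May", "June", "July",
          "August", "September", "October", "November", "December")


def draft_filename(project: str, stage: str, day: date) -> str:
    """Build a name such as 'Essay_Draft1_March10': project, stage, month name, day."""
    return f"{project}_{stage}_{MONTHS[day.month - 1]}{day.day}"
```

`draft_filename("Essay", "Draft1", date(2026, 3, 10))` returns `"Essay_Draft1_March10"`. One design caveat: month-name dates do not sort chronologically in a file browser (April sorts before March); if that matters, an ISO date such as 2026-03-10 does.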

Step 4: Save Copies of All Research Sources

Maintain a reference list of every source you consulted, including the specific pages or sections you drew on. Your bibliography, combined with evidence that you actually read and engaged with the sources (annotations, notes, quotation marks around specific phrases you recorded), demonstrates the genuine research process behind your writing. If you used a specific quotation or paraphrase from a source, note where you found it. This documentation makes your intellectual process legible to anyone reviewing your work and makes the alternative explanation (that AI generated the content) implausible.

Step 5: Note Your Writing Timeline

Keep a simple log of when you worked on your assignment: the dates and times of your writing sessions, what you worked on in each session, and how long each session lasted. This does not need to be elaborate; a simple note in a text document with timestamps is sufficient. A writing log that shows three separate sessions over two weeks, with different sections of the paper developed in each, is powerful evidence of a genuine human writing process. AI-generated text typically appears in a single event; human writing develops incrementally over time.
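A plain text file is enough, but if you prefer to keep the log in a structured form, this minimal sketch records each session and summarizes the pattern described above (number of sessions, distinct days, calendar span, total time). `Session`, `format_entry`, and `summarize` are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Session:
    start: datetime
    end: datetime
    work: str  # what you worked on, e.g. "Drafted introduction"


def format_entry(s: Session) -> str:
    """One log line: start time, duration in minutes, and what was done."""
    minutes = int((s.end - s.start).total_seconds() // 60)
    return f"{s.start:%Y-%m-%d %H:%M} ({minutes} min): {s.work}"


def summarize(sessions: list[Session]) -> dict[str, float]:
    """Count sessions and distinct days, and measure the calendar span and total time."""
    if not sessions:
        raise ValueError("log is empty")
    ordered = sorted(sessions, key=lambda s: s.start)
    total = sum((s.end - s.start for s in ordered), timedelta())
    return {
        "sessions": len(ordered),
        "distinct_days": len({s.start.date() for s in ordered}),
        "span_days": (ordered[-1].start.date() - ordered[0].start.date()).days,
        "total_hours": total.total_seconds() / 3600,
    }
```

Three sessions on 1, 8, and 15 March lasting 90, 120, and 60 minutes summarize as 3 sessions on 3 distinct days spanning 14 days and 4.5 hours in total, which is exactly the incremental pattern the log is meant to demonstrate.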

Running a Pre-Submission Self-Check

Before submitting any high-stakes assignment, run your completed work through a self-service AI detection tool to check how your writing scores and to identify specific passages that may be flagged. GPTZero's free tier offers sentence-level detection that is particularly useful for this purpose: it shows not just an overall score but which specific sentences are identified as potentially AI-like, giving you the opportunity to review and revise those passages before submission. Comparisons of how detection tools score ESL writing report that GPTZero has significantly reduced its ESL bias relative to other major tools, with a false positive rate for non-native writers only about 2% above its native-English baseline, compared with 20% or more on some competing platforms. That makes it the most reliable self-check option for ESL writers.

When reviewing your self-check results, pay particular attention to any passages that score as high-probability AI. Read those specific sentences aloud. Do they sound like you? Are they more uniform in rhythm than the rest of your writing? Is the vocabulary in those passages simpler or more formulaic than your writing typically is? These questions help you identify whether the flagged passage needs revision, and give you the self-awareness about your own writing patterns that is the long-term solution to false detection risk.

Run your self-check several days before the submission deadline, not the night before. This gives you time to review flagged passages carefully, make revisions that genuinely improve your writing, and run a second check on the revised version. Do not revise solely to lower a detection score; make revisions that make your writing more specific, more varied, or more clearly analytical. Those revisions will lower the score as a side effect of making your writing better.

If You Are Flagged: A Step-by-Step Response Guide

First Principle: A detection flag is not proof of wrongdoing. It is a statistical probability estimate, and research has documented an average false positive rate of more than 61% for ESL essays across several widely used detectors. You have the right to respond, to present your evidence, and to have a human make the final judgment. Stay calm, gather your evidence, and follow the process below.

Step 1: Do Not Panic and Do Not Delete Anything

Your initial reaction may be distress, and that is perfectly natural. However, the most important thing you can do in the first few minutes after receiving the flag is to preserve all your existing documentation. Do not delete any drafts, notes, or messages, and do not edit your original document. Open your Google Docs version history and take screenshots of the earliest versions with their timestamps visible. The evidence you have already produced is your protection.

Step 2: Request the Specific Detection Evidence

You have the right to know exactly what evidence is being used against you. The information you should request is the specific detection report, the tool used, the version of the tool, the overall score, and if possible, the sentence-by-sentence breakdown of which exact pieces of text were highlighted as problematic. This is crucial in helping you develop your case because you will know exactly what the detector highlighted and be able to counter those exact pieces of text in your evidence. If the institution is not able to provide you with the exact detection report, it undermines their case and strengthens yours.

Step 3: Gather Your Process Documentation

Compile your complete writing process evidence into an organised package: your version history with timestamps, your research notes, your source annotations, your outline, records of any writing centre consultations or peer review sessions, and your writing timeline log if you kept one. Guidance on false AI detection disputes consistently finds that timestamped drafts and version histories are the evidence that most often resolves them: instructors and review committees respond to concrete process evidence far more readily than to arguments about detector accuracy alone. Organise your evidence chronologically and label each piece clearly: Exhibit A: Research notes, Exhibit B: Draft 1 with timestamp, Exhibit C: Draft 2 with revisions, Exhibit D: Final submission.
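The chronological labelling described above can be sketched in a few lines. This assumes each piece of evidence is recorded as a (timestamp, description) pair; `label_exhibits` is a hypothetical helper, not part of any institutional system.

```python
from datetime import datetime
from string import ascii_uppercase


def label_exhibits(items: list[tuple[datetime, str]]) -> list[str]:
    """Sort (timestamp, description) pairs oldest first and label them
    'Exhibit A', 'Exhibit B', ... with the timestamp shown in parentheses."""
    if len(items) > len(ascii_uppercase):
        raise ValueError("more than 26 exhibits; extend the labelling scheme")
    ordered = sorted(items, key=lambda item: item[0])
    return [
        f"Exhibit {ascii_uppercase[i]}: {description} ({timestamp:%Y-%m-%d %H:%M})"
        for i, (timestamp, description) in enumerate(ordered)
    ]
```

Sorting by timestamp rather than by the order in which you happened to collect the files guarantees that Exhibit A is always the earliest evidence, so a reviewer reads your process in the order it happened.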

Step 4: Know Your Rights as an ESL Writer

The bias of AI detection tools against ESL writers is well supported by academic research. The Stanford University study, replications of it, and statements from detection vendors themselves, such as Turnitin, all indicate that false positive rates are significantly higher for non-native English writers. You can cite this research in your response. Make sure your response states that you are a non-native English speaker; that false positive rates for ESL writers are documented to be significantly higher than for native English speakers; which academic research supports this; and that, under most academic integrity policies and the principles of fair process, an AI detection score is not on its own sufficient evidence of misconduct.

Step 5: Request a Meeting and Prepare to Discuss Your Work

Request a meeting, in person or by video call, with your instructor or the integrity officer handling your case. This is not the time to debate; it is the time to show that you understand your own work. Be prepared to talk about your research process and the arguments in your submission, to answer questions about the flagged content, and to share your process documentation. If you wrote the work yourself, you will be able to discuss it in depth, and that fluency is itself part of demonstrating that you are its author.

Step 6: Submit a Written Response

Even if you have already discussed the case with your instructor, it is still important to submit a written response to the formal allegation. This creates a paper trail showing that you disputed the allegation and provided evidence to support your position. The written response should include: an affirmation of authorship; an overview of your process documentation and what it contains; a summary of what academic research says about false positives for ESL writers; a request for human review of your case rather than reliance on the score alone; and your contact information in case reviewers have questions.

Step 7: Appeal If the Initial Response Is Unsatisfactory

If your initial response does not resolve the matter, use your institution's formal appeal process. Most educational institutions have an academic integrity committee or an ombudsman who will review disputed academic integrity findings. Your formal appeal should include all of the evidence gathered in the steps above, organised coherently, along with a statement of the procedural and substantive grounds for your appeal. Typical grounds are: the documented elevated false positive rate for ESL writers on the detection tool used; the lack of evidence from the institution beyond the detection score; and the affirmative evidence of authorship you have provided.

Which Detectors Are Fairest for ESL Writers

Not all AI detectors treat ESL writers equally. Knowing which tools produce the most accurate results for non-native English writers helps you both choose a tool for self-checking and make informed arguments about detector reliability if a specific platform flags you. Independent analyses of false positive rates for non-native English writers across major platforms in 2026 indicate that when training data includes enough non-native English writing, false positive bias can be substantially reduced, which is why the choice of detector matters for ESL writers.

GPTZero: The most ESL-fair major detector in 2026. Its 2025–2026 model updates, specifically targeted at reducing ESL bias, brought its false positive rate for non-native writers to about 2% above baseline, compared with 20%+ on some competing platforms. Its transparent methodology and free tier make it the recommended self-check tool for ESL writers, and its sentence-level breakdown identifies which specific passages trigger detection, which is valuable for both revision and evidence gathering.

Copyleaks: Strong multilingual support (30+ languages with verified accuracy) and a 5.04% false-positive rate for non-native English writers in its own 2024 study, still elevated above its native English baseline but significantly lower than many competitors. It is the best institutional option for multilingual organisations and the strongest tool for non-English content.

Turnitin: Acknowledges an elevated false-positive risk for ESL writers in its own documentation, and its conservative threshold design (suppressing scores below 20%) reduces wrongful accusation risk. However, it is institutional-only, and ESL writers cannot access it for self-checking. If flagged by Turnitin, its own published documentation on ESL false-positive rates is a citable resource in your appeal.

ZeroGPT: The highest false positive rate of any major tool: 20–28% in independent studies, with documented bias against ESL and non-native writing. Do not use it for consequential self-checks, and cite its documented unreliability if it is the tool used to flag your work.

Originality.ai: Explicitly not recommended for academic use by its own company (it is designed for SEO content marketing). High false-positive rates (12–14% across all writers; higher for ESL) and the lack of adjustment for non-native writing patterns make it inappropriate for institutional enforcement against ESL students.

Conclusion

The documented bias of AI detection tools against ESL writers is one of the most significant fairness concerns in current educational technology, and waiting for better detection tools will not fix it. In the meantime, your best strategies are to understand why your writing triggers detection tools, to develop writing habits that produce more varied and detailed writing, to document your process thoroughly so that your authorship can be verified, and to know your rights if you are flagged. Your writing is your own. The statistical tools used to measure it are limited and biased against writers with your background. Those limitations are a fact of the current landscape, and the research documenting them is something you can use in your own defense.

Frequently Asked Questions

Why does my human-written ESL essay get flagged as AI-generated?

AI detection tools analyze statistical properties of text, particularly perplexity (word predictability) and burstiness (variation in sentence length), and compare them with the profile of AI-generated text. ESL writing tends to show lower perplexity and lower burstiness than native English writing because it uses more common words, more regular sentence structures, and more formulaic phrases. These patterns come from second-language acquisition, not from AI. Because detection tools are trained mostly on native English writing, ESL writing does not fit their model of human text, which produces the systematic error documented in the Stanford research.

What is the false positive rate for ESL writers in 2026?

The seminal Stanford University study (Liang et al., 2023) identified an average false positive rate of 61.3% for TOEFL essays written by non-native English speakers, compared with 5.1% for native English speakers' essays on the same topics, a 12-fold difference. A 2026 follow-up study confirmed that the gap persists. A 2024 Copyleaks study found a 5.04% false positive rate for non-native English writers on its platform, higher than its baseline for native English writers but lower than most competitors'. GPTZero has reduced its ESL bias to about 2% above its native-English baseline through specific improvements to its training data.

What is the most important thing I can do to protect myself from false detection?

Document your process comprehensively, starting from the moment you begin any task. That means enabling version history in Google Docs or Microsoft Word, keeping your research materials, and maintaining a timestamped log of your writing sessions. This provides verifiable evidence of original authorship that a detection score cannot disprove. A timestamped history showing your document developing incrementally from your research materials is your strongest defense, and it is especially important for ESL writers, who face an elevated false positive risk.

What should I do if I am falsely accused of using AI?

Do not panic, and do not delete anything. Immediately save all your drafts, notes, and version history. Ask the institution for the detection evidence, such as which tool was used and what score it produced. Gather your process documentation into an organized evidence package. Request a meeting with your instructor to discuss your work and present your evidence. In your response, point out that you are an ESL writer and cite the academic research showing higher false positive rates for non-native English writers. If the initial meeting does not resolve the matter, use your institution's formal appeal process and submit an appeal letter with your documentation attached.

Which AI detector tool is fairest for ESL writers?

Among the major detection tools, GPTZero has done the most to reduce ESL bias: its false positive rate for non-native writers is about 2% above its baseline, compared with false positive rates of 20% or more on some other tools. GPTZero is the recommended self-check tool for ESL writers before submission. Copyleaks has the best multilingual support and has published its own data on false positives among ESL writers. ZeroGPT and Originality.ai have the highest false positive rates and are the least suitable for checking ESL writing.

This guide reflects the state of AI detection technology and policy as of March 2026. False positive rates, platform capabilities, and institutional policies are constantly evolving. ESL writers should check their own institution's AI policy and the current published false positive rates of the relevant platform rather than relying on assumptions about their case.