Your professor just told you that Turnitin flagged your essay as AI-generated. You wrote every word yourself. You spent days researching, outlining, and revising. Now you face an academic misconduct investigation for something you did not do.

False AI detection accusations are not rare edge cases. They are documented and widespread problems. A peer-reviewed Stanford study found that seven major AI detectors misclassified 61.3% of essays by non-native English speakers as AI-generated. A 2026 study of commercial detectors found false-positive rates ranging from 43% to 83% on authentic student writing. Even OpenAI shut down its own AI classifier in 2023 after it correctly identified only 26% of AI-generated text while falsely flagging 9% of human-written text.

This guide provides seven proven methods to fight a false AI detection flag. For a foundational overview of how these systems evaluate your text, see our guide to understanding AI detection.

Key Takeaways:

AI detectors produce false positives at rates ranging from 1% to 83%, depending on the tool, the text type, and the writer’s background.

The strongest defense against a false accusation is documented proof of your writing process, including drafts, version history, and research notes.

Running your text through multiple detectors exposes the technology’s inconsistency and strengthens your appeal.

Published research from Stanford, MIT Sloan, and OpenAI confirms that AI detection is fundamentally unreliable for high-stakes decisions.

Non-native English speakers, neurodivergent writers, and formal academic writers face disproportionately higher false positive rates.

BestHumanize provides proactive content protection by optimizing your text’s linguistic patterns to prevent false flags before they happen.

What Is a False AI Detection Flag?

A false AI detection flag occurs when an AI detection tool incorrectly identifies genuinely human-written text as machine-generated. In technical terms, this is called a false positive.

AI detectors analyze text using two primary metrics: perplexity and burstiness. Perplexity measures how predictable each word choice is. Burstiness measures the variation in sentence length and structure. AI-generated text typically scores low on both. The problem is that many categories of human writing also exhibit these same characteristics. For a data-driven exploration of how often these tools get it wrong, see our analysis of detection accuracy concerns.

Types of False AI Detection Flags and Who Gets Affected

False AI detection flags fall into several distinct categories based on who they affect and why they occur.

Language-Based False Positives

The most documented category affects non-native English speakers. The Stanford study by Liang et al. (2023) found that seven detectors misclassified 61.3% of TOEFL essays as AI-generated. In approximately 19% of papers, all seven detectors unanimously made this error. As The Markup reported, a Johns Hopkins professor noticed Turnitin was much more likely to flag international students’ writing.

Style-Based False Positives

Writers with formal, structured, or highly polished styles face higher false-positive rates regardless of their language background. Academic researchers, legal professionals, medical writers, and journalists following style guides all produce text that can trigger detection algorithms.

Tool-Induced False Positives

Writers who use grammar checkers like Grammarly, writing tutors, or editing tools may inadvertently increase their risk of false positives. These tools smooth out irregularities, producing more uniform text. The University of San Diego Legal Research Center has noted that neurodivergent students are also flagged at higher rates due to reliance on repeated phrases and structural patterns.

Platform-Specific False Positives

Different detectors produce different results on the same text. A 2026 independent test found average real-world accuracy of only 73%, with false-positive rates ranging from 2% to 28%. The JISC National Centre for AI confirmed that using multiple detectors in aggregate reduces false positives to nearly zero, while individual tools remain unreliable.

7 Proven Methods to Fight a False AI Detection Flag

Apply these methods in order to have the strongest possible defense.

Method 1: Request the Full Detection Report

Before responding emotionally, request the specific AI detection report. Ask for the exact detection percentage, which sections were flagged, and which tool was used. Many accusations are based on a single score without context.

Method 2: Document Your Writing Process

The single most powerful defense is proof of process. AI-generated text appears fully formed from nothing. Human-written text has a history. Collect everything:

Google Docs version history showing timestamped edits over days or weeks

Draft files saved at multiple stages of completion

Research notes, bookmarks, highlighted PDFs, and library access logs.

Outlines, brainstorm documents, or mind maps created before writing

Text messages or emails discussing the assignment with classmates

A well-documented writing trail is evidence that no algorithm can fabricate.

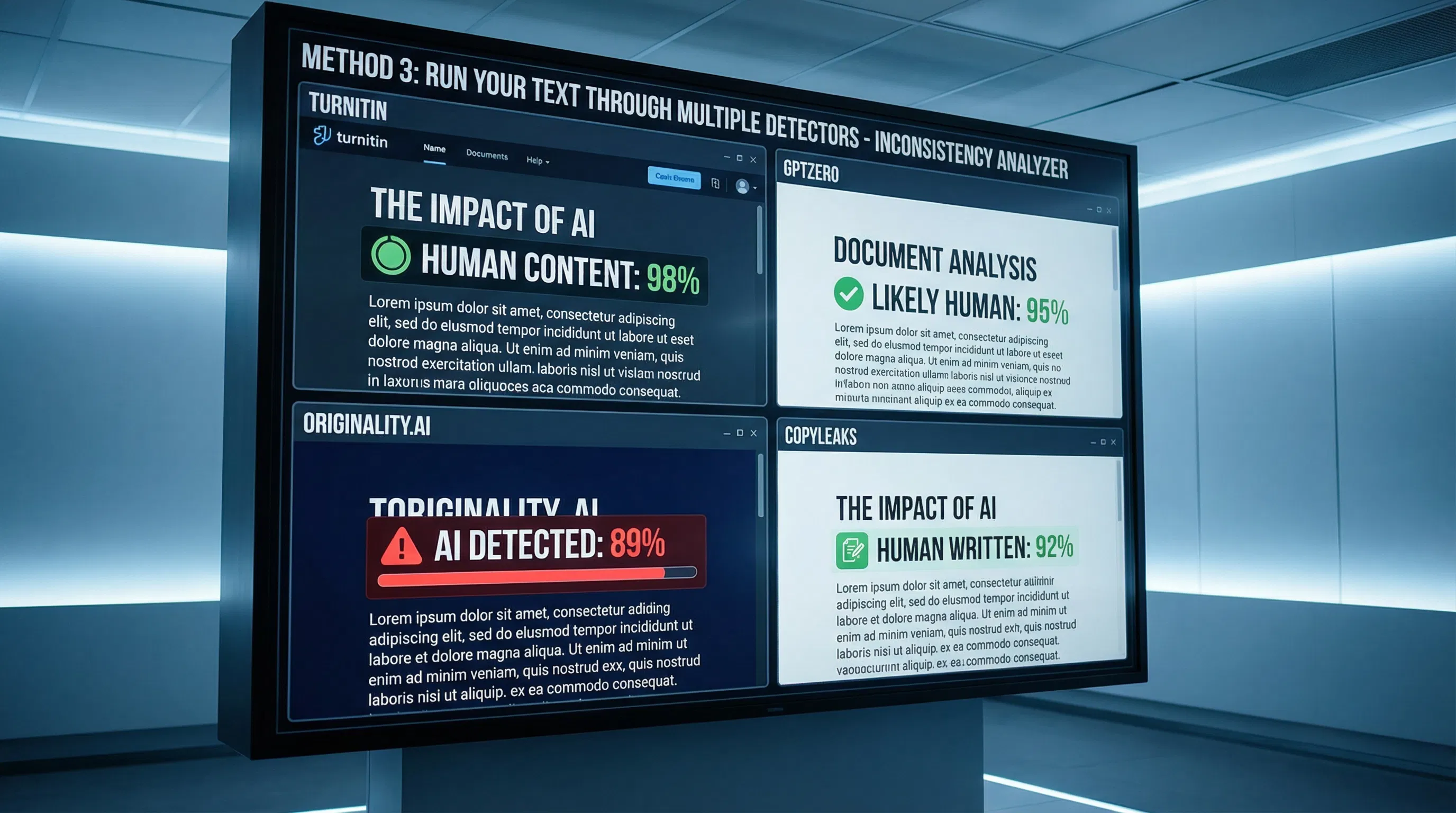

Method 3: Run Your Text Through Multiple Detectors

If Turnitin flagged your text, run it through GPTZero, Originality.ai, Copyleaks, and ZeroGPT. If the results disagree, that disagreement is powerful evidence. The Hyatt et al. (2025) study found that using detectors in aggregate reduces false positives to nearly 0%. Document every result with screenshots.

Method 4: Cite Published Research in Your Appeal

Your appeal should reference specific studies:

Stanford / Liang et al. (2023): 61.3% false positive rate on non-native English speakers. Peer-reviewed in Patterns.

OpenAI (2023): Shut down its own classifier after only 26% accuracy.

MIT Sloan: Published a position that AI detectors don’t work.

Hyatt et al. (2025): Found a 1.3% false-positive rate for AI detectors vs. 5% for human raters.

Method 5: Know Your Institutional Rights

Most universities have formal appeals processes. Key principles:

The burden of proof lies with the accuser, not the accused.

A probability score from an automated tool is not proof of misconduct.

Many institutions now include caveats about the reliability of AI detection in their policies. Quote your institution’s own language.

For cases involving potential expulsion or visa implications, consult an education lawyer.

Method 6: Demonstrate Subject Knowledge

Offer to discuss your paper’s content verbally. A student who genuinely wrote their work can explain their thesis, discuss sources, and articulate their arguments. An AI-generated essay’s supposed author cannot do this convincingly.

Method 7: Protect Yourself Proactively with Content Optimization

The best defense is prevention. Proactive content optimization adjusts your text’s perplexity and burstiness patterns to reflect authentic human writing variation. Our content humanization strategies and advanced rewriting techniques provide detailed methods for ensuring your genuinely human writing is read correctly by flawed algorithms.

Common Mistakes When Fighting a False AI Detection Flag

Admitting fault or apologizing preemptively. A Turnitin score is not evidence. Do not treat it as such.

Arguing emotionally without evidence. Present documented evidence and published research instead.

Relying on the detector’s score to defend yourself. Defend your process, not the score.

Waiting too long to gather evidence. Version history and browser records can expire. Preserve everything immediately.

Using a single alternative detector as counter-evidence. One conflicting result is a data point. Three or four is a pattern of unreliability.

The BestHumanize Solution: Proactive Protection Against False AI Detection

Fighting a false flag after the fact is stressful and disruptive. The better approach is to prevent false flags from occurring in the first place. Our automated content protection platform is designed specifically for this purpose.

Unlimited Humanization Access

BestHumanize operates on an unlimited access model. Students can check every assignment. Freelancers can verify every deliverable. Marketing teams can process entire content calendars. The unlimited model enables iterative refinement, refining your text multiple times until it achieves consistently safe scores across all major platforms.

Competing tools that charge per word force tradeoffs between protection and budget. BestHumanize eliminates this tradeoff at under $10 per month.

Multi-Detector Compatibility

The detection landscape is fragmented across Turnitin, GPTZero, Originality.ai, Copyleaks, ZeroGPT, and Winston AI. BestHumanize optimizes against all major platforms simultaneously, eliminating the whack-a-mole problem of passing one detector only to fail another.

This is particularly critical as institutions deploy layered detection strategies, with Turnitin as primary, GPTZero as secondary. BestHumanize ensures your content passes every layer.

Content Protection Integration

BestHumanize preserves your original meaning, voice, and tone while adjusting only the specific linguistic patterns that trigger detection algorithms. It is not a word spinner. It performs targeted optimization, identifying specific vulnerability points and resolving them surgically.

For organizations, BestHumanize integrates into existing content pipelines as an automated protection checkpoint. The transformation is fundamental: writers stop fearing algorithmic misjudgment and start operating with confidence that their work will be evaluated on its merits.

Conclusion

False AI detection flags are a documented, widespread problem caused by fundamentally flawed technology. AI detectors do not detect AI; they detect writing patterns that overlap between machine output and certain types of human writing.

Fighting a false flag requires evidence, not emotion. Document your writing process, run your text through multiple detectors, cite published research, and know your institutional rights. For proactive protection, tools like BestHumanize optimize your content’s linguistic patterns to prevent false flags before they occur.

A detection score is a probability estimate, not a verdict. You have the right to defend your work, and the evidence is overwhelmingly on your side.

Frequently Asked Questions

What is a false AI detection flag, and how common is it?

A false AI detection flag occurs when an AI detector incorrectly classifies human-written text as machine-generated. According to 2026 independent testing, false positive rates range from 2% to 83%, depending on the tool and text type. The Stanford study found a 61.3% false-positive rate among non-native English speakers.

What is the difference between perplexity-based and burstiness-based detection errors?

Perplexity-based errors occur when predictable vocabulary triggers the detector. Burstiness-based errors occur when uniform sentence structures are flagged. Grammarly’s analysis and Scribbr’s guide provide detailed technical breakdowns of how these metrics work.

How do I appeal a false AI detection accusation at my university?

Request the specific detection report, gather evidence of your writing process, run your text through multiple alternative detectors, and file a formal appeal citing published research on AI detection unreliability from Stanford, MIT Sloan, and OpenAI.

How much does it cost to protect my writing from false AI detection flags?

BestHumanize offers a free tier for initial content checks and unlimited protection plans under $10 per month. Compared to the potential cost of a failed course, a lost client, or a damaged reputation, proactive protection is negligible.

How does BestHumanize protect against false AI detection?

BestHumanize analyzes your text’s perplexity and burstiness patterns and optimizes them to reflect authentic human writing variation across all major detectors simultaneously. It preserves your meaning and tone while adjusting only the statistical signatures that cause false flags. For more strategies, see our guide on reliable detection protection.