Medical writing sits at an uncomfortable intersection. It is the discipline that arguably requires the most precision, consistency, and formal structure, and it is also the discipline whose natural characteristics most closely resemble what AI detectors are trained to flag. A well-written clinical research abstract reads differently from a general interest article. It uses established terminology consistently. It follows a predictable organizational structure. It minimizes personal voice and stylistic variation. Every one of those qualities is a signal that statistical AI detectors interpret as machine-generated output.

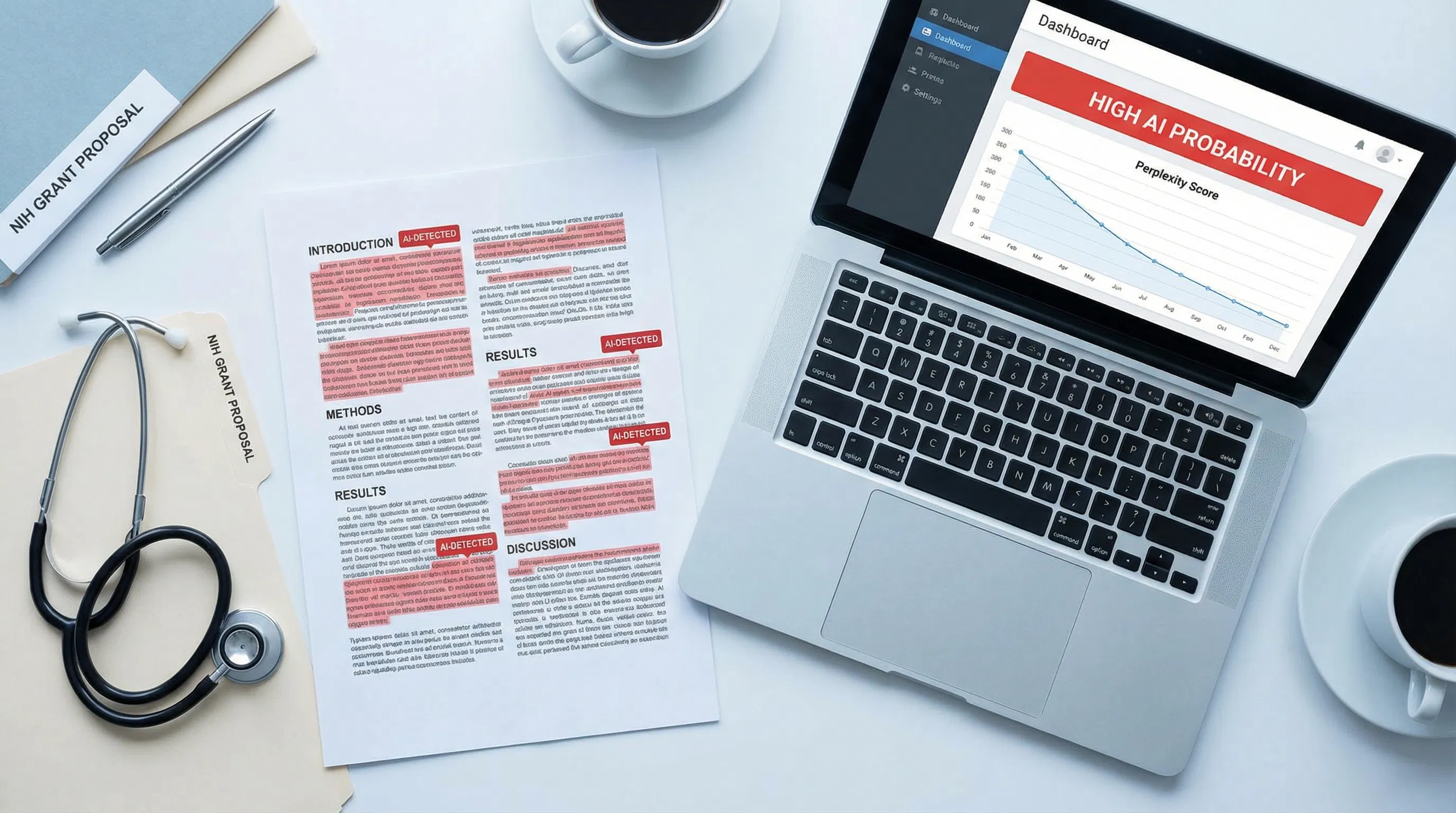

The problem has escalated as journals, funding agencies, and healthcare institutions have begun deploying AI detection tools without adequately accounting for this structural mismatch. The result is that researchers submitting genuine scientific work, clinicians writing original case reports, and grant applicants presenting their own novel hypotheses are finding their work flagged as AI-generated. AI impact medical writing confirms that while AI-assisted writing offers real efficiency benefits in healthcare, the detection tools institutions rely on cannot reliably distinguish between AI-generated medical text and the disciplinary writing conventions that define authentic scientific communication. For anyone whose career depends on that distinction, understanding why detection fails is the first step.

This article explains the specific features of medical writing that trigger false positives, how the NIH's 2025 policy changes affect grant applicants, what the peer-reviewed research actually shows about detection accuracy in scientific abstracts, and what tools like humanize medical writing offer as practical measures for writers navigating this landscape.

Key Takeaways

Medical writing's natural characteristics trigger AI detection false positives. Consistent technical terminology, IMRAD structure, passive voice, formal register, and low stylistic variation are all features AI detectors associate with machine-generated text. They are also the defining features of legitimate scientific writing.

A 2023 study by Rashidi et al. ran an AI detector on 14,440 genuine scientific abstracts published from 1980 to 2023. Up to 8.7 percent were falsely flagged as AI-generated, with 5.1 percent labeled as over 90 percent likely to be AI-generated. This includes abstracts written decades before large language models existed.

NIH formally prohibits substantially AI-developed grant applications. Under NOT-OD-25-132, effective September 25, 2025, NIH will not consider applications substantially developed by AI to represent the original ideas of applicants. The policy also introduced a limit of 6 applications per year per principal investigator and confirmed that NIH uses detection technology to identify AI-generated submissions.

NIH also prohibits peer reviewers from using AI tools. Under NOT-OD-23-149, issued June 2023, all NIH peer reviewers are prohibited from using generative AI to analyze or critique grant applications, as uploading grant content to external AI tools violates confidentiality requirements.

The practical risk for grant applicants is compounded by the ESL problem. Many competitive biomedical researchers write in English as a second language. Their writing naturally yields lower perplexity scores due to reduced lexical diversity, thereby increasing the false-positive risk, precisely in the applicant population competing hardest for NIH funding.

An AI humanizer tool can shift the statistical profile of genuine medical writing toward the range detectors expect from human authors, without altering scientific content, citations, or technical accuracy. This is a legitimate protective measure for researchers whose authentic work would otherwise be flagged.

Why Medical Writing Triggers AI Detection

To understand why medical writing gets flagged, you need to understand what AI detectors are actually measuring. They are not judging the sophistication of the ideas or the depth of the research. They are measuring statistical properties of the text: primarily perplexity (how predictable the word choices are) and burstiness (how much sentence length and structure vary). AI-generated text tends to produce low perplexity and low burstiness because language models select statistically probable word sequences and produce relatively uniform sentence structures. Medical writing, written by skilled humans with years of training in disciplinary conventions, produces those same signals.

Consistent Technical Vocabulary

Medical writing requires precise, consistent use of established terminology. A clinical researcher does not substitute "cardiac event" with "heart problem" for the sake of lexical variety. Terminology is defined, used consistently throughout a document, and corresponds exactly to diagnostic or procedural codes. This consistency is scientifically necessary. It also produces very low perplexity because the same high-precision terms appear repeatedly in predictable contexts, exactly as they would in AI-generated text following the same domain vocabulary.

Passive Voice and Impersonal Register

Scientific convention demands passive voice for methodology sections: "samples were collected," "patients were randomized," "data were analyzed." This is not a stylistic choice. It is a disciplinary norm that reflects scientific objectivity, removing the individual researcher from descriptions of procedures. AI detectors trained on general text expect human writing to contain first-person constructions, contractions, and informal markers. Medical writing systematically avoids them. Every section that reads "participants were excluded if..." scores as AI-like to a detector calibrated on mixed writing styles.

Formulaic Sentence Openings

Medical writing uses predictable transition phrases to signal logical structure: "Furthermore," "In addition," "However," "These results suggest," "Of note." These are taught as best practices in scientific writing courses because they create clarity and ease of navigation for readers skimming dense technical content. They are also the phrase patterns that AI detectors associate with machine output. Language models rely on high-probability transitional phrases; so does disciplinary medical writing. The detector cannot tell the difference.

Any researcher attempting to bypass AI detectors by manually varying these phrase patterns faces a real dilemma: the variations that satisfy the detector often violate the conventions their journal expects. A tool that adjusts statistical properties while preserving disciplinary language norms is the practical answer to this problem.

Scientific Abstracts: The Most Vulnerable Document Type

Of all medical writing formats, the structured scientific abstract is the one most likely to be incorrectly flagged as AI-generated. An abstract has a defined structure: Background, Methods, Results, Conclusions. It uses highly compressed, precise language. It avoids personal voice. It follows a predictable arc from research question to findings. All of these features, which make abstracts effective scientific communication, also make them the document type that most closely resembles AI-generated text under statistical detection methods.

Research Finding: A 2023 study by Rashidi et al. ran a GPT-2-based AI detector on 14,440 genuine scientific abstracts published in top journals between 1980 and 2023. Up to 8.7 percent were falsely identified as AI-generated, with 5.1 percent labeled as having over 90 percent probability of being AI-generated. Some journals showed false positive rates reaching 10.2 percent. This included abstracts written in the 1980s and 1990s, decades before large language models existed. The study documents the structural false positive problem: medical writing conventions produce statistical patterns that detection tools misinterpret as machine output. |

Oncology abstracts AI detection was examined in a 2024 JCO Clinical Cancer Informatics study that analyzed 15,553 ASCO meeting abstracts using three AI detectors. The study found a measurable increase in AI detection signal in abstracts from 2023 onward, consistent with real LLM adoption in scientific writing. But critically, the same methods that detected genuine AI use in 2023 abstracts also produced signals in 2021 and 2022 abstracts, written before those tools existed, confirming the underlying false-positive problem in medical writing.

For researchers who need to submit genuine abstracts that pass scrutiny, reduce AI detection risk by introducing measured variation in sentence rhythm, phrasing, and structure without compromising scientific content. The detection tool does not know that you wrote this work after three years of research. It measures how many consecutive tokens it can predict. Those are separate questions with different answers.

Grant Proposals: High Stakes, High False Positive Risk

A grant proposal is a distinctive form of professional writing that sits somewhere between academic writing and persuasive argument. It must demonstrate scientific originality, methodological rigor, and the applicant's personal expertise. It also must follow highly standardized structures, use precise technical vocabulary, and maintain a formal register throughout. These requirements create the same detection vulnerability as scientific abstracts, with dramatically higher stakes.

A false positive on a journal submission is damaging. A false positive on an NIH R01 or a major healthcare foundation grant can delay or end a research program. NIH AI grant policy issued in July 2025 makes this risk concrete: NIH now explicitly screens grant applications using AI detection technology and will not consider applications substantially developed by AI to be original ideas of applicants. For a researcher who wrote every word of their Specific Aims page themselves but whose writing style happens to produce low perplexity, this creates genuine exposure.

The Specific Aims Problem

The Specific Aims page is the single most consequential page in an NIH application. It must be clear, structured, and persuasive. Most successful Specific Aims pages follow a recognizable template: open with the problem, state the knowledge gap, introduce the central hypothesis, outline the aims, and conclude with significance. This template exists because it works. Experienced grant writers learn it explicitly, teach it to trainees, and refine their own applications within its framework.

That template also produces predictable, low-perplexity text. The phrase "the central hypothesis of this proposal is..." is a signal phrase in scientific writing. It is also exactly the kind of highly probable phrase sequence that detectors use to flag AI output. A researcher who has written dozens of successful grants using this structure faces a real risk that their latest application will be flagged as AI-generated by a system that has never seen the 20 years of drafts behind it.

ESL Researchers and the Compounded Risk

The ESL false positive problem is particularly acute for grant applicants. Many of the most competitive researchers in biomedical science write in English as a second language. They tend to use formal vocabulary consistently, rely on learned phrase patterns, and avoid the informal variation that detectors associate with native English writing. According to the Stanford Liang et al. study, their writing naturally produces lower perplexity. Under NIH's new detection policy, applications with lower perplexity could be flagged as AI-generated. Using tools that beat AI detectors by introducing controlled linguistic variation is a more practical solution than asking non-native English writing researchers to artificially adjust their language style.

NIH Policy: What the Rules Actually Say

NIH has issued two notices that together define the current policy environment for AI and grant writing. Understanding exactly what each says matters, because the consequences of misreading them run in both directions: applicants who believe all AI assistance is banned may be overcautious in ways that disadvantage them, while applicants who assume detection is too inaccurate to matter may be undercautious about genuine risk.

NOT-OD-25-132: Supporting Fairness and Originality (July 2025)

NIH originality notice 2025 is the most significant recent development. Effective for applications submitted from September 25, 2025, onward, NIH will not consider applications that are either substantially developed by AI or contain sections substantially developed by AI to represent the original ideas of applicants. The notice explicitly states that NIH will employ the latest technology to detect AI-generated content. It also introduces a limit of 6 applications per year per principal investigator. Limited AI assistance for specific tasks, such as grammar checking or literature search support, is not explicitly prohibited, but the notice warns that AI use carries risks, including plagiarism and fabricated citations.

NOT-OD-23-149: Peer Review AI Prohibition (June 2023)

NIH peer-review AI ban prohibits NIH peer reviewers from using natural language processing, large language models, or other generative AI technologies to analyze or formulate critiques of grant applications. The rationale is confidentiality: uploading grant content to external AI tools exposes proprietary scientific information to systems with opaque data handling. This prohibition runs in both directions for the integrity of the process; both applicants and reviewers are constrained by AI rules.

Core Principle: NIH's position is nuanced, not absolute. Limited AI assistance for specific preparatory tasks is not explicitly banned. What is banned is substantial AI development of the proposal itself, which NIH treats as undermining the originality requirement. The practical challenge is that NIH's detection technology may flag writing that was entirely original but whose statistical properties resemble AI output, particularly for ESL applicants and researchers writing in established disciplinary formats. |

The distinction between "AI-assisted" and "substantially AI-developed" is precisely where the risk of false positives lies. A researcher who used Grammarly for grammar checking, cited literature with a reference manager, and worked with a biostatistician on their methods section did not substantially develop their proposal with AI. But their polished, structured, technically consistent prose may score in the same statistical range as a proposal that was. Using humanized AI content tools to shift the statistical profile of genuine writing before submission addresses this risk without introducing any scientific inaccuracy.

IMRAD Structure and the Detection Trap

IMRAD stands for Introduction, Methods, Results, and Discussion. It is the standard organizational structure for empirical research papers in medicine and the life sciences, and it has been the dominant format for clinical research communication for decades. It exists because it works: it provides readers with a reliable map through a paper, allows them to extract the information they need, and facilitates peer review. It is also, from a statistical detection standpoint, an extremely predictable document structure.

Introduction: Purpose and Context

Medical paper introductions follow a recognizable arc. They open with the broader clinical context, narrow to the specific problem, review relevant prior work, identify the knowledge gap, and state the study's objective. Every successful medical writer learns this structure. The paragraph beginnings, the transition logic, and the concluding hypothesis statement are all predictable. A detector measuring phrase-level patterns will find them consistently predictable, scoring the Introduction as low-perplexity across the board.

Methods: The Most Vulnerable Section

Methods sections are the highest-risk IMRAD section for false positives. They use passive voice almost exclusively ("blood samples were collected," "participants were assigned," "data were analyzed using SPSS"), refer to established protocols by name, use precise measurement language, and contain almost no personal voice or stylistic variation. This is scientifically appropriate, rigorous writing. It is also text that produces some of the lowest perplexity scores in scientific communication, consistently triggering detection flags.

AI regulations and medical writing confirm that the challenge for medical writing AI governance is particularly acute because the technical nature of medical writing, its required precision and standardization, creates inherent vulnerabilities to AI-generated false positives that do not exist in other forms of professional writing. The regulatory frameworks deployed to address AI misuse in medical writing must account for this structural reality.

Results and Discussion: Less Flagged, Still at Risk

Results sections present data systematically, using tables, figures, and precise numerical reporting. Discussion sections interpret findings in the context of the prior literature, consider limitations, and propose future directions. Both sections carry less detection risk than the Methods sections, but they still rely on formulaic phrase patterns, disciplinary vocabulary, and structured argumentation, which can yield elevated AI detection scores. Using an AI text humanizer to introduce natural sentence-level variation across all four IMRAD sections, particularly the Methods, provides comprehensive coverage against the risk of false positives.

Clinical Language Patterns That Detectors Misread

Beyond IMRAD structure, specific clinical writing patterns reliably produce false positives. Understanding which ones does two things: it helps medical writers recognize why their work was flagged and know which elements to address when adjusting their work's statistical profile.

Hedging language. "These findings suggest," "may indicate," "appears to be associated with," "warrants further investigation." Clinical writers hedge carefully because scientific claims must reflect the actual evidence. Hedging language is a disciplinary norm. It also produces consistent, predictable sentence endings that detectors score as AI-like.

Standardized phrase constructions. "A statistically significant difference was observed," "no significant adverse events were reported," "the results are consistent with previous findings." These phrases appear in thousands of papers because they convey standard scientific meanings efficiently. They are also highly predictable to any language model trained on medical literature, resulting in low perplexity scores.

Consistent abbreviation use. A clinical paper defines an abbreviation once and uses it consistently thereafter. "Randomized controlled trial (RCT)" becomes "RCT" for the rest of the document. This consistency is required by style guides and improves reader navigation. Detectors see the consistently predicted abbreviation as a low-perplexity signal.

Passive construction density. As noted above, methodology sections are heavily passive. What is less obvious is that passive constructions also permeate results and discussion sections, and even introductions, when the emphasis is on findings rather than on researchers. A clinical paper may use the passive voice in 40 to 60 percent of its sentences, far higher than in any general-audience text a detector was trained on.

Producing undetectable AI text in a medical writing context is not about making the writing sound less scientific. It is about introducing sufficient statistical variation at the sentence and phrase levels so that the detector's perplexity and burstiness metrics fall within the human range, while the scientific content, terminology, and structure remain entirely intact.

Real Consequences: When Medical Writing Is Wrongly Flagged

A false positive in medical writing does not have the same consequences as a false positive on a student essay. The stakes scale with the context, and in healthcare and research, the context is often extremely high.

Grant rejection or compliance referral. Under NIH's 2025 policy, a grant application that triggers an AI detection flag and cannot be cleared faces rejection on originality grounds. At competitive funding rates of 15 to 20 percent, a single cycle lost to a false positive can set a research program back two years. More seriously, NIH explicitly notes that non-compliant use of AI may be referred to the Office of Research Integrity for misconduct review.

Journal submission complications. Major journals, including Nature, Science, and the NEJM, have implemented AI disclosure requirements. Some have moved toward active detection screening at submission. A paper that triggers a false positive during editorial review may be delayed, returned to authors for explanation, or, in the worst case, rejected without review, even when the work is entirely original.

Regulatory submission delays in clinical contexts. Clinical trial summaries, investigational new drug applications, and medical device documentation submitted to the FDA or other regulatory bodies are subject to increasing scrutiny for AI-generated content. A regulatory submission that gets flagged faces additional review, potential delay, and questions about the integrity of the underlying data.

Professional credibility damage. AI detectors in behavioral health published in Account Research confirmed that inaccurate AI detectors risk unnecessary penalties for human authors in medical and behavioral health academic writing. The study found that detector accuracy varied significantly across academic healthcare writing types, raising serious concerns about their suitability for consequential deployment without human oversight.

Each of these scenarios can be substantially reduced by running finished documents through a free AI humanizer before submission. The time investment is minimal compared to the time lost responding to a false accusation.

What Medical Writers and Researchers Can Do

Understand Which Sections Carry the Highest Risk

Methods sections are the most likely to be flagged. If you are submitting a paper or grant, run your Methods section through an AI detector before submission and note where the highest-confidence flags appear. This gives you a targeted roadmap for the sections that need the most statistical variation introduced.

Vary Sentence Length Deliberately in High-Risk Sections

The single most effective manual intervention for reducing burstiness-related flags is varying sentence length. If your Methods section consists predominantly of medium-length passive-voice sentences of similar structure, deliberately break up the rhythm. Add a short declarative sentence after a long explanatory one. Start a sentence with a clause rather than a noun phrase. These changes do not affect scientific content, but they significantly increase burstiness scores.

Introduce Personal Voice Where Appropriate

Discussion sections, in particular, permit more personal framing than Methods sections do. "We interpret these findings to suggest..." reads differently from "These findings suggest..." to a detector, because the first-person construction introduces vocabulary and structure that human writers, not language models, tend to produce. Discussion sections are the legitimate place to bring a research perspective into a paper, and doing so also improves detection scores.

Document Your Process as a Precaution

For grant applications in particular, maintaining a clear record of your drafting process is essential. Version-controlled drafts, notes from discussions with collaborators, and records of the preliminary data that informed your specific aims provide clear evidence of authentic intellectual development. If your application is flagged, this documentation is your primary defense.

Use a Statistical Profile Tool Before Submission

The most reliable proactive measure is running your completed document through a tool designed to shift its statistical profile toward the human writing range. AI detection bypass tools work by introducing controlled variation in vocabulary distribution, sentence rhythm, and phrasing patterns, targeting the specific metrics detectors measure without altering scientific content. For high-stakes submissions, this is a reasonable precaution that takes minutes and can prevent months of delays.

Solution Section: Protecting Genuine Medical and Scientific Writing

The core problem for medical writers is that the detection systems now being deployed against AI misuse were not designed to distinguish between AI-generated text and legitimate scientific writing conventions. They were designed to distinguish AI from general human writing, and medical writing is not general human writing. It is highly specialized, disciplinarily constrained, and statistically predictable by design. Treating medical writers as if the same detection criteria that work for essay mills apply to them is a category error with serious professional consequences.

A Testing-First Workflow

Before submitting any high-stakes document, run it through at least two different AI detection tools to understand its statistical profile. Note which sections receive the highest AI probability scores. This creates a targeted editing roadmap rather than requiring wholesale revision of a carefully crafted document. Focus your attention on Methods sections, literature review passages, and any section with dense passive voice.

Targeted Statistical Adjustment

Once you know which sections are at risk, address the specific statistical signals those sections produce. For perplexity, vary vocabulary at the phrase level without compromising terminology precision. For burstiness, vary sentence length and structural patterns within paragraphs. These adjustments are consistent with the goal of clear scientific communication and do not require changing any substantive content.

Unlimited Processing for Volume Writers

Prolific researchers, medical writers working across multiple client projects, and grant-writing teams may need to process substantial volumes of text. Platforms with per-word costs or session limits create friction that discourages consistent use. Humanize AI writing provides free, unlimited access to AI text transformation, so research teams can systematically process all their documents, not just the ones they are most worried about.

Compliance Documentation for Grant Submissions

For NIH and other grant submissions where AI detection is now explicitly part of compliance evaluation, consider including a brief process disclosure in your application. Noting that AI tools were used solely for grammar checking, with all scientific content developed by the research team, preempts the interpretation that any AI flag reflects substantive AI authorship. NIH's own guidance suggests that limited AI assistance for specific preparatory tasks is not the concern their policy targets.

Conclusion

Medical writing does not trigger AI detection false positives because researchers are cutting corners. It triggers false positives because disciplinary writing conventions, developed over decades of scientific communication, produce statistical patterns that detection tools were never calibrated to interpret correctly. IMRAD structure, technical vocabulary, passive voice, and formulaic phrase patterns are features of rigorous science. There are also features that detectors flag. As NIH and journal publishers deploy increasingly aggressive detection technology, researchers need both the documentation trail to defend their authentic work and the tools to shift their statistical profile into the range that detection systems recognize as human. An AI content humanizer that targets perplexity, burstiness, and phrase distribution without touching scientific content is the practical answer to a structural problem that will not be solved at the policy level before the next submission deadline.

Frequently Asked Questions

Why does medical writing get flagged as AI-generated?

Medical writing triggers AI detection false positives because its defining characteristics, including consistent technical vocabulary, IMRAD structure, passive voice, low stylistic variation, and formulaic phrase patterns, produce the same low-perplexity, low-burstiness statistical profile that AI detectors are trained to flag. A study by Rashidi et al. found that up to 8.7 percent of genuine scientific abstracts published before the existence of AI writing tools were falsely flagged as AI-generated. The problem is not the writing. It is a detection system calibrated on general text being applied to specialized scientific communication.

What is the NIH policy on AI use in grant proposals?

NIH issued NOT-OD-25-132 in July 2025, effective from September 25, 2025. The notice states that NIH will not consider applications substantially developed by AI to represent the original ideas of applicants, and confirms that NIH employs detection technology to identify such applications. A separate notice, NOT-OD-23-149, from June 2023, prohibits peer reviewers from using AI tools to analyze or critique applications, citing confidentiality concerns. Limited AI assistance for specific preparatory tasks, such as grammar checking, is not explicitly prohibited, but researchers should document their process and ensure all scientific content reflects their own original thinking.

Do AI detectors produce false positives on scientific abstracts?

Yes, at measurable rates. The Rashidi et al. 2023 study found false-positive rates of up to 8.7 percent on genuine abstracts, including those from journals like NEJM, where rates reached 10.2 percent. A 2024 JCO Clinical Cancer Informatics study analyzing over 15,000 oncology conference abstracts confirmed that a detection signal appeared in pre-ChatGPT abstracts, consistent with the false positive problem in medical writing. AI detector accuracy on behavioral health academic writing varies significantly across document types, as confirmed in a 2025 study published in Account Research.

How does the IMRAD structure trigger AI detection?

IMRAD (Introduction, Methods, Results, Discussion) is the standard structure for empirical medical research papers. Each section uses predictable organizational patterns, standardized transition phrases, and disciplinary language conventions. Methods sections, in particular, rely heavily on the passive voice and standardized protocol descriptions, which produce very low perplexity scores. Introduction sections follow a recognizable funnel structure with predictable phrase openings. Discussion sections use formulaic hedging language. All of these features are scientifically appropriate and professionally expected, but they collectively produce a statistical signature that AI detectors associate with machine-generated content.

What can healthcare writers do to avoid false AI detection flags?

Four practical steps help. First, identify which sections of your document carry the highest detection risk, typically Methods sections, and focus revision effort there. Second, deliberately introduce sentence-level variation in high-risk sections without altering scientific content. Third, use first-person voice in Discussion sections where disciplinary norms permit it. Fourth, for high-stakes submissions, including grant applications, use an AI text transformer that adjusts the statistical properties of your text toward the human writing range before submission. Maintain version-controlled drafts as documentation of your authentic writing process in case a detection flag prompts a challenge.