AI detection tools measure statistical properties of text. They do not verify authorship, read intent, or observe processes; they compute numbers from word sequences and classify the result. That means protecting your genuinely human writing from false flags is a technical problem with technical solutions, and those solutions are grounded in what the tools actually measure: primarily perplexity (how predictable your word choices are) and burstiness (how much your sentence lengths vary). A technical explanation of the burstiness and perplexity metrics AI detectors use, and of why they systematically overlap with certain patterns in human writing, confirms that these two signals are the foundation of most commercial detection systems, and that formal writing, heavily edited prose, and non-native English writing all naturally produce the low perplexity and low burstiness scores that detectors associate with AI generation.

This guide presents seven concrete, data-backed methods for protecting your writing from false AI detection flags. Each method is grounded in how detection systems actually work: not in tricks, workarounds, or gimmicks, but in measurable changes that shift statistical signals in the right direction. The methods apply to students, professionals, content creators, and anyone whose genuinely human-written work has been or might be evaluated by an AI detection tool. They are ordered from highest immediate impact to longest-term protection, and they can be applied individually or as a complete integrated workflow.

Key Takeaways

Every protection method in this guide addresses a specific, measurable signal that AI detectors use. Sentence rhythm variation increases your burstiness score. Specificity and personal voice raise your perplexity score by introducing low-probability word choices. Removing AI vocabulary fingerprints eliminates n-gram patterns that neural classifiers have learned to flag. How AI detection systems in 2026 combine perplexity, burstiness, and n-gram analysis to classify text, and which writing characteristics produce false positive risk, confirms that understanding which signals detectors measure is the prerequisite for addressing them effectively.

The most effective single intervention is sentence rhythm variation. No other technique produces a larger improvement in detection scores with less effort, because low burstiness (uniform sentence length) is the single most common cause of false positive flags on human writing, and introducing rhythm variation requires only editing, not rewriting.

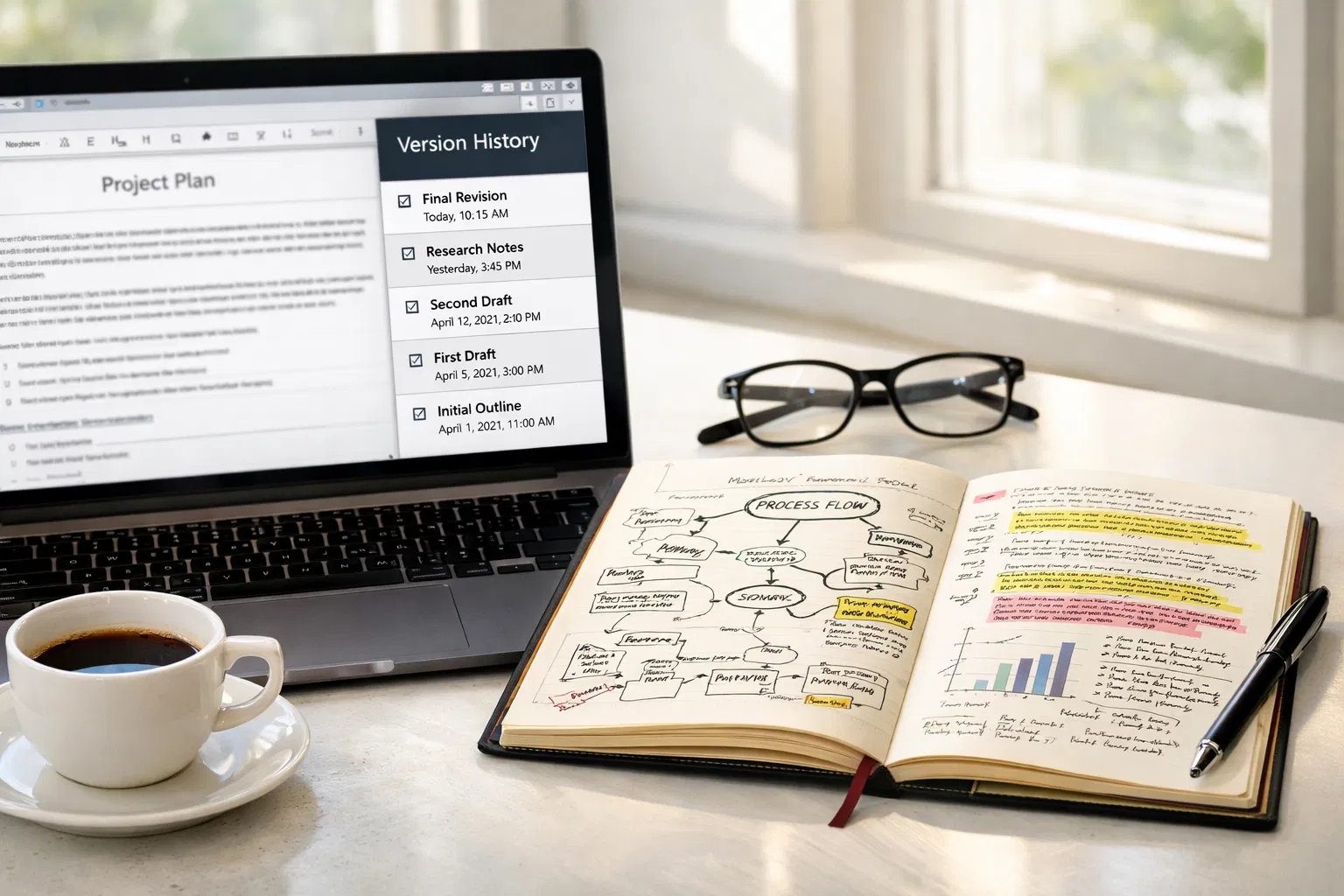

The strongest long-term protection is process documentation (version history, research notes, intermediate drafts), because it creates evidence that exists independently of any detection score. A timestamped record of your writing process cannot be contradicted by a statistical estimate. If you are flagged, documented process evidence is what resolves the dispute.

Running your work through multiple detection tools before submission is a 15-minute investment that can prevent significant consequences. Different tools disagree on flagged content more often than most writers expect, and such disagreement is one of the strongest available signals that a flag may be a false positive. How to use multiple AI detection tools as a pre-submission check and what disagreement between tools signals about detection reliability confirms that no single detection tool should be treated as authoritative, and that comparing results across at least two independent platforms is the minimum standard for pre-submission verification.

AI humanizer tools provide a useful automated final pass for addressing residual statistical patterns after manual editing, but they are most effective as the last step in a workflow that already includes manual rhythm variation, specificity additions, and vocabulary fingerprint removal. A humanizer applied to unedited AI-generated text addresses statistical symptoms without addressing the underlying structural causes. How AI humanizer tools work and their role in a comprehensive false-positive protection workflow for writers using AI assistance illustrates how these tools should complement, not replace, the manual editing steps that produce genuinely human-sounding text.

The 7 Methods at a Glance

| Method | Primary Signal It Addresses | Effort Level | Protection Against |
| --- | --- | --- | --- |
| 1. Sentence rhythm variation | Low burstiness score | Low (edit existing drafts) | Uniform sentence length flagging |
| 2. Specificity and personal voice | Low perplexity, generic claims | Moderate (requires research and opinion) | Vague AI-style attribution and abstract language |
| 3. Remove AI vocabulary fingerprints | N-gram overrepresentation of flagged terms | Low (find-and-replace pass) | Specific word-level detection triggers |
| 4. Pre-submission multi-tool check | All measurable signals | Low (takes 15 minutes) | Avoidable surprises before high-stakes submission |
| 5. Process documentation | None (not a writing change) | Low (ongoing habit) | False accusation consequences; strengthens appeal |
| 6. Workflow design before writing | All signals (addresses root before drafting) | Moderate (requires discipline) | Structural AI patterns from the start |
| 7. AI humanizer tools (as final pass) | Perplexity and burstiness mechanically | Low (automated) | Residual statistical patterns after manual editing |

Before You Begin: Understanding What Detectors Measure

Every method in this guide addresses one or more of the signals that AI detection tools measure. The core signals are perplexity and burstiness, two statistics that, when both are low, produce a high-confidence AI classification. High perplexity means your word choices are statistically unpredictable, a characteristic of human writing with varied vocabulary, personal idioms, and contextually specific language. High burstiness means your sentence lengths vary substantially, a characteristic of human prose where analytical passages, short emphatic statements, and complex multi-clause sentences alternate naturally. Why perplexity and burstiness measurements, while foundational to most AI detection tools, have documented limitations, including false positive bias on formal human writing, confirms that both signals overlap substantially between well-edited human writing and AI-generated text, which is why false positive protection requires actively managing both signals, not just hoping your writing falls on the right side of the boundary.

On top of perplexity and burstiness, neural classifier-based detectors (for example, classifiers built on BERT or RoBERTa) also identify higher-level patterns: characteristic AI vocabulary, structural templates, and semantic coherence profiles. These are addressed by Methods 2 and 3 below. Keep both signal levels in mind as you work through the methods: the surface-level statistical signals (perplexity, burstiness) and the deeper learned patterns (vocabulary fingerprints, structural templates, voice absence).

Method 1: Vary Your Sentence Rhythm — the Burstiness Fix

Burstiness, the standard deviation of sentence lengths across a document, is the single most reliably fixable source of false positive detection risk in human writing. Uniform sentence length is the loudest possible AI signal: detectors flag it with high confidence because AI language models default to medium-length sentences throughout their output, producing a statistically flat rhythm that human writing rarely exhibits. Addressing this requires no rewriting of content, only restructuring of the sentences that already exist.
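To make the definition above concrete, here is a minimal sketch that treats burstiness as the population standard deviation of sentence lengths in words. The function names and the naive sentence splitter are assumptions made for illustration; commercial detectors do not publish their exact formulas, so treat the number as a rough self-check rather than a reproduction of any tool's score.

```python
import re
import statistics


def sentence_lengths(text):
    """Split on ., ! or ? followed by whitespace; return words per sentence."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def burstiness(text):
    """Population standard deviation of sentence lengths (assumed definition)."""
    lengths = sentence_lengths(text)
    return statistics.pstdev(lengths) if len(lengths) > 1 else 0.0


uniform = "The tool reads the text. The tool scores the text. The tool flags the text."
varied = ("This matters. The tool reads every sentence in the document "
          "and scores each one against a model. Why?")

print(sentence_lengths(uniform), burstiness(uniform))  # [5, 5, 5] 0.0
print(sentence_lengths(varied), burstiness(varied))
```

The uniform sample scores zero because every sentence has the same length; the varied sample scores well above zero even though it is no longer overall.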

The Three-Beat Rule

Audit every paragraph of four or more sentences and apply the three-beat rule: aim for at least one sentence under ten words, at least one sentence over twenty-five words, and at least one sentence in a different grammatical mode from the others (a question, a direct address to the reader, a sentence fragment for emphasis, or an extended clause-heavy analysis). Not every paragraph needs all three beats, but if every paragraph reads at the same pace, the problem is structural, and the fix is structural.
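The audit can be roughed out in a few lines of code. The sketch below is an assumption-laden helper, not part of any detector: `three_beat_report` is a hypothetical name, the sentence splitter is naive, and a trailing question mark stands in as a crude proxy for "a different grammatical mode".

```python
import re


def three_beat_report(paragraph):
    """Report which of the three beats a paragraph contains.

    Returns None for paragraphs of fewer than four sentences,
    since the rule only targets longer paragraphs.
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph.strip()) if s]
    if len(sentences) < 4:
        return None
    lengths = [len(s.split()) for s in sentences]
    return {
        "short": any(n < 10 for n in lengths),    # a sentence under ten words
        "long": any(n > 25 for n in lengths),     # a sentence over twenty-five words
        "question": any(s.endswith("?") for s in sentences),  # proxy for a mode change
    }


flat = ("Detectors measure sentence length variation across a whole document. "
        "They compare that variation with what a model expects. "
        "They also look at how predictable each word is. "
        "They then combine both numbers into a single score.")

print(three_beat_report(flat))
```

A paragraph that reports `False` for "long" and "question", like the sample here, is a candidate for the restructuring described above.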

Short sentences are the tool most underused in formal academic and professional writing. They do not have to stand alone as complete arguments; they can function as pivots, emphases, or transitions. 'This matters.' 'Three problems emerge.' 'The data suggests otherwise.' Each of these is complete, analytical, and rhythmically effective. Each introduces the kind of sentence length variance that raises your burstiness score and signals human compositional rhythm.

The No-Three-In-A-Row Rule

A simpler diagnostic: scan your draft for any run of three consecutive sentences of similar length. Where you find one, break it. The break can be a short emphatic sentence inserted between the second and third, a merger of two similar-length sentences into one longer one, or a restructuring of one sentence to introduce a subordinate clause that extends its length substantially. Any of these moves disrupts the uniform rhythm that detectors flag and restores the natural variation of human prose.
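The scan described above is easy to automate. This sketch assumes "similar length" means word counts within three words of each other; that tolerance, the function name `uniform_runs`, and the simple splitter are all illustrative choices rather than values taken from any detection tool.

```python
import re


def split_sentences(text):
    """Naive splitter: break after ., ! or ? followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def uniform_runs(text, tolerance=3, run=3):
    """Return start indexes of windows of `run` consecutive sentences
    whose word counts all lie within `tolerance` words of each other."""
    lengths = [len(s.split()) for s in split_sentences(text)]
    hits = []
    for i in range(len(lengths) - run + 1):
        window = lengths[i:i + run]
        if max(window) - min(window) <= tolerance:
            hits.append(i)
    return hits


draft = ("The detector reads the draft carefully. "
         "The score depends on word choice. "
         "The flag appears after the scan. "
         "Why? "
         "Because every sentence so far has had almost exactly "
         "the same number of words in it.")

print(uniform_runs(draft))  # [0]: the first three sentences form a uniform run
```

Each index returned marks the first sentence of a run worth breaking up with one of the three moves listed above.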

Use Punctuation for Rhythm

Em dashes, colons, and semicolons are human rhythm markers that AI models underuse because they introduce ambiguity about sentence boundaries that the model's training tends to resolve conservatively. Using these punctuation structures, particularly the em dash, which creates an interruption mid-sentence, contributes to both burstiness variation and the perplexity elevation that comes from less-predictable syntactic constructions. They are also genuinely useful for precise writing, making this a quality improvement as well as a detection score improvement.

Method 2: Inject Specificity and Personal Voice — the Perplexity Fix

Perplexity measures how predictable your word choices are. AI models tend to favour high-probability next words at every step, producing text that is statistically very predictable, that is, low in perplexity. Human writers make less predictable choices: they use specific vocabulary, personal idioms, domain-specific jargon from real experience, concrete examples from their own observation, and opinions formed through engagement with the subject. Adding these elements raises perplexity because they introduce word combinations that a language model optimising for the most probable sequence would not choose.
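For readers who want the arithmetic, perplexity is conventionally computed as the exponential of the average negative log-probability a language model assigns to each token. The sketch below applies that standard formula to hand-picked probabilities; the numbers are invented for illustration, since a real detector would obtain them from its own scoring model.

```python
import math


def perplexity(token_probs):
    """exp of the mean negative log-probability over all tokens."""
    n = len(token_probs)
    return math.exp(-sum(math.log(p) for p in token_probs) / n)


# Hypothetical per-token probabilities, not output from any real model.
predictable = [0.5, 0.5, 0.5, 0.5]    # every word is a likely choice
surprising = [0.5, 0.05, 0.5, 0.05]   # two words are unlikely choices

print(perplexity(predictable))  # about 2.0
print(perplexity(surprising))   # about 6.32
```

Swapping just two likely words for unlikely ones roughly triples the score, which is why specific names, numbers, and personal phrasing move this signal more than cosmetic edits do.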

Replace Generic Claims with Specific Evidence

Every generic claim in your writing ('many experts believe', 'research has shown', 'this is important for') lowers your perplexity score by using the most probable words to convey a commonly made point. Replace each with the specific evidence: the named researcher, the year, the actual finding, and the specific number. 'A 2026 meta-analysis of 13 detection studies found that no tool exceeded 80% real-world accuracy across varied content' is more specific, more credible, and statistically less predictable than 'research shows AI detectors are not always accurate'. The specific version raises perplexity and demonstrates genuine engagement with the subject.

Add First-Person Analytical Voice

AI models cannot form opinions through experience, and their training to avoid controversy means they default to neutral, noncommittal language. Adding your own evaluative perspective (which claim you find most convincing, which evidence strikes you as strongest, what surprised you, what you would have predicted differently) introduces word combinations that are genuinely personal and therefore genuinely unpredictable by a language model. 'What I find most striking about this data is...' and 'My reading of the evidence suggests...' are not stylistic indulgences; they are statistical differentiators.

Include Concrete Examples from Your Own Experience

Concrete examples, particularly those drawn from your own field experience, observation, or professional context, are the most powerful perplexity-raising technique available. They introduce proper nouns, specific dates, uncommon vocabulary related to your specific domain, and the kind of contextual detail that a language model generating general content would never produce. An example from your own workplace, research context, or lived experience is, by definition, something no AI model trained on general text could have generated, and it registers in the text as such.

Method 3: Remove AI Vocabulary Fingerprints

Beyond perplexity and burstiness, neural classifier-based detectors have learned the specific words and phrases that appear with disproportionate frequency in AI-generated text relative to human writing. These are not arbitrarily flagged; they are words that appear in the statistical fingerprint of LLM output and have trained the classifier to associate them with machine generation. A single occurrence of any of these words is unlikely to be decisive on its own, but their co-occurrence (multiple AI fingerprint words across a paragraph or section) compounds the classification signal substantially.

| AI Fingerprint Word or Phrase | Why It Triggers Detection | Direct Human Alternative |
| --- | --- | --- |
| Delves into | Extremely overrepresented in AI output relative to all human writing corpora; appears in LLM training data at frequencies far above natural use | Examines, explores, looks at, covers, investigates |
| Leverages / leverage | High-frequency AI business prose marker; nearly absent in natural spoken or informal written English | Uses, applies, draws on, relies on, puts to work |
| Furthermore / Moreover | Appears as connective tissue in AI academic prose at rates dramatically above human writing; signals AI-generated transitions | And, also, [rewrite sentence to make connection implicit] |
| It is worth noting that | Formulaic AI hedge phrase; appears in model output as a soft qualifier before claims the model is uncertain about | Note that, importantly, worth knowing, [state the point directly] |
| In today's rapidly evolving landscape | Clichéd AI throat-clearing opener with no informational value; among the highest-frequency AI-generated openers | [Start with the actual claim or data point instead] |
| Underscores / underscored by | Academic register verb overrepresented in AI output; rarely appears at this frequency in human-written analysis | Shows, confirms, highlights, points to, makes clear |
| Comprehensive / nuanced | Paired adjectives that appear with high frequency in AI text as quality signals; detectors flag their combined appearance | [Be specific instead: say what is comprehensive or nuanced and how] |
| Showcases | AI register word used in place of 'shows' or 'demonstrates'; statistically overrepresented across AI writing tools | Shows, demonstrates, reveals, presents, displays |
| Pivotal / crucial / vital | Overused AI emphasis words that detectors flag due to their disproportionate frequency in LLM output | Important, significant, key, essential; or omit the emphasis entirely |
| It is important to note | Formulaic AI preamble that adds no information; often precedes claims the model wants to emphasise without being direct | [State the important thing directly, without the preamble] |
| In conclusion / To summarize | AI essay-close formulaic marker; high frequency in model training data for structured writing | [Start the final paragraph without a meta-announcement] |
| Robust | Overused AI adjective applied to systems, frameworks, strategies, and approaches at rates that do not reflect human usage | Strong, reliable, thorough, solid; or specify the actual quality |

The goal of this vocabulary audit is not to avoid all of these words in all contexts; some of them are legitimate English words with appropriate uses. The goal is to notice when you have used several of them in proximity and to replace them with more specific, direct alternatives that say the same thing without contributing to the AI classification fingerprint. Read every paragraph and ask: Would I actually say this in conversation? If the answer is no, rewrite it as you would actually say it. That test reliably identifies the formulaic, high-frequency AI phrasing that detectors flag.
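The proximity check can be run mechanically before the read-through. The sketch below searches one paragraph for the phrases from the table above and flags it when two or more appear; the threshold of two and the names `fingerprint_hits` and `needs_review` are assumptions for illustration, and the phrase list is only as current as the table.

```python
import re

# Phrases taken from the fingerprint table in this guide.
FINGERPRINTS = [
    "delves into", "leverages", "leverage", "furthermore", "moreover",
    "it is worth noting that", "in today's rapidly evolving landscape",
    "underscores", "comprehensive", "nuanced", "showcases",
    "pivotal", "crucial", "vital", "it is important to note",
    "in conclusion", "to summarize", "robust",
]


def fingerprint_hits(paragraph):
    """Return the fingerprint phrases found as whole words, in list order."""
    text = paragraph.lower()
    return [p for p in FINGERPRINTS
            if re.search(r"\b" + re.escape(p) + r"\b", text)]


def needs_review(paragraph, threshold=2):
    """Flag a paragraph when several fingerprint phrases co-occur (assumed cutoff)."""
    return len(fingerprint_hits(paragraph)) >= threshold


sample = "Furthermore, the report delves into a robust framework."
print(fingerprint_hits(sample))  # ['delves into', 'furthermore', 'robust']
print(needs_review(sample))      # True
```

A hit is a prompt to reread the sentence, not an instruction to delete the word; a single legitimate use of "robust" in a statistics paper is not the pattern this audit is looking for.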

Method 4: Run a Pre-Submission Multi-Tool Check

Running your work through a single AI detection tool before submission tells you one tool's estimate of one set of statistical properties. Running it through two or three independent tools with different methodologies tells you something more useful: whether the detection signal in your writing is consistent across approaches or tool-specific. Disagreement between tools (one flagging content that another clears) is one of the strongest available signals that a flag may be a false positive rather than a genuine AI signal, because genuinely AI-generated text tends to be flagged consistently across platforms. For that reason, no single tool should be treated as authoritative, and cross-tool comparison is the minimum standard for pre-submission verification in high-stakes contexts.

The Recommended Pre-Submission Check Workflow

Run GPTZero's free tier first. It provides sentence-level flagging, telling you not just the overall score but which specific sentences triggered the flag. This is more actionable than an aggregate score because it tells you exactly where to focus your revision.

Run a second independent tool (Copyleaks, Originality.ai, or Winston AI). If both tools flag the same passages, those passages need revision. If only one flags them, the disagreement itself is evidence of false-positive risk.

Review every flagged sentence at the sentence level. Ask: Is this sentence more uniform in length than the surrounding sentences? Does it use any vocabulary fingerprints from the table above? Does it make a generic claim without specific evidence? These are the revision targets.

Revise flagged passages using Methods 1–3 above, then re-run the check. The goal is not to achieve a specific score; it is to confirm that the passages that concern you have been addressed and that the revised version produces consistent results across both tools.

If your content still flags after revision and you are confident it is genuinely human-written, document this in your pre-submission record. A screenshot of your detection scores, combined with your version history and research notes, provides the evidentiary foundation for any appeal that may be needed.
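The comparison step in this workflow reduces to simple set logic once you have noted which sentences each tool flagged. The sketch below assumes you record the flagged sentence numbers by hand from each tool's report; it calls no detector API, and the names `compare_flags`, `revise`, and `disputed` are hypothetical labels for illustration.

```python
def compare_flags(tool_a, tool_b):
    """Split flagged sentence numbers into agreed and disputed groups.

    tool_a, tool_b: iterables of sentence numbers copied manually
    from two independent detection reports.
    """
    a, b = set(tool_a), set(tool_b)
    return {
        "revise": sorted(a & b),    # flagged by both tools: revise these first
        "disputed": sorted(a ^ b),  # flagged by only one tool: document these
    }


# Hypothetical results noted from two reports on the same draft.
first_tool = [2, 5, 9]
second_tool = [5, 9, 11]

print(compare_flags(first_tool, second_tool))
# {'revise': [5, 9], 'disputed': [2, 11]}
```

Saving this output next to your screenshots gives the pre-submission record a compact summary of where the tools agreed and where they did not.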

Method 5: Build a Writing Process Record Before You Need It

Process documentation is the only method on this list that does not change a single word of your final submission, and it is the most important long-term protection available. A detection score is a statistical estimate with a documented error rate. Your timestamped version history, research notes, and drafts are evidence that no statistical estimate can directly contradict. In any fair institutional review, documented evidence of process defeats a detection percentage. Why version history and writing process documentation are the primary evidence that successfully resolves false AI detection disputes, and how to build a defensible process record, confirms that timestamped drafts carry this weight because they demonstrate the incremental human process that statistical detection cannot directly observe.

Core Documentation Habits to Build Now

Write in Google Docs or Microsoft Word with version history enabled. Google Docs automatically saves every version with timestamps. Go to File → Version History → See Version History to confirm it is active. Never delete or overwrite earlier versions.

Keep all research notes, annotated sources, and outlines. Save highlighted PDFs of sources you cited. Keep screenshots of database searches. Save your source list as it develops. These materials demonstrate that you engaged with the subject matter before writing, the intellectual process that AI generation does not involve. How to structure a writing process record that withstands institutional scrutiny and why different types of evidence address different aspects of a false accusation confirms that research notes and annotated sources are among the most compelling components of a process record because they demonstrate the investigative work that preceded the writing.

Save intermediate drafts with clear timestamps. Even if Google Docs is capturing version history, explicitly naming key versions ('First draft — March 10', 'After revision — March 15', 'Final — March 18') creates a clearer timeline for any reviewer.

Log your writing sessions. A simple text note with the date, time, and what you worked on in each session creates a human-readable timeline of your composition process. This is the element of process documentation most often overlooked and most persuasive to a reviewer: a record showing three separate sessions over ten days is incompatible with AI generation in a single event.
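If a plain text note feels too easy to forget, the session log can be appended with a few lines of code. This is a minimal sketch under stated assumptions: the function name `log_session`, the pipe-separated line format, and the file name in the usage note are all illustrative, and a manually kept note serves the same purpose.

```python
from datetime import datetime
from pathlib import Path


def log_session(log_path, note, now=None):
    """Append one timestamped line describing a writing session.

    `now` defaults to the current local time; pass a datetime to
    record a session after the fact.
    """
    stamp = (now or datetime.now()).strftime("%Y-%m-%d %H:%M")
    line = f"{stamp} | {note}\n"
    with Path(log_path).open("a", encoding="utf-8") as fh:
        fh.write(line)
    return line
```

Calling `log_session("writing_log.txt", "Drafted introduction, 400 words")` at the end of each session builds the timeline one line at a time. The file opens in append mode, so earlier entries are never overwritten.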

Method 6: Design Your Writing Workflow to Produce Varied Text from the Start

Methods 1 through 5 are largely remedial; they address AI-like patterns after the draft exists. Method 6 is preventive: designing the workflow that produces your first draft so that the output naturally avoids the statistical patterns that detectors flag. This is the most sustainable long-term protection because it reduces the remediation work required later.

Freewrite First, Structure Second

The most effective workflow for producing naturally varied writing is to begin with a freewrite: ten to fifteen minutes of unstructured writing about your topic, without stopping to edit, without worrying about organisation, without attention to grammatical precision. The output is never the final draft. What it produces is a reservoir of your own authentic word choices, sentence rhythms, and analytical angles: the raw material of a genuinely human voice. You can then impose structure and organisation on that raw material. Generating structured content from scratch, by contrast, tends to produce the template-following, transition-heavy pattern that detectors flag.

Draft in Sections, Not Documents

A common pattern that produces AI-like consistency is drafting an entire document in a single focused session. Human writing that develops naturally across multiple sessions, days, and angles of a subject shows much more variability in sentence rhythm, vocabulary, and structural approach than content drafted uniformly. If your workflow requires writing quickly, break it into clearly distinct sessions (introduction one day, evidence analysis the next, conclusion separately) so that the natural variation of your thinking across time shows in the statistical properties of the text.

Read Your Draft Aloud Before Finalising

Reading your draft aloud is the most reliable test for rhythm uniformity: the ear catches the monotony that the eye skips over. Any passage where you are reading at exactly the same pace, sentence after sentence, with the same weight and the same length, is a passage that needs rhythm variation. Any passage where you use the same transition phrase twice in close proximity is a vocabulary fingerprint risk. Any passage that sounds like you are explaining something you do not personally have an opinion about is a perplexity risk. The reading-aloud test identifies all three problems simultaneously.

Method 7: Use AI Humanizer Tools as a Final Pass — Not a Replacement for Editing

AI humanizer tools (platforms that mechanically restructure text to increase burstiness and perplexity scores) have a legitimate and useful role in a comprehensive false-positive protection workflow. That role is as a final pass after you have already completed Methods 1 through 6, not as a substitute for them. A humanizer applied to unedited text with uniform sentence rhythms, AI vocabulary fingerprints, and generic claims will raise the statistical scores but will not add the personal voice, specific evidence, and analytical perspective that make writing genuinely human-sounding and resistant to human-reviewer scrutiny. In short, humanizer tools are effective at the statistical layer of detection protection, but the elements they cannot add (personal experience, specific evidence, genuine voice) must come from the writer through the earlier methods in this workflow.

How to Use a Humanizer Tool Effectively

Apply the humanizer after completing your manual editing pass (Methods 1–3). The manual pass ensures the content has a genuine voice and specific evidence, qualities the humanizer cannot provide. The humanizer then addresses any residual statistical patterns that survived the manual edit.

Always review the humanizer output before publishing or submitting. Humanizer tools can introduce awkward phrasing, subtle meaning changes, or grammatical constructions that read well to a statistical model but less well to a human reviewer. Compare the humanised version against your pre-humanizer draft and restore any passage where the change weakened either the meaning or the voice.
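Comparing the two versions by eye is error-prone on long documents, so a sentence-level diff can help. The sketch below uses Python's standard `difflib.SequenceMatcher` to list only the sentences a humanizer changed; the function name `changed_sentences` and the naive splitter are assumptions, and the sample strings are invented.

```python
import difflib
import re


def split_sentences(text):
    """Naive splitter: break after ., ! or ? followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def changed_sentences(before, after):
    """Return (original, rewritten) sentence groups that differ."""
    a, b = split_sentences(before), split_sentences(after)
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            changes.append((a[i1:i2], b[j1:j2]))
    return changes


draft = "The data suggests otherwise. Three problems emerge. We address each in turn."
humanised = "The data suggests otherwise. Three issues come up. We address each in turn."

for original, rewritten in changed_sentences(draft, humanised):
    print("before:", original)
    print("after: ", rewritten)
```

Each pair printed is a passage to reread against your intended meaning before deciding whether to keep the change or restore the original.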

Run your post-humanizer version through your multi-tool check (Method 4) to confirm the scores have shifted in the right direction. If a passage still flags after both manual editing and humanizer processing, it needs a closer look: either the content genuinely overlaps with AI patterns in a way that requires more substantive revision, or the passage is short enough that there is insufficient text for reliable classification.

Do not run multiple humanizer passes on the same content. Repeated humanizer passes tend to produce increasingly awkward text as the tool optimises away from the original meaning. One pass, with human review, is the recommended maximum.

Conclusion

Protecting your writing from false AI detection is not primarily a defensive act; it is a writing quality act. The seven methods above address statistical signals that correlate with AI-generated content precisely because they also correlate with writing that is less varied, less specific, less personal, and less analytical than genuinely engaged human prose. Varying your sentence rhythm produces writing with better pacing. Adding specificity and personal voice produces writing that is more credible and more interesting. Removing AI vocabulary fingerprints produces writing that is more direct and less formulaic. Documenting your process produces writing with a defensible provenance. Running a multi-tool check before submission protects you from avoidable surprises. Designing your workflow for variation from the start reduces remediation work later. And using a humanizer tool as a final pass addresses the residual statistical patterns that manual editing may not fully resolve. Every one of these methods produces better writing. The reduced detection risk is a consequence, not the primary goal, and that framing is both honest and reliable.

Frequently Asked Questions

What is the most effective single change I can make to reduce false positive detection risk?

Sentence rhythm variation, introducing deliberate variation in sentence length across your document, produces the largest improvement in detection scores with the least effort of any available technique. Low burstiness (uniform sentence length) is the most common cause of false positive flags on human writing, and it is the most reliably addressable signal. Apply the no-three-in-a-row rule to your existing draft: any run of three consecutive sentences of similar length should be broken by inserting a shorter or longer sentence, merging two of the sentences, or restructuring one to extend its length. This single pass addresses the most common statistical trigger without requiring any content changes.

Which words most commonly trigger AI detection flags?

The highest-risk vocabulary fingerprints are words that appear with disproportionate frequency in AI output relative to human writing: 'delves into', 'leverages', 'furthermore', 'moreover', 'it is worth noting', 'underscores', 'comprehensive', 'nuanced', 'showcases', 'pivotal', 'crucial', 'robust', and 'in today's rapidly evolving landscape'. These words are not wrong to use individually, but their co-occurrence in proximity compounds the detection signal. Audit every paragraph for multiple fingerprint words and replace them with more direct, specific alternatives. The goal is not to eliminate the words entirely but to reduce their density to levels consistent with natural human usage rather than the disproportionate frequency of LLM output.

Does using Grammarly or spell-check cause AI detection flags?

Grammar tools, including Grammarly, can shift detection scores by correcting idiosyncratic phrasing, reducing grammatical variation, and standardising punctuation, all of which lower perplexity and increase the statistical regularity that AI detectors flag. This does not mean grammar tools should be avoided, but it does mean that if your Grammarly-corrected draft scores higher on AI detection than your pre-correction draft, the correction process is part of the explanation. The practical response is to reintroduce sentence rhythm variation after the grammar pass and to review passages where Grammarly replaced an idiosyncratic but clear construction with a more standard alternative.

How many AI detection tools should I check against before submitting?

At minimum, run your work through two independent tools before any high-stakes submission, ideally tools with different underlying methodologies, such as GPTZero (perplexity and burstiness based, with sentence-level output) and a neural classifier-based tool such as Copyleaks or Winston AI. If both flag the same passages, those passages need revision. If only one flags them, the disagreement itself is evidence of potential false-positive risk that you can document. For the highest-stakes contexts (academic integrity, professional assessment, publication), a third tool provides additional confidence, and the disagreement patterns across all three give you a clearer picture of which signals are consistently present and which are tool-specific.

What should I do if I am falsely flagged despite following all these methods?

Gather your process documentation immediately: version history, research notes, intermediate drafts, writing session logs. Request the specific detection evidence from the institution: the tool used, the version, the specific score, and the sentence-level breakdown if available. Respond in writing, presenting your process documentation as affirmative evidence of authentic authorship and specifically noting the tool's documented false positive rates and the limitations of detection scores as evidence of authorship. Request a human review rather than an automated determination. If the initial response is unsatisfactory, access your institution's formal appeal process and submit all documentation in an organised package. A timestamped, research-grounded writing process record is the strongest available evidence, stronger than any argument about detection methodology, and the resource that most consistently produces fair outcomes in false positive disputes.

This guide reflects research on detection methodologies and tool capabilities as of March 2026. AI detection platforms update their models frequently, and specific vocabulary fingerprints and statistical thresholds may shift as LLM generation and detection technology continue to evolve in parallel.