The world of content moderation has undergone a significant structural shift in the past three years. No longer is it a purely reactive form of review, in which content is assessed after users report it. Instead, it has evolved into a proactive, real-time discipline in which AI classification systems play a significant role in speeding up the process at a scale and speed that no human review team could hope to match. Content moderation has grown into a market worth 11.63 billion USD in 2025, which is set to rise to 23.20 billion USD in 2030 at a 14.75 percent compound annual growth rate. This is a testament to the extent to which content moderation has become embedded in the digital world's infrastructure. Yet the cause of this growth is not the recognition of harmful content on the internet. Rather, it is the convergence of three elements that have made the previous model structurally unsuitable for the job: the rise in user-generated content, the democratization of AI generation tools, and the enforcement of regulatory requirements. AI content moderation trends and strategic priorities for 2026 documents how leading platforms are responding to these three forces by investing in AI-driven detection as a strategic pillar rather than a back-office function, embedding it directly into trust and safety infrastructure, and treating it as a compliance mechanism as much as an editorial tool.

This article explains how real-time AI content detection transforms online moderation practices across five dimensions: the shift from reactive to proactive moderation, the technical capabilities that enable sub-second classification at platform scale, the specific challenge of detecting AI-generated harmful content including synthetic text and deepfakes, the regulatory framework that now mandates systematic detection, and the human-in-the-loop design principles that prevent detection from becoming a fairness liability.

The Scale Problem That Made Reactive Moderation Obsolete

The primary reason why reactive report-based moderation is structurally inadequate is arithmetic. Large social media platforms receive billions of posts, comments, images, and video uploads daily. Assuming a human moderation team of 10,000 moderators, each reviewing a piece of content every 30 seconds for 8 hours a day, the team can review 10 million pieces of content per day. This is a small fraction of the content a large social media platform can generate in just one hour. The content that is never reported by users, which studies have shown is the most content, is simply not reviewed. EU Digital Services Act obligations and what systematic moderation means in practice require platforms with over 45 million monthly users in the EU to offer users tools to easily flag illegal content, to show risk mitigation strategies for systemic risks, and to offer users out-of-court dispute resolution for moderation decisions. These requirements all assume systematic moderation, not reactive review. A platform that only reviews reported content cannot show risk mitigation strategies. The DSA has, in effect, turned the scale problem from a business issue to a compliance requirement.

From Reactive to Proactive: What Real-Time Detection Actually Changes

The move away from reactive and towards more proactive moderation is more than just a speed concern. It represents a change in the logic of moderation itself, from a response to a reported event to a judgment on all content before or just after publication. Proactive moderation aims to prevent the spread of content before it gains significant traction, while reactive moderation aims to stop it after the damage has been done. For content categories where speed is most critical, such as incitement to imminent violence, child sexual abuse imagery, non-consensual intimate imagery, and crisis misinformation, the difference in the scale of exposure for the difference in approaches can be measured in the thousands or millions. how real-time AI detection is reshaping content moderation strategy in 2026 documents three specific ways in which real-time detection impacts moderation processes: LLM-based classifiers that can understand meaning instead of relying on keywords to filter out banned words, allowing for the identification of sarcasm, hidden meanings, and implied threats; sub-50ms classification latency for text content, preventing harmful content from reaching users before it can be reviewed; and smart triage to only present human moderators with the most concerning content identified as problematic, reducing moderator fatigue and time to make decisions based on prioritization.

Dimension | Reactive Moderation | Real-Time AI Detection |

Trigger | User report or scheduled batch review | Automatic classification at point of submission or publication |

Latency | Hours to days between harm occurring and content removal | Sub-50 milliseconds for text; 1-5 seconds for image and video |

Coverage | Only content that is reported; systematic under-coverage of unreported violations | All submitted content above configured thresholds, regardless of whether it is reported |

Accuracy | High for reported content; human reviewers have context | Variable; 85-99% on unmanipulated AI output; drops significantly on humanized or multimodal content |

Scale | Limited by human reviewer headcount; does not scale cost-effectively with content volume | Scales horizontally with content volume; cost per decision decreases with volume |

Explainability | Reviewer can document reasoning in natural language | Varies by tool; LLM-based classifiers can generate natural language explanations; statistical models cannot |

Regulatory compliance | Difficult to demonstrate consistent application under DSA and EU AI Act | Creates auditable logs of decision criteria; supports mandatory DSA transparency reporting |

False positive risk | Low; human reviewers apply contextual judgment | Elevated; systematic false positives on specific content categories; requires human review layer for consequential decisions |

Why LLM-Based Classification Replaces Keyword Filtering

Keyword filtering, the first form of automated content moderation, works by checking the submitted content against a list of forbidden words and phrases. It's fast, cheap, and deterministic. However, it's also easily subverted by any user willing to take the time to think about it. Anyone with a modicum of understanding of how keyword filtering works can bypass it by using different spellings, different characters, or by putting the forbidden content in a larger context. Most importantly, keyword filtering doesn't actually understand the content's meaning. A threat presented in a fictional or sarcastic context looks the same as a threat presented seriously. A coded reference to a forbidden activity can look like an innocent conversation. LLM-based classifiers actually understand the context, tone, and meaning of the content, allowing them to identify policy violations that a word-based system cannot. The EU AI Act compliance for AI systems used in content moderation contexts states that the EU’s Artificial Intelligence Act categorizes content moderation AI as high-risk and therefore requires conformity, technical documentation, and accuracy and robustness testing. This situation creates a context in which more advanced classification approaches will be more likely to be adopted, as they can provide auditable reasoning and accuracy measures that LLM-based classifiers can deliver more reliably than keyword filtering approaches, which provide neither.

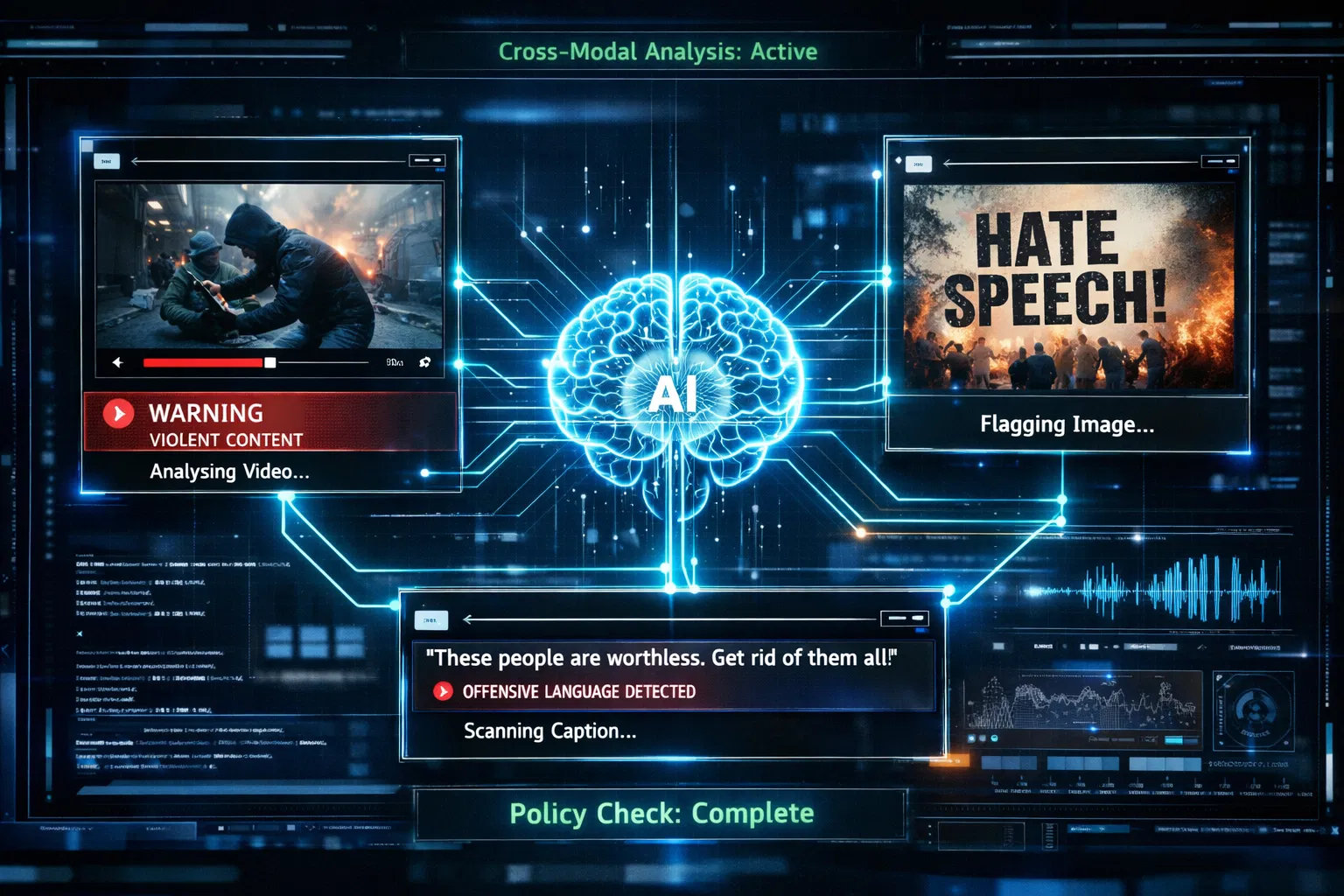

Multimodal Detection: Why Text Alone Is No Longer Sufficient

The biggest technical advancement in content moderation since 2023 has been the development of multimodal detection systems, which analyze content in text, images, audio, and video simultaneously rather than individually. This is because user-generated content is taking hybrid forms: text overlaid on images, memes combining images and text, live content where the offending content is audio rather than video, and content where the text itself is not offensive but the image accompanying it is. A text-based classifier will fail to detect all these cases, while an image-based classifier will fail to detect the text-based cases. automated content moderation trends and multimodal detection in 2025 documents that multimodal models consistently outperform text-only methods by combining textual, visual, and auditory signals simultaneously, and that organisations implementing cross-modal analysis achieve detection of policy violations that span multiple formats, including hate speech in text overlaid on benign images, violent imagery with misleading audio, and coordinated disinformation campaigns that use visual and textual elements in combination. Cloud-based deployment of multimodal systems captures 70% market share, with consumption-based pricing eliminating upfront infrastructure investment and enabling scaling that matches resource allocation to actual content volume.

Deepfake and Synthetic Media Detection: The Most Difficult Moderation Problem

Detecting deepfakes and synthetic media will be one of the toughest challenges for real-time content moderation by 2026. To put things in perspective, deepfakes jumped from about 500,000 online in 2023 to almost 8 million by 2025—a shocking increase of 1,500% in just two years. They're calling voice cloning indistinguishable now. Nowadays, synthetic voices distributed through typical channels sound nearly identical to genuine recordings for most people. Plus, advancements in video generation are leading to content that showcases fluid motion and consistent identities across frames. What's more, these models now feature realistic facial expressions, removing the visual artifacts that previously served as dependable forensic indicators. EU Code of Practice on AI-generated content and deepfake labeling obligations documents the response of the European Commission to these challenges: “the draft Code of Practice on Transparency of AI Generated Content agreed in December 2025 proposes common standards for user identification and identification of AI-generated and AI-manipulated content through labels, watermarks, and metadata. This Code will cover lawful deepfakes, while illegal deepfakes, such as non-consensual intimate images, defamation, and terrorist content, will need to be detected and removed as before under DSA obligations. The labeling and watermarking approach is the long-term solution to the detection challenge. The challenge in the short term is that most existing deepfake content will not have been labeled in the first place.

The Deepfake Detection Arms Race: Why Platforms Cannot Keep Up Alone

The problem with deepfake detection is akin to the cybersecurity arms race, in which detection and generation capabilities improve side by side, with neither able to gain an upper hand. The most advanced automated systems for detecting deepfakes see accuracy drop by 45-50% when faced with real-world deepfakes rather than simulated environments. The ability of humans to identify deepfakes is at 55-60%, barely better than chance. The sheer volume and quality of such content create a gap that no single moderation system can address. future content moderation trends and the multimodal detection challenge for 2026 documents that for effective deepfake detection in 2026, there is a need for multimodal forensic analysis to attain accuracy rates between 94% and 96%, deepfake detection models specifically developed for video and audio content produced using the latest generation of video and audio synthesis tools, watermarking and tracing for content produced by compliant AI providers, and human review processes for suspect content requiring contextual analysis. The article validates that the content moderation services market size is USD 12.48 billion in 2025 and will grow to USD 42.36 billion in 2035, driven by the need to invest in these services.

Why Detection Alone Cannot Solve the Synthetic Media Problem

There is a mathematical limit to the problem of deepfake detection that cannot be overcome with investments in the platform. As the capabilities of AI video generation tools increase, the statistical difference between synthetic and real content decreases. With an advanced enough content generation tool, the cues used in the detection process will be completely eliminated. The current understanding of the problem among top researchers in synthetic media is that the line of defense will not be based on human intuition or understanding, but on the infrastructure level, with the implementation of secure provenance using cryptographically signed media or technical tools that support the C2PA specification. Deepfake evolution in 2025 and what moderation faces in 2026. This is confirmed by the trajectory: video generation models in 2025 will reliably produce stable, coherent images of faces without flickering, warping, or structural distortions around eyes and jawlines, which were previously considered definitive forensic evidence of deepfakes. Interactive real-time deepfakes, enabling AI performers to engage with humans in real time, mark the next level of capabilities, making frame-by-frame forensic examination ineffective. The moderation strategies for platforms, based solely on detecting at distribution, will need to factor in verified provenance for AI-generated content as the primary defense.

Regulatory Obligations: How the DSA and EU AI Act Are Reshaping Moderation

Content moderation is no longer simply a matter of platform policy. Indeed, the EU’s Digital Service Act, which has been fully in force since 2024 for very large online platforms, sets specific requirements that effectively require the use of AI-assisted content moderation for any platform with over 45 million monthly users in the EU. These requirements include performing systemic risk assessments annually, implementing risk mitigation measures for systemic risks, including disinformation and illegal content, providing data access for independent research scrutiny, and maintaining a DSA Transparency Database for content moderation decisions. In the first half of 2025, over 1,800 content disputes were handled by out-of-court settlement bodies, overturning platform decisions in 52% of closed cases, confirming the effectiveness of the appeals structure the DSA requires, which overrides platform decisions on content moderation. What AI deception and detection revealed about platform moderation gaps in 2025 documents the failures that have prompted the current regulatory environment: deepfake-based electoral interference in multiple countries, the proliferation of non-consensual intimate content prior to the implementation of countermeasures, and coordinated disinformation campaigns that leveraged the gap between content creation/publication and human evaluation/response. The documented failures serve as the basis for the current regulatory environment.

DSA Transparency Requirements and What They Mean for Detection Architecture

The DSA's transparency requirements have important architectural implications for content detection systems. Platforms must provide statements of reasons for moderation decisions to the DSA's transparency database. This allows users to receive specific reasons why content was removed, demoted, or restricted. This is not compatible with the architecture of black-box detectors, which provide a decision without any reasons. This means the detection layer must provide explanations for decisions that are sufficiently specific to satisfy regulatory requirements. LLM-based classifiers provide explanations in the form of natural language reasoning for their decisions, something that was not previously possible with statistical models. Two years of DSA enforcement and systematic moderation have produced reports indicating that over 50 million content moderation decisions have been reversed, with 52% of such cases reviewed by out-of-court bodies. The rate at which such reversals are occurring indicates an overly mechanical approach to moderation based solely on detection results, without sufficient review and explanatory documentation as required by the DSA. The implication for platform design is to integrate review as an essential component of the process.

Human-in-the-Loop Design: Why Automation Without Oversight Creates Liability

The key design consideration for the application of real-time AI content detection in the regulatory setting was the need to ensure that the results of the automated detection process were fed into the human review process rather than the enforcement process for anything beyond the most clear-cut policy violations. This is not simply a matter of “fairness,” although it may be presented as such. Rather, it is a matter of regulatory obligation under the DSA's transparency and appeals provisions. Moreover, it reflects the known limitations of accuracy for all detection systems. AI detection can be applied at scale to detect content and mark it for review. Human moderators then apply contextual judgment to determine whether the detected content was a policy violation or a false positive. The appeals process then acts as a check on both. An academic analysis of generative AI moderation and why platform policies need general enforcement frameworks proposes that the issue of AI-generated content can be addressed by ensuring that general policies are enforced rather than creating separate policies for AI content, and that the threat posed by AI-generated content is not inherently different from that posed by regular harmful content. This is significant for platform design because it implies that platforms that treat AI-generated content as a separate entity with distinct policies are more complex than those that incorporate it into policies based on harm.

Intelligent Triage: How Real-Time Detection Improves Human Reviewer Efficiency

One of the most important practical advantages of real-time AI detection is not so much that we don't need human reviewers, but rather that we can make them far more effective at what they do. Currently, human reviewers must spend time on an undifferentiated queue of flagged content, devoting the same amount of time to clear violations as to questionable ones. With AI triage, the AI can score all content, automatically act on the highest-confidence violations for well-defined categories, automatically pass questionable content with an AI-generated summary and confidence information directly to the reviewer, and automatically pass low-confidence information on for secondary review only after meeting specific thresholds. how AI content moderation works in real time, including classification types and workflow documents, and the benefits of such intelligent triage, including reduced reviewer fatigue, faster decision-making, and improved outcomes in the gray areas where human judgment matters most. The use of AI-generated summaries of the case, which aggregate the signals relevant to the decision, the user's content history, and the section of the policy applicable to the decision, helps reviewers make more consistent decisions faster. The above approach of automated handling of clear violations, intelligent triage of gray areas, and the use of AI to generate a case summary represents best practice in the design of human-in-the-loop moderation systems.

False Positive Governance: The Fairness Obligation That Platforms Cannot Ignore

False positives occur with real-time AI systems, as with any system for detecting documents. The populations affected by false positives in content moderation systems are not exactly the same as those affected by false positives in text authorship systems, but the underlying problem is the same. Systemic overperformance for particular categories of content, languages, cultures, and user populations. Non-English content systematically underperforms compared to English content. Satire, parody, and art that intentionally mimics the surface features of banned content are overperformed compared to actual policy violations. News journalism that references banned content to discuss it is outperforming the banned content it references. deepfake detection limits and what platform moderation faces in 2026 and beyond The article confirms the extent of the false positive issue in AI-based content moderation: the detection capacities are already struggling with the amount and quality of synthetic media, and a system that does not recognize the difference between news footage and deepfakes is exactly the kind of system that would cause a false positive problem for its credibility. A platform that incorrectly removes real documentation of actual events because its detection system incorrectly classified it as synthetic does as much damage as one that fails to remove actual deepfakes.

Appeals Infrastructure and Moderation Accountability

Every system of real-time content moderation that has false positives must have an appeals system in place. The DSA makes this a legal requirement for very large platforms. Any defensible platform governance model makes this an ethical requirement. The appeals system must meet the following minimum criteria: it must be accessible to users without requiring technical skill, it must involve documented review of the decision by a person who has the authority to reverse the decision, and it must provide a specific explanation of the outcome rather than a generic form response. Automated content moderation tools and effective moderation workflows confirm that the best moderation systems in 2026 integrate AI detection with transparent, accessible appeals processes to turn false-positive disputes into signals for improving the detection model. False positive disputes that are successfully appealed and documented provide a training signal to the detection system. They demonstrate to the detection system where false-positive classifications are systematically incorrect, allowing the system to be improved without involving a human reviewer for every piece of content. This process represents one of the least used features of the moderation infrastructure in those platforms that have yet to integrate the appeals process into the development of the detection system.

The Future of Real-Time AI Detection in Online Moderation

The trajectory of real-time content detection for online content moderation appears to be heading in three directions, which are ultimately converging. First, content-provenance-based approaches, which leverage cryptographic signatures and C2PA-compliant metadata to verify content creation by AI and the tool used for creation, will be the dominant solution for detecting synthetic media as media creation quality improves beyond the capabilities of forensic-based approaches. Second, multimodal classifiers will eventually become ubiquitous for any platform seeking to operate at social media scale, eventually replacing the patchwork of single-modality-based solutions currently used by most platforms. Third, the DSA, the EU AI Act, and various proposed national legislative measures will continue to drive the push for auditable, transparent content-detection approaches that can articulate the basis for their determinations to regulators, researchers, and even content creators themselves. AI text humanizer to protect legitimate writing from false detection flags The writer-facing counterpart of these moderation developments is thus the need for accurate writer-facing tools, just as there is a need for accurate platform-facing detection of human versus synthetic content, and for accurate false positive protection for writers, so that human authentic content is not incorrectly labeled as being generated by AI by detection systems that have high false positive rates for certain styles of writing. The development of both accurate platform-facing detection and accurate writer-facing false positive protection thus stems from the same need for accurate and fair detection systems.

False Positive Reduction as a Platform Trust and Safety Advantage

Given the current state of the world in which DSA enforcement has already resulted in 50 million reversed moderation decisions and in which researchers have demonstrated the existence of false positive rates for non-English speakers, underrepresented communities, and satirical content, the reduction of false positive rates has become an important competitive and reputational factor for online platforms. Those platforms that can prove their lower false positive rates, faster appeals handling times, and more accurate detection threshold calibration across diverse content and languages are more likely to meet the demands of the regulatory environment, attract users who were unfairly moderated on other platforms, and win the trust of advertisers, which drives platform revenue. false positives in AI detection and how systematic bias affects different populations documents the false-positive problem from a detection-tool perspective, confirming that systematic miscalibration across populations is not random error but a structural property of detection systems trained on unrepresentative data. Platforms that invest in representative training data, multilingual classifier performance, and culturally sensitive policy application will systematically outperform those that apply one-size-fits-all detection thresholds across all content types and communities. In a regulatory environment increasingly focused on accountability and transparency, this investment is both ethically sound and commercially rational.

Conclusion

Real-time AI content detection has radically changed online moderation, moving from merely reacting to user reports to a proactive, structured discipline that can be scaled across an entire platform. This metamorphosis has been possible through the combination of three major factors: Firstly, the amount of user-generated content is so huge that manual moderation has become practically impossible. Secondly, the widespread availability of AI generation tools has enabled the creation of harmful synthetic content at scales that only automated detection can effectively address. Thirdly, the rules introduced by the EU Digital Services Act and AI Act require systematic, auditable moderation rather than best-effort manual review. But this new way of moderating content is still a work in progress. Deepfake detection is the slowest to catch up with the ever-improving generation capabilities. Also, the false-positive rates remain quite high for non-English content, satire, and minority-language communities. Many platforms' detection architectures still lack a human-in-the-loop design feature. Your guess is as good as mine: the ones who will keep user trust, meet regulatory requirements, and have the confidence of advertisers in 2026 and the years after are those who see real-time detection as a strategic investment rather than a work that comes at a cost, and that they will also create the necessary infrastructure for appeals, transparency that explains things, as well as governance of false positives that will turn detection from just a strict enforcement tool into a responsible moderation capability.

Frequently Asked Questions

How fast does real-time AI content detection work?

For content such as text, the current state of the art in real-time classification systems can achieve latency below 50 milliseconds. While not as fast as the real-time requirement might suggest, it is still rapid enough to be considered "pre-delivery," meaning it can be done before the content is delivered to other users in the live chat or comments system. The latency required for image classification is around one to five seconds, depending on the resolution of the image and the complexity of the model. Video classification is done at the frame level, with varying latency depending on whether it is applied to uploaded content (batch) or live streams (real-time). The key point is that it is not actually "pre-delivery" in the way the text uses the term; it is more of a "post-publication" feature that applies auto-restriction to the content until it can be reviewed by a human.

Can AI content moderation replace human moderators entirely?

No, and the regulatory framework actually requires it. The EU Digital Services Act requires user appeal processes, explanations for moderation actions, and out-of-court dispute resolution. These are all processes that require human input at the review phase. However, beyond regulatory requirements, the accuracy limitations of current systems mean that attempting to enforce anything beyond clear violations is both technically and ethically questionable. The role of AI detection in 2026 is to support intelligent triage: clear violations are handled automatically, borderline cases are passed to human reviewers with AI-generated summaries and probability scores, and an analytical infrastructure to enable human moderators to make faster, more consistent decisions at scale.

What does the EU Digital Services Act require from AI content moderation systems?

The DSA also requires very large online platforms with more than 45 million monthly users in the EU to conduct annual systemic risk assessments to determine their contribution to the dissemination of illegal content and to systemic risks. Online platforms are required to implement mitigation measures for identified systemic risks. This will mostly involve AI-assisted systematic identification of policy-violating content. Online platforms are required to make available a statement of reasons for moderation decisions to the DSA Transparency Database, make appeals processes easily accessible to users, and make data accessible to approved researchers. The EU AI Act requires conformity assessments for AI systems used in high-risk contexts, which may include content moderation depending on the context. AI systems must have technical documentation detailing their decision-making criteria.

What is the biggest unsolved problem in real-time content moderation?

Deepfake and synthetic media detection is the most technically challenging unsolved problem. The current state of the art in video synthesis in 2025 is such that the generated content completely removes the visual artifacts upon which forensic detection was previously based. Similarly, the state of the art in voice cloning means it is no longer possible to distinguish between synthetic and real voices in the normal course of distribution. The gap in detection method accuracy is 45-50 percentage points between the real world and the lab. The ultimate solution of using provenance-based detection with cryptography-based signatures and C2PA-compliant metadata is not feasible in the short term, as it requires adopting AI content generators, which is not possible with open-source tools or non-compliant content providers.

How do false positives in content moderation affect platform users?

False positives in content moderation, in which content is misclassified as violating policy, can impact users in a range of ways, from mildly inconvenient to severely problematic. At the lower end of the scale, the moderation of a comment into the "awaiting human review" queue might be mildly inconvenient. At the more problematic end of the scale, the systematic misclassification of content associated with certain types of community, language, or political perspective can silence lawful content and create a chilling effect that skews the platforms' overall discourse. The 52% rate of overturned decisions in out-of-court appeals in the first half of 2025 suggests that a significant percentage of decisions were misclassified in the first place. As such, reducing false positives is not simply a technical optimization; it is a governance requirement that determines the extent to which the moderation infrastructure supports or undermines the very purpose of the platforms it serves.

The information in this article represents the state of the capabilities of detecting AI content as of March 2026. The regulatory environment and the capabilities of AI generation and detection technologies change rapidly. For information on specific legal requirements, consult a legal professional. This article does not provide legal advice.