AI content detection tools measure specific statistical properties of text that consistently differentiate AI-generated writing from human writing. Those same properties, when read as feedback rather than judgment, are among the most practical diagnostics of writing style available. A detection tool that flags your writing as AI-like is telling you something measurable about how your prose behaves statistically. That information is actionable. It identifies where your writing is too predictable, where your sentences are too uniform in length and structure, where your vocabulary is too generic, and where your prose lacks the variation and concrete specificity that characterize strong human writing. Writers who learn to read these signals improve their craft regardless of whether detection is their primary concern.

Run your draft through BestHumanize to get your detection score and see exactly which passages are flagged, then use those results as a curated list of the passages most in need of revision. The detection feedback and the writing quality feedback point in the same direction, because the properties that detectors measure, predictability and structural uniformity, are also the properties that make writing forgettable rather than distinctive. Guides to perplexity and burstiness in writing, and to what they reveal about prose style, explain the practical writing implications of these two detection metrics and treat both as properties of writing quality in their own right, not just artifacts of AI detection methodology.

What Perplexity Tells You About Your Word Choices

Perplexity measures how predictable your word choices are at each position in a sentence. A language model evaluates each word by asking: given everything that preceded it, how likely was this word? If the next word is almost always what a statistical model would predict, your writing has low perplexity. If your choices frequently diverge from the most probable option, your writing has high perplexity. Low perplexity is not the same as bad writing. Short sentences and simple vocabulary can be excellent prose. But writing that is uniformly low in perplexity across an entire document is characteristically AI-like, because AI generators tend to favor highly probable next words at every step. Explanations of burstiness and perplexity aimed at writers and students generally agree that lower perplexity makes text more readable, while higher perplexity signals creativity and distinctiveness. The optimal balance depends on context. When a detection tool flags your writing for low perplexity, it is identifying passages where your word choices are so predictable that a language model could have generated them from the sentence context alone. Those are your most generic passages.
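
To make the definition concrete, here is a minimal Python sketch of the standard perplexity calculation: the exponential of the average negative log-probability per token. The two probability lists are invented for illustration. A real detector obtains these probabilities from its own language model, and the exact scoring any given tool applies may differ from this textbook form.

```python
import math

def perplexity(token_probs):
    """Perplexity of a sequence, given the probability a model assigned to each token."""
    if not token_probs:
        raise ValueError("need at least one token probability")
    # Average negative log-likelihood per token, then exponentiate.
    avg_nll = -sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(avg_nll)

# Hypothetical per-token probabilities, invented for illustration only.
predictable = [0.9, 0.8, 0.85, 0.9]   # every word was close to the model's top guess
surprising = [0.9, 0.05, 0.6, 0.02]   # two words the model did not expect

print(perplexity(predictable) < perplexity(surprising))  # True
```

A sequence in which every token had probability 0.5 has a perplexity of exactly 2, which is one way to read the number: the model was, on average, as uncertain as if it were choosing between two equally likely words.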

Read the BestHumanize blog for detailed guides on raising perplexity and expanding your writing's vocabulary. The blog covers each of the following perplexity-raising techniques with examples: replacing generic categories with specific instances; using field-specific vocabulary where you genuinely know it; stating your actual opinion on contested questions rather than hedging; beginning sentences with context-setting clauses rather than predictable subject-verb openings; and replacing abstract nouns with concrete verbs and real numbers. These five changes consistently raise perplexity while improving writing quality, because they replace statistically generic choices with choices that reflect your actual knowledge and perspective.

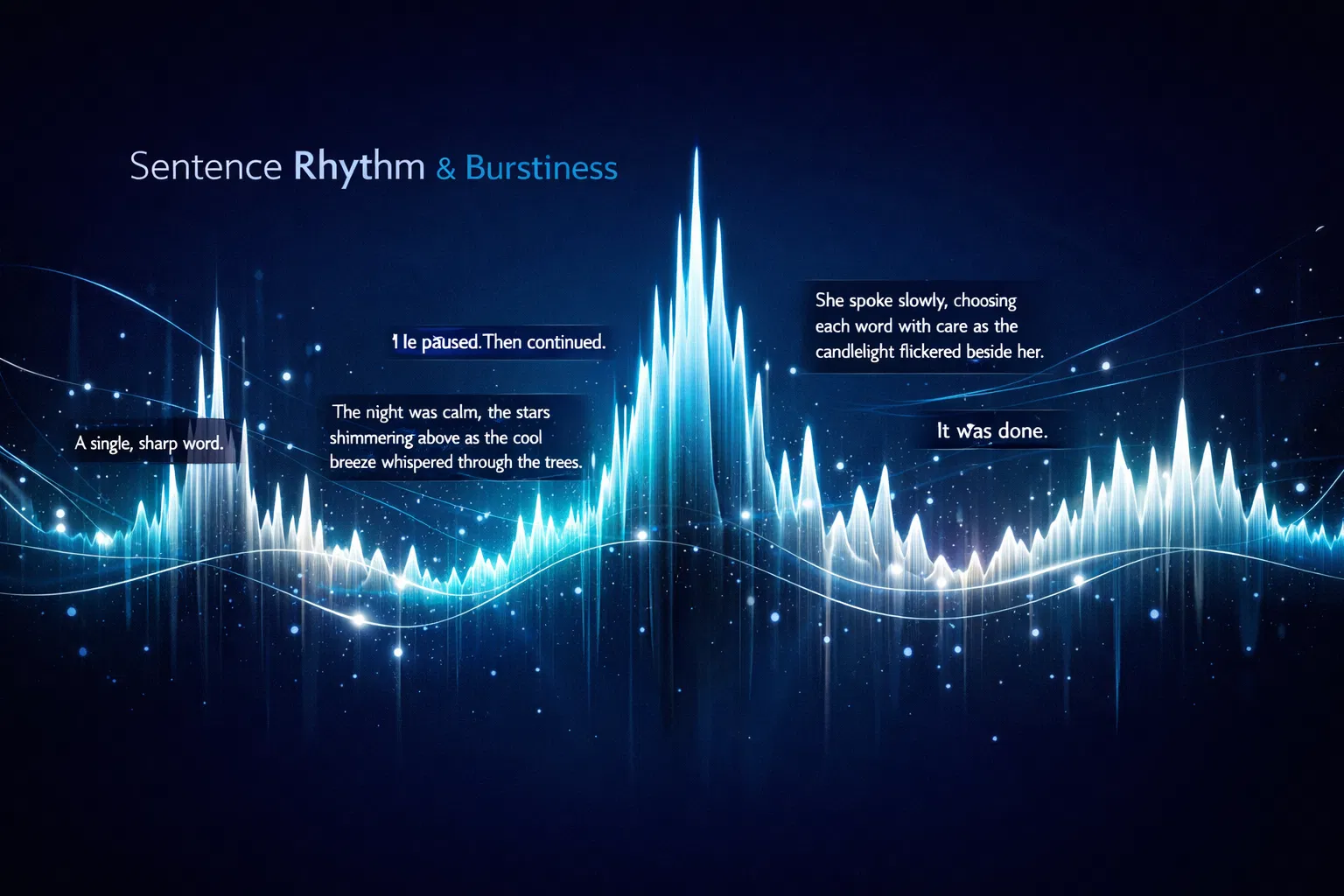

What Burstiness Tells You About Your Sentence Rhythm

Burstiness measures how much sentence length and structural complexity vary across a document. High burstiness means your sentences alternate substantially between short and long, between simple and compound-complex, between declarative and rhetorical. Low burstiness means your sentences are similar in length and structure, producing a smooth, consistent rhythm characteristic of AI-generated text. Language models generate each sentence using the same optimization process, which tends to produce sentences of similar statistical complexity and length. Human writers naturally vary their rhythm, following the emotional and logical arc of their argument. A short sentence creates emphasis. A long sentence creates elaboration. The alternation between them creates rhythm. Explanations of how detection tools measure perplexity and burstiness at the sentence level describe burstiness as the change in perplexity across the document. A run of locally uniform sentences inside an otherwise varied document therefore produces a distinctive signature, which detection models use to identify AI-generated sections within human-written documents.

Burstiness is a revision skill, not a drafting skill. The most practical revision audit: count the words in each sentence of a paragraph and write the number beside each. If every sentence is between fifteen and twenty-five words, the paragraph has low burstiness. Your revision target is a range of five to fifty words per sentence within the same paragraph, with the shortest sentences at moments of emphasis and the longest at moments of elaboration. Check BestHumanize pricing for the plan that fits your pre-submission detection and revision workflow. Use the detection tool at the draft stage, before you finalize your writing, so you get feedback while revision is still practical rather than after the document is already submitted.
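
The word-count audit described above is easy to automate. The following is a rough Python sketch, not a detector: it splits sentences naively on terminal punctuation (so abbreviations such as "e.g." will be miscounted), and the function names `sentence_lengths` and `audit` are made up for this example. The 15 and 25 word defaults come from the rule of thumb in this section.

```python
import re
import statistics

def sentence_lengths(paragraph):
    """Word count per sentence, using a naive split after '.', '!' or '?'."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", paragraph.strip()) if s]
    return [len(s.split()) for s in sentences]

def audit(paragraph, low=15, high=25):
    """Summarize sentence-length variation in one paragraph."""
    lengths = sentence_lengths(paragraph)
    if not lengths:
        raise ValueError("paragraph contains no sentences")
    return {
        "lengths": lengths,
        "shortest": min(lengths),
        "longest": max(lengths),
        # Population standard deviation of sentence length, a simple spread measure.
        "stdev": statistics.pstdev(lengths),
        # Rule of thumb from this section: every sentence falls in the 15-25 word band.
        "low_burstiness": all(low <= n <= high for n in lengths),
    }

draft = (
    "Short sentences hit hard. A long sentence, by contrast, gives the writer "
    "room to qualify a claim, add an example, and carry the reader through a "
    "full line of reasoning before stopping. See?"
)
print(audit(draft))
```

Run it on one paragraph at a time rather than the whole document, since the goal is variation within each paragraph.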

The Six Writing Patterns That AI Detectors Consistently Flag

AI content detection tools are calibrated to identify patterns more consistent with AI generation than with human writing. Each of these patterns, when present in genuinely human-written text, also reduces the quality and distinctiveness of the writing independently of any AI involvement. The table below lists the six patterns that most commonly drive elevated detection scores, explains what each reveals, and provides specific revision guidance.

| Detection Signal | What It Reveals | How to Fix It |
| --- | --- | --- |
| Low perplexity | Word choices are statistically predictable. Common in polished, formulaic prose and grammar-corrected drafts. | Replace generic terms with specific ones. Use field-specific vocabulary where you genuinely know it. Begin some sentences with context-setting clauses rather than subject-verb patterns. |
| Low burstiness | Sentences are similar in length and structure throughout. The single strongest predictor of AI authorship in current models. | Follow a compound sentence with a three-word punch. Let rhythm reflect meaning. Vary from five-word sentences to forty-word sentences within the same paragraph. |
| Repetitive phrasing | The same transitions, openers, or structural formulas recur across paragraphs, a pattern of both AI and formulaic writing. | Read your draft aloud paragraph by paragraph starting from the end. Recurring patterns become audible. Replace formulaic openers with context-specific ones that reflect your actual reasoning. |
| Uniform tone | Identical formality and emotional register throughout. Human writing naturally varies between expository, emphatic, and reflective passages. | Identify your strongest claim and let the language at that point reflect genuine investment. One or two passages of different register add tonal variation that is both more engaging and less detectable. |
| Generic vocabulary | Choices tend toward abstract rather than concrete. AI generates professional-sounding text that avoids specific terminology and concrete detail. | Every claim that can be specific should be specific. 'Many researchers' becomes a named study. 'Significant results' becomes the actual figure. Specificity is the strongest signal of genuine knowledge. |
| Coordinated sentence openings | Multiple consecutive sentences begin with the same grammatical structure. This regularity persists even after synonym substitution. | Vary the grammatical position of information. Start one sentence with a subordinate clause, the next with the predicate, the next with an adverb. Structural variety is invisible when done well. |
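
Two of the signals above, repetitive phrasing and coordinated sentence openings, can be roughly screened by counting how sentences begin. The sketch below is a crude proxy under a stated assumption: it compares only the first word or words of each sentence, not grammatical structure, so it will miss sentences that share a structure but start with different words. The function names are hypothetical.

```python
import re
from collections import Counter

def opener_counts(text, n_words=1):
    """Count how often each sentence opener (first n_words, lowercased) appears."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    openers = [" ".join(s.lower().split()[:n_words]) for s in sentences]
    return Counter(openers)

def repeated_openers(text, n_words=1, min_count=2):
    """Return only the openers that occur at least min_count times."""
    counts = opener_counts(text, n_words)
    return {opener: c for opener, c in counts.items() if c >= min_count}

sample = (
    "The tool flags it. The score rises. "
    "However, rhythm matters. The fix is simple."
)
print(repeated_openers(sample))  # {'the': 3}
```

Raising `n_words` to 2 or 3 surfaces recurring transitions such as "in addition" or "it is important", which is closer to what reading the draft aloud catches.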

The most commonly overlooked pattern is uniform tone: maintaining identical formality and emotional register throughout the document. Most writers focus on word choice and sentence length when thinking about detection patterns. But a document that is identically measured and equidistant from its subject across all sections reads as tonally flat and statistically AI-like. Allowing one or two passages to reflect genuine urgency, conviction, or uncertainty adds tonal variation that is both more engaging and less detectable. The BestHumanize FAQ on how detection scores work, and on what each flagged passage tells you about your style, explains each of these six signals in plain terms and gives you a practical test for each one that you can apply to any draft before submission.

Why Genuine Human Writing Sometimes Scores High on AI Detectors

Elevated detection scores do not necessarily indicate AI involvement. Specific human writing patterns produce the same low-perplexity, low-burstiness statistical profile as AI-generated text, and detection tools cannot distinguish between the two based solely on statistical properties. The most common patterns that cause falsely elevated scores are overuse of grammar tools, formal academic structure, highly edited professional prose, and subject-matter domains with standardized vocabulary. The main reasons AI detectors mistake human writing for AI, and what each pattern means, are documented in detail elsewhere. Grammar correction tools systematically reduce perplexity by replacing unusual word choices with more common alternatives and by fixing sentence structure to conform to grammatical conventions. Formal academic writing uses constrained vocabulary and standardized transitions that are statistically similar to AI output. Highly edited prose in any genre tends toward regularity because the editorial process removes idiosyncrasies that produce high perplexity and burstiness.

Learn about BestHumanize and how it is designed to help genuine human writers protect their original work from false detection flags. The tool is built not just as a humanizer but also as a diagnostic that shows writers which passages exhibit AI-like statistical properties, so they can focus revision energy where it matters most. Writers who use it before submission discover false positive risks early, when they still have time to revise, rather than after a flag has become a dispute.

ESL Writers and the False Positive Problem

ESL writers face a documented structural disadvantage in AI detection. Second-language writing tends to have lower lexical diversity and more predictable syntactic patterns than fluent native English writing, not because ESL writers use AI, but because second-language writing draws on a more constrained range of vocabulary and grammar patterns. Detection tools calibrated on native-speaker writing cannot distinguish between low perplexity produced by AI generation and low perplexity produced by second-language writing processes. A Stanford HAI study found that AI detectors misclassified ESL essays at high rates, with 61.3% of TOEFL essays by non-native English speakers misclassified as AI-generated, far higher than the rate for native-speaker essays. For ESL writers whose genuine work is being flagged, the most effective interventions are deliberately expanding vocabulary range by incorporating domain-specific terminology where accurate, introducing sentence-length variation at the revision stage, and including first-person perspective and personal professional experience where the content allows.
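
Lexical diversity, mentioned above, can be approximated with a type-token ratio: the number of distinct words divided by the total number of words. This is a minimal sketch of that one simple measure, offered as an assumption about how a writer might self-check. It is not how any particular detector scores text, and the ratio falls as texts get longer, so only compare passages of similar length.

```python
import re

def type_token_ratio(text):
    """Distinct words divided by total words, case-insensitive."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        raise ValueError("text contains no words")
    return len(set(words)) / len(words)

# Five words, three of them distinct ("the", "cat", "saw").
print(type_token_ratio("the cat saw the cat"))  # 0.6
```

Comparing the ratio for a paragraph before and after a vocabulary-focused revision shows whether the revision actually widened the range of words used.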

The Pre-Submission Detection Workflow as a Writing Improvement Loop

The most productive use of AI detection tools for writing improvement is as a structured pre-submission feedback loop rather than a one-time clearance check. Writers who run their draft through a detection tool early in the revision process, before their final editing pass, receive systematic feedback on their stylistic weaknesses while there is still editorial energy to address them. Writers who run the check at the submission stage receive the same feedback but without the opportunity to act on it. Building detection into the revision workflow as a diagnostic step, not a final clearance step, transforms it from a source of anxiety into a source of actionable style improvement. Guidance on what AI detection percentages mean, and on how to interpret score thresholds for writing decisions, provides useful framing: detection scores are probability estimates, not certainties. A score that drops after a revision pass targeting specific patterns confirms that the revision changed the statistical properties of the text in a meaningful way. If the score does not drop, the revision addressed surface features, such as synonym replacement, rather than the underlying statistical properties, such as sentence-length distribution or clause-level structure.

A practical four-step revision loop: run the draft through a sentence-level detection tool, collect the highlighted sentences in a separate document, read them as a list to identify the shared pattern, and revise each specifically for that pattern before re-checking. The score will typically drop after meaningful revision, and more importantly, the revised passages will be stronger than the originals. Contact BestHumanize to ask about using the platform as part of a systematic writing development practice, including how to track detection scores across multiple documents over time to identify the genre-specific and habit-specific patterns that are most affecting your writing's statistical profile.
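
For the score-tracking idea mentioned above, a plain log of (genre, score) pairs is enough to start. The sketch below assumes you record each document's detection score yourself as a 0 to 100 percentage; the log entries are invented for illustration and the function name is hypothetical.

```python
from collections import defaultdict
from statistics import mean

def average_score_by_genre(records):
    """records: iterable of (genre, score) pairs; returns the mean score per genre."""
    by_genre = defaultdict(list)
    for genre, score in records:
        by_genre[genre].append(score)
    return {genre: mean(scores) for genre, scores in by_genre.items()}

# Hypothetical log, invented for illustration; real scores come from your detector.
log = [("essay", 62), ("essay", 48), ("report", 71), ("essay", 40), ("report", 69)]
print(average_score_by_genre(log))
```

A genre whose average stays high across several documents points to a habit tied to that genre, which is a more useful revision target than any single flagged draft.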

Conclusion

AI content detection tools measure real properties of writing that distinguish AI-generated text from human writing. Those properties, particularly perplexity and burstiness, are also properties that distinguish generic, predictable prose from distinctive, engaging prose. Using detection scores as writing feedback rather than as verdicts converts a source of anxiety into one of the most systematic style diagnostics available to any writer. The practical improvement path is to run your draft through a sentence-level detection tool, read the highlighted sentences as a curated sample of your weakest passages, identify the pattern they share, and revise specifically for that pattern. The revised passages will be less detectable and better written, because the detection tools are measuring the same properties that distinguish memorable writing from forgettable writing.

Frequently Asked Questions

Can a detection score tell me which specific words to change?

A document-level score cannot. Sentence-level detection output identifies the specific sentences that most contributed to the overall score, but not the specific words within those sentences. Reading the highlighted sentences as a set and identifying what they have in common, whether that is similar length, similar structure, similar vocabulary, or similar tone, gives you more useful guidance than any word-level suggestion. The pattern is the target, not the individual word.

Will using a grammar correction tool make my writing score higher on AI detectors?

Yes, in many cases. Grammar correction tools systematically replace unusual word choices with more common alternatives and fix sentence structures toward grammatical regularity. Both changes reduce perplexity. The practical balance is to use grammar tools for error correction, not for style replacement: fix actual errors, but preserve word choices and sentence structures that are distinctively yours, even if unconventional.

How often should I run detection checks on my writing?

For writing that will be evaluated by detection tools in institutional or professional contexts, check twice: once at the end of a first complete draft and once after your substantive revision is complete. The first check identifies structural patterns to address during revision. The second confirms whether the revision successfully changed those patterns. For writing where you use detection mainly as a style development tool, check once per finished document and compare results across multiple documents to track whether your patterns are shifting over time.

Is it possible to write too high on the perplexity scale?

Yes. Very high perplexity can make writing difficult to follow. The unpredictability that characterizes human writing is calibrated to serve the reader: unexpected word choices create emphasis, sentence-length variation creates rhythm, and concrete specificity creates clarity. Writing that is unpredictable for its own sake without serving any of those goals is hard to read. The practical target is writing where the perplexity clearly reflects a specific human intelligence making specific choices, not so high that the reader loses the thread.

Does detection feedback apply equally to all genres?

No. Detection tools are calibrated primarily on academic essays, blog posts, articles, and professional documents. Burstiness feedback is most useful for expository and persuasive writing. Perplexity feedback is most useful for any writing where vocabulary choice matters to the reader. For very short documents under 250 words, detection scores are generally unreliable as style feedback because the sample size is too small for the statistical measures to be meaningful.

This guide reflects the methodology and writing improvement practices of AI content detection tools as of March 2026. Detection tool models update frequently. Always test your own writing against the specific tools used in your evaluation context rather than relying on general detection principles alone.