You wrote every word yourself. You researched, drafted, revised, and submitted. Then an AI detector flagged your content as likely machine-generated. This experience, which is increasingly common in 2026 across academic, professional, and publishing contexts, is not a random malfunction. It is the predictable result of specific, technically explainable mechanisms that cause detection systems to misclassify genuine human writing. Understanding those mechanisms is the difference between feeling arbitrarily accused and being able to respond to a false flag with precision and evidence. Why AI detection false positives are not random errors but the structural result of how statistical detection systems work confirms that false positives are one of the central reasons AI detection still requires human judgment, not incidental failures, but predictable outcomes of pattern-matching systems applied to the full diversity of real human writing.

This article explains the technical reasons why human-written content gets flagged by AI detectors not at a surface level, but at the level of the underlying mechanisms that produce these outcomes. It covers: why the distributions of human and AI writing overlap in ways that make any classification boundary mathematically imperfect; the specific content types and writing characteristics that fall within the zone of overlap most frequently; why Vanderbilt University disabled Turnitin's AI detector entirely; how the base rate problem means even accurate detectors produce more false positives than true positives in low-AI-use environments; and what the existence of these mechanisms means for how detection results should be interpreted and acted upon.

Key Takeaways

False positives are not a sign of tool malfunction; they are a mathematical necessity. The statistical features that distinguish AI-generated text from human-written text exist on a continuum, not as two cleanly separable populations. Any classification boundary drawn through the overlapping feature space will inevitably misclassify some human writing as AI. This is not fixable by improving the tool it is inherent to the classification problem itself. The technical foundations of AI content detection and why overlapping feature distributions make false positives structurally unavoidable confirm that no detection system can simultaneously achieve zero false positives and zero false negatives, reducing one error type always increases the other.

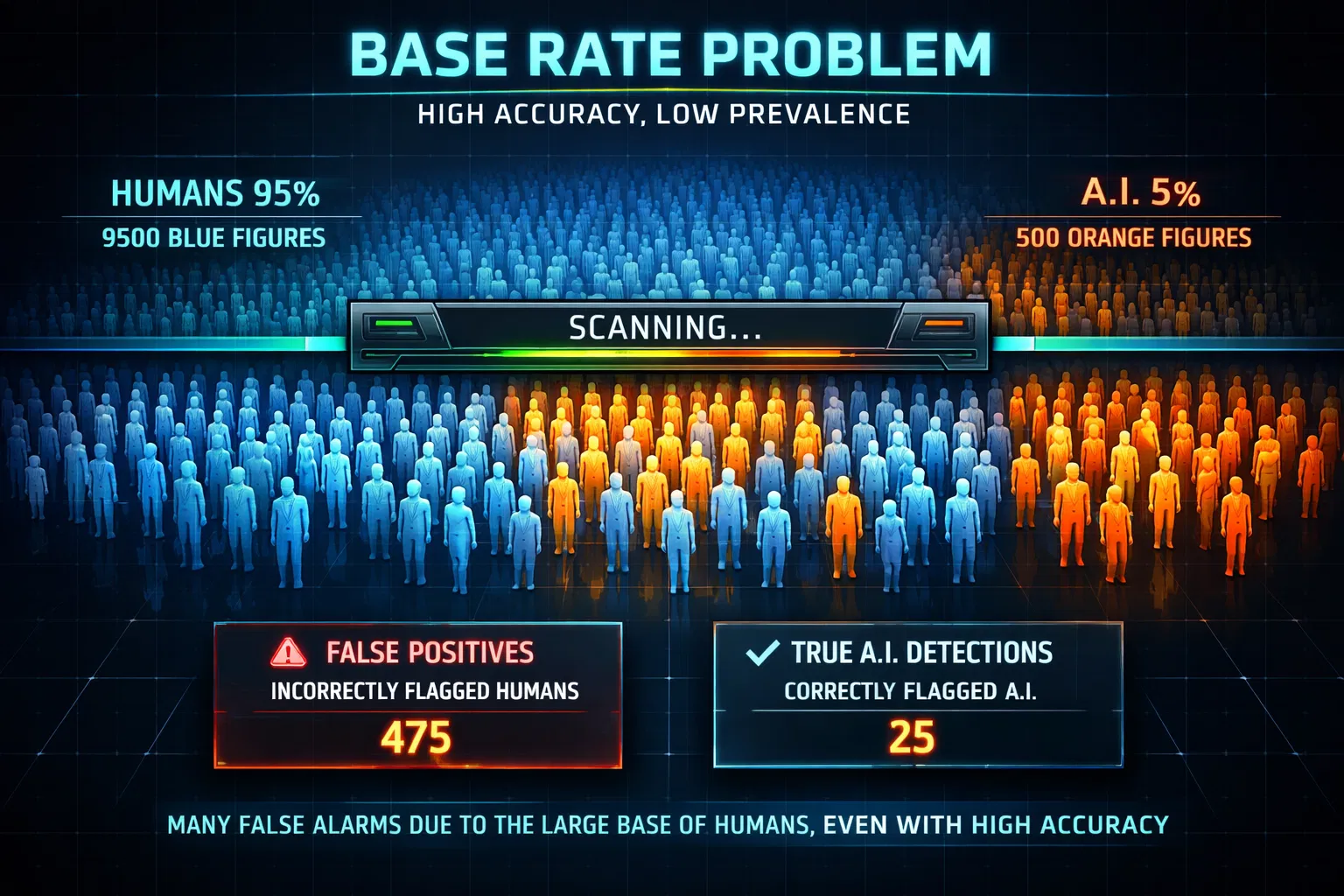

The base rate problem amplifies false positive rates far beyond what vendor accuracy claims suggest. In an environment where only 5% of submitted content uses AI, a detector with 99% accuracy and 1% false positive rate will produce more false flags on human writing than correct identifications of AI writing. This is a statistical reality that most institutional users of detection tools do not account for, and it means that in high-integrity environments, the majority of flagged content may be genuinely human-written. Why the base rate problem means even highly accurate AI detectors produce disproportionate false accusations in low-AI-use academic environments confirms that threshold selection and base rate assumptions are among the most consequential and least discussed variables in real-world detection accuracy.

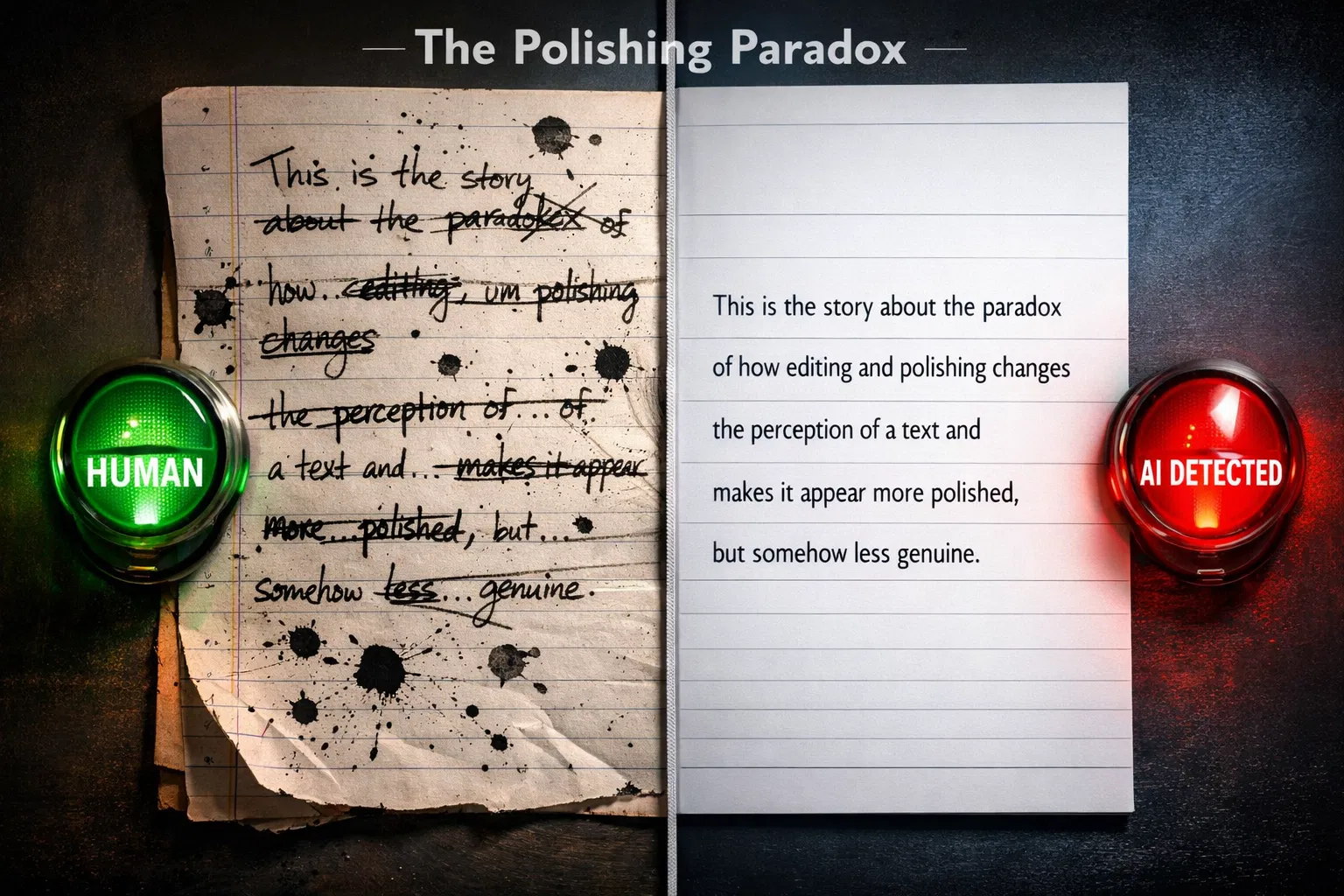

Polished, well-edited human writing is more likely to be flagged than rough, idiosyncratic writing. This is one of the most counterintuitive findings in the false positive literature: the revising, editing, and refining that produce high-quality prose also remove the idiosyncratic word choices, irregular sentence rhythms, and grammatical variation that detection tools associate with human authorship. The better the writing, the lower its perplexity score and the higher its resemblance to AI output.

Famous texts, including the US Constitution, the Declaration of Independence, and passages from classic literature, score as AI-generated under perplexity-based detection not because they were machine-written, but because they are so widely reproduced that language models have memorised their word sequences, producing artificially low perplexity scores. How training data contamination causes famous texts and widely reproduced content to score as AI-generated under perplexity-based detection methods documents this as a structural limitation of perplexity-based detection that cannot be corrected through threshold adjustment alone.

Grammar tools, including Grammarly, spell-check, and autocorrect shift detection scores by correcting idiosyncratic phrasing, reducing error variation, and imposing grammatical regularity, all of which lower perplexity and increase the likelihood of a false positive. Writers who use these tools extensively in their drafts may unknowingly move their writing into the detection zone without adding any AI-generated content. Using AI text humanizer tools, specifically designed to restore natural writing variation and reduce the risk of false positives, can counteract this effect, but the underlying cause is the editing process itself, not the content's origin.

The Statistical Foundation: Why False Positives Are Unavoidable

Core Insight: AI detectors are not authorship verifiers. They are probability estimators trained to find statistical patterns. When the statistical patterns of human writing overlap with those of AI writing — which they inevitably do, because both are produced by human language — those overlapping texts will be misclassified. This is not a calibration error. It is the mathematical structure of the problem. |

To understand why false positives are unavoidable, start with what AI detectors actually measure. Every major detection tool evaluates text against a set of statistical features, primarily perplexity (how predictable the word choices are relative to a language model's expectations) and burstiness (how much sentence length and structure vary across the document), and classifies the text based on where it falls relative to a learned decision boundary separating the human-writing feature space from the AI-writing feature space. The classifier does not know who wrote the text. It does not verify authorship. It estimates which side of the boundary the text's statistical profile falls on. How AI detection tools use statistical pattern analysis rather than authorship verification, and why this approach produces unavoidable classification errors on human writing, confirms that false positives occur because detection tools are statistical instruments, not intent readers, and when the thresholds and assumptions are not well calibrated for a specific type of writing, false positives are the predictable result.

The critical issue is that the statistical features of human writing and AI writing do not form two cleanly separable populations. They overlap. Some forms of human writing are highly predictable in their word choices: formal academic prose, technical documentation, and standardised business writing. Some AI-generated writing exhibits high variation, particularly when the generating model was prompted to change its style or when the output was lightly edited. The overlapping region between these populations is not small or incidental. It includes significant proportions of both populations. Any classification boundary drawn through this space will necessarily misclassify texts on both sides of the overlap, producing false positives on the human side and false negatives on the AI side.

This means there is no technically achievable AI detection system that produces zero false positives. The question is not whether false positives will occur, but how many there will be and which populations of human writers will bear the largest share. The answer, consistently across all major detection platforms and independent studies, is: writers whose work falls in the zone of overlap. Writers with formal, structured styles. Writers using non-native English. Writers who edit their work extensively. Writers working in technical or scientific domains. Precisely, the writers whose work most needs to be evaluated fairly.

Seven Technical Mechanisms That Cause False Positives

Mechanism 1: The Base Rate Problem

The base rate problem is the most consequential and least understood source of false positive inflation in AI detection. It works as follows: suppose an institution uses a detection tool that achieves 99% accuracy, meaning it correctly identifies 99% of AI-generated texts and misclassifies only 1% of human-written texts. Suppose further that in this institution, 5% of submitted work is AI-generated and 95% is genuinely human-written. For every 100 documents submitted, the detector will correctly flag 4.95 of the 5 AI documents and incorrectly flag 0.95 of the 95 human documents. Of the approximately 5.9 total flags, only 4.95 reflect actual AI use, meaning roughly 16% of flags are false positives, despite the tool's 99% accuracy.

Now extend this to a more realistic scenario: in a high-integrity academic programme where only 2% of submissions use AI. With the same 99% accurate, 1% false-positive rate tool, 2 AI documents were correctly flagged, and 0.98 human documents were incorrectly flagged. Of the 2.98 total flags, 33% are false positives. At 1% AI use, over half of all flags would be on human-written work. The base rate problem means that the detection context, not just the tool's accuracy, determines the proportion of flags that are wrongful accusations. In environments with low AI use and high writing quality, the majority of flags may be false positives regardless of how accurate the tool claims to be.

Mechanism 2: Overlapping Feature Distributions

The second mechanism is the fundamental statistical overlap described above. Perplexity scores for human writing and AI writing are not two distinct, non-overlapping ranges with a clear gap between them. There are two distributions that overlap substantially in the middle, with some human writing falling in the low-perplexity zone associated with AI generation and some AI writing falling in the higher-perplexity zone associated with human authorship. The detection tool draws a line through this overlap. Human texts on the AI side of the line are false positives. There is no way to draw this line that avoids all of them without simultaneously missing all AI texts on the human side.

What determines which human texts fall in the AI-like zone? Primarily: formal writing style, technical vocabulary, consistent register, reduced sentence length variation, and the characteristics associated with careful editing and revision. In other words, the writing characteristics that educational systems teach students to develop, that professional contexts reward, and that grammar tools enforce are precisely the characteristics that shift human writing into the AI detection zone.

Mechanism 3: Training Data Contamination

A specific and counterintuitive source of false positives affects famous texts and widely reproduced content. Perplexity-based detection works by asking how surprised a language model would be by each word in the sequence. If the exact text (or texts very similar to it) appeared in the language model's training data, the model will not be surprised at all, producing a very low perplexity score. This is training data contamination: the model has seen the text before, so it assigns high probability to each word, making the text score as AI-like even if it predates any AI system. documents cases where the US Constitution, the Declaration of Independence, and passages from well-known literature were flagged as AI-generated by perplexity-based tools, not because any AI produced them, but because they are so thoroughly represented in LLM training data that the model assigns near-maximum probability to their word sequences.

The practical implication extends beyond historical documents. Any content that has been widely published, frequently cited, or reproduced across many web pages may suffer partial contamination of its training data, with the most-reproduced phrases within that content receiving artificially low perplexity scores. Academic writing in well-established fields, where the conventional phrasings of methods sections, literature reviews, and conclusions are highly standardized across thousands of papers, is particularly vulnerable to this effect.

Mechanism 4: The Polishing Paradox

One of the most counterintuitive sources of false positives is the effect of editing and revision. When a human writer drafts a first version, it is idiosyncratic: word choices reflect the writer's particular vocabulary, sentence structures vary with the rhythm of their thinking, and grammatical constructions reflect their natural voice. These idiosyncrasies produce greater perplexity, as the writing is unpredictable in ways that characterise human authorship.

When the same writer revises that draft, correcting errors, smoothing awkward phrasing, strengthening argument structure, removing redundancy, and polishing transitions, they are systematically reducing idiosyncrasy. They replace unusual word choices with clearer ones. They regularise sentence rhythms. They make the text more consistent in the register. Every one of these edits moves the text toward lower perplexity and lower burstiness, closer to the statistical profile of AI-generated text. The first draft would likely pass detection; the final polished version may not. Ironically, higher writing quality correlates with a higher false-positive risk, because the editing process that produces quality also removes the statistical markers of human authorship.

Mechanism 5: Grammar Tool Interference

Grammar checkers, including Grammarly, spell-check, and autocorrect tools, intervene in the writing process in ways that shift detection scores not by adding AI content, but by removing the statistical variation that distinguishes human writing from machine output. When Grammarly corrects an awkward grammatical construction, it replaces a low-probability word sequence with a higher-probability one. When spell-check corrects a misspelling, it eliminates an unexpected character sequence. When autocorrect standardises punctuation, it reduces the punctuation variation that characterises human typing. Each intervention, individually minor, collectively moves the text toward the regularity and predictability that AI detectors flag. How AI detection tools have evolved in 2026 and why heavily grammar-checked and edited human writing increasingly falls within the detection zone across multiple platforms confirms that this effect is documented across platforms. A writer who uses grammar tools extensively on every sentence may see their detection scores shift significantly compared to the same content with natural writing variation preserved.

This is not an argument against using grammar tools. It is an argument against using detection scores as if they reflected something about the content's origin rather than its statistical properties. A piece of human-written content that has been thoroughly grammar-checked is not AI-generated; it has simply been edited to a level of grammatical regularity that overlaps with the AI detection zone. The detection score reflects the editing history, not the authorship.

Mechanism 6: Short Text Unreliability

Detection tools need a statistical signal to function. A statistical signal requires sufficient data. Short texts, anything under approximately 250–500 words, depending on the tool, do not provide enough data for the classifier to make a reliable determination. With small samples, random variation dominates: a few unusual word choices can produce a high perplexity score that makes a text look human-written, or a run of common words can produce a low perplexity score that makes it look AI-generated, purely by chance. The classification boundary, which is calibrated on longer texts, becomes unreliable when applied to short content.

Most major detection platforms explicitly acknowledge this limitation. Turnitin requires a minimum of 300 words for reliable results. GPTZero's documented accuracy applies to documents of sufficient length to generate meaningful statistical patterns. ZeroGPT's false-positive rate is already the highest among major tools at 20–28%, and it rises substantially for content under 250 words. Despite these documented limitations, short-form content is routinely submitted to detection tools in academic and professional contexts, producing detection results that approach random chance in their reliability.

Mechanism 7: Threshold Selection and Calibration Opacity

The final mechanism is less about the text and more about how detection results are reported and interpreted. Every detection tool produces a continuous probability score rather than a binary answer. A score of 73% AI does not mean the text is 73% machine-written; it means the tool's model assigns 73% probability to the hypothesis that the text originated from an AI system. Converting this continuous score to an actionable classification requires choosing a threshold: texts above 80% AI are flagged, say, or texts above 50% AI are flagged. Meta-analysis of AI detection accuracy studies, revealing how threshold selection, dataset composition, and calibration decisions shape real-world false positive rates, confirms that vendor-reported accuracy figures are almost always calculated at specific threshold settings that may differ from the default user-facing thresholds, meaning that the accuracy claims on vendor websites may not reflect the false positive rates that users experience in practice.

Vanderbilt University's decision to disable Turnitin's AI detection feature illustrates this problem concretely. When the university estimated the effect of applying Turnitin's detection to its 75,000 annual paper submissions using Turnitin's claimed false-positive rate of under 1%, it calculated that approximately 750 papers per year would be incorrectly flagged as AI-generated. At a higher but still plausible false-positive rate of 3–5%, the figure would be 2,250-3,750 wrongful flags annually. Vanderbilt concluded that the harm from wrongful accusations exceeded the benefit of AI detection and disabled the feature entirely, a decision documented and reported in higher education media.

Content Types at Elevated False Positive Risk

The following content types consistently yield elevated false-positive rates across multiple detection platforms. The elevation is not random; each type falls in the AI detection zone for specific, technically explainable reasons that map directly to the mechanisms described above.

Content Type | Why It Scores AI-Like | False Positive Risk Level |

Formal academic writing | Structured argument, consistent register, discipline-specific vocabulary — all overlap with the statistical profile of AI academic text | High — consistently elevated across all major detectors |

Technical documentation | Standardised phrasing, procedural structure, precise terminology without variation — matches AI output trained on similar corpora | High — particularly pronounced on step-by-step instructional content |

Heavily edited prose | Multiple revision passes remove idiosyncratic phrasing, reduce sentence length variation, and homogenise word choice | High — the more polished the draft, the lower the perplexity score |

Non-native English writing | Simpler vocabulary, more predictable grammar, reduced idiomatic usage — identical to the statistical markers detectors associate with AI | Very high — false positive rates 3–12x above native English baseline |

Short texts under 300 words | Insufficient statistical signal; small samples are dominated by random variance and produce classification results approaching chance | High — all major detectors recommend minimum 300–500 words |

Writing processed through grammar tools | Grammarly, spell-check, and similar tools correct idiosyncratic phrasing, reduce errors, and impose grammatical regularity — reducing perplexity | Moderate to high — depends on depth of grammar tool intervention |

Highly structured essay formats | Five-paragraph structure, explicit transitions, predictable organisation — reflects academic writing conventions that AI was trained to replicate | Moderate to high — particularly common in standardised academic formats |

Business reports and executive summaries | Formal register, consistent terminology, structured presentation — professionally polished content that overlaps with AI output profiles | Moderate — elevated for highly templated or formulaic report formats |

False-positive risk levels represent a consistent finding across multiple independent comparative studies. Specific rates vary by tool, content domain, text length, and writer background. All categories carry a higher risk than casual native-English writing on general topics, which represents the population on which most detection tools were calibrated.

What These Mechanisms Mean for Interpreting Detection Results

Understanding the technical mechanisms behind false positives fundamentally changes how detection results should be interpreted. A flag from an AI detection tool is not a finding of fact about who wrote the content. It is a statistical signal that the content's measurable properties fall within the range associated with AI-generated text. In many cases, particularly for the content types identified above, that signal is produced by features of human writing, not by AI authorship. confirms that every major detection platform explicitly acknowledges in its documentation that detection scores are probability estimates, not proof, and that human judgment is required before any consequential decision is made.

For Institutional Users

Never use a single detection score as the sole basis for an academic integrity decision. The base-rate problem means that, in most institutional contexts, a significant proportion of flags are false positives. Every flag should initiate a human review process that considers the student's writing history, the assignment context, the specific passages identified, and the documented limitations of the tool.

Run any implementation through a calibration check before deployment. Test the tool on a sample of known human writing from your specific student population, your students' previous submissions, in-class writing samples, or draft versions of the flagged assignments. Measure the false positive rate on content you know to be human-written before applying the tool to consequential decisions.

Disclose the use and limitations of the detection tool to students. Students who know they are subject to detection and understand the tool's documented false-positive rates can protect themselves through process documentation, the single most effective countermeasure to wrongful flags. Transparency about detection also reduces the chilling effect that causes ESL writers and students with formal writing styles to avoid developing sophisticated expression.

Calibrate your response thresholds to the base rate in your context. A threshold appropriate for an online publisher verifying freelancer submissions (where AI use may be high) is not appropriate for an academic integrity proceeding in a high-integrity programme (where AI use is likely low and the majority of flags may be false positives).

For Writers and Content Creators

Understand that a false positive is a statement about your writing's statistical properties, not about your authorship. If your writing is formal, technical, heavily edited, or produced using grammar tools, it may score as AI-like regardless of how you wrote it. This is not a reflection of writing quality; it is a reflection of the calibration limitations of statistical detection. Tools that help restore natural writing variation can shift scores by addressing the measurable features that trigger detection, even on genuinely human-written content.

Document your writing process regardless of whether you use AI. Timestamped drafts, research notes, version histories, and writing session logs provide evidence that resolve false-positive disputes by demonstrating the incremental, research-grounded human process that statistical detection tools cannot directly observe.

If you are flagged, respond with the technical evidence. Cite the specific mechanisms described in this article: the base rate problem, overlapping feature distributions, the polishing paradox, and short text unreliability in your response. Frame your defense around what the detection score actually measures (statistical properties) versus what it is being treated as measuring (authorship), and present your process documentation as the affirmative evidence of genuine authorship that the detection score cannot provide.

Conclusion

The technical explanation for why human content gets flagged by AI detection tools is not complicated, but it is consistently overlooked in the conversations that matter most: academic integrity proceedings, editorial rejections, and professional assessments. Detection tools measure statistical features of text. Those features overlap substantially between human and AI writing. Any classification boundary drawn through that overlap will produce false positives for human writing that falls within the AI-like zone, including formal academic prose, technical documentation, heavily edited work, non-native English writing, and short texts. The base rate problem means that even a highly accurate tool will produce proportionally more false positives than true positives in environments with low AI use. The polishing paradox states that better writing quality is associated with a higher false-positive risk. Grammar tool interference means that the editing process itself moves writing toward the detection zone. These are not arguments against using detection tools. They are arguments for treating detection results as a single probabilistic input to a human judgment process, exactly as the tools' own documentation recommends and exactly as institutions like Vanderbilt concluded when they saw the math.

Frequently Asked Questions

Why does polished, well-written content get flagged more often than rough drafts?

The editing and revision process that produces polished writing systematically reduces the statistical properties that detection tools associate with human authorship. Revision replaces idiosyncratic word choices with clearer alternatives, regularises sentence rhythms, smoothes awkward phrasing, and homogenises register. Each of these changes lowers perplexity (making word choices more predictable) and reduces burstiness (making sentence length more consistent), moving the text toward the statistical profile that detectors associate with AI-generated text. A first rough draft, with its natural irregularities and idiosyncrasies intact, will typically score as more human than the polished version of the same content.

What is the base rate problem, and why does it matter?

The base rate problem describes how false-positive rates multiply in practice, depending on how common the thing being detected is in the population being tested. A detector with 99% accuracy and a 1% false-positive rate will produce a specific ratio of correct to incorrect flags that depends entirely on the underlying frequency of AI use. In an environment where 5% of submissions use AI, roughly 16% of flags are false positives. At 2% AI use, a third of all flags are false positives. At 1% AI use, over half of all flags may be wrongful accusations. This means that institutional deployment of detection tools in high-integrity environments where AI use is genuinely rare can lead to situations in which the majority of flags are issued to innocent writers, regardless of the tool's headline accuracy figure.

Why did Vanderbilt University disable Turnitin's AI detection feature?

Vanderbilt University calculated that applying Turnitin's AI detection to its approximately 75,000 annual paper submissions would result in roughly 750 wrongful flags per year, based on Turnitin's claimed false-positive rate of under 1%. The university concluded that the harm from those wrongful accusations to students whose genuine work would be treated as AI-generated was not justified by the benefit of detecting the proportion of AI submissions the tool would correctly identify. The decision reflected the application of the base-rate problem at the institutional scale: even a low false-positive rate yields a large absolute number of wrongful accusations when applied to a large submission volume.

Does using Grammarly cause AI detection flags?

Using grammar tools, including Grammarly, spell-check, and autocorrect, on human-written text can shift detection scores toward higher AI probability, because these tools correct idiosyncratic phrasing, reduce grammatical variation, and standardise punctuation, all of which lower perplexity and increase the regularity that detection tools associate with machine-generated text. This does not mean grammar tools should be avoided, nor does it mean that grammar-checked content is AI-generated. It means that detection scores reflect the statistical properties of the final text, including the effects of any tools applied during the editing process, not just its ultimate authorship.

What is the most reliable way to prove human authorship when flagged?

The most reliable evidence of human authorship is a documented writing process, timestamped version history, research notes, intermediate drafts, and a record of the incremental development of the work over time. This evidence demonstrates what the detection score cannot measure: the process that produced the text. A document that developed across multiple writing sessions, grounded in specific research sources, and featuring visible revision from early draft to final version presents a pattern of authentic human composition that statistical detection cannot directly contradict. Process documentation, rather than arguments about detection tool accuracy, is the most effective affirmative evidence for resolving false-positive disputes.

This article reflects the state of AI content detection technology and the published research on false positive mechanisms as of March 2026. Detection tool performance, institutional policies, and the technical literature on this topic continue to evolve rapidly.